Introduction

Model Monitoring & Drift Detection Tools help organizations continuously track the performance, reliability, and fairness of deployed machine learning models. These platforms detect changes in model behavior, data distribution, or prediction quality over time, which helps keep AI systems accurate and compliant. With AI adoption accelerating across industries, monitoring has become critical to prevent operational risks and maintain trust in automated decisions.

In real-world settings, these tools are used to monitor credit scoring models in financial institutions, detect anomalies in manufacturing predictive maintenance, track recommendation engines in e-commerce, safeguard healthcare diagnostic AI, and oversee fraud detection systems in insurance and banking.

When evaluating a tool, buyers should consider:

- Real-time monitoring and alerting capabilities

- Drift detection methods (data vs. concept drift)

- Scalability and multi-model support

- Integration with MLOps pipelines

- AI explainability and transparency features

- Security, compliance, and audit capabilities

- Visualization and reporting dashboards

- Pricing model and total cost of ownership

- Support, onboarding, and community strength

- Customization and API access for advanced use cases

Best for: Data science teams, ML engineers, MLOps teams, enterprises deploying multiple models across finance, healthcare, and retail.

Not ideal for: Organizations with minimal ML deployment or those relying on simple scripts without continuous model monitoring; lightweight solutions or manual checks may suffice.
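To make the data-drift item in the checklist above more concrete, here is a minimal sketch of one commonly used statistic, the Population Stability Index (PSI), written in plain Python. It is illustrative only and is not taken from any tool in this list. The function name `psi`, the 10-bin layout, and the 0.1 / 0.25 cut-offs (a widely quoted rule of thumb, not a standard) are assumptions.

```python
import math
import random

def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples."""
    lo, hi = min(reference), max(reference)
    # Bin edges come from the reference sample; fall back to 1.0 if it is constant.
    width = (hi - lo) / bins or 1.0

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        total = len(values)
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / total, eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))

# Synthetic data: one sample from the same distribution, one with a shifted mean.
rng = random.Random(0)
reference = [rng.gauss(0.0, 1.0) for _ in range(5000)]
same = [rng.gauss(0.0, 1.0) for _ in range(5000)]
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]

psi_same = psi(reference, same)
psi_shifted = psi(reference, shifted)

# Rule-of-thumb reading (an assumption): < 0.1 stable, > 0.25 significant shift.
print(f"same distribution: {psi_same:.3f}, shifted mean: {psi_shifted:.3f}")
```

A statistic like this only covers data drift on a single numeric feature. Concept drift, where the relationship between inputs and the target changes, generally needs ground-truth labels to detect.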

Key Trends in Model Monitoring & Drift Detection Tools

- Increasing use of AI-driven automated drift detection across data and predictions.

- Adoption of unified MLOps platforms integrating monitoring, retraining, and version control.

- Cloud-first and hybrid deployment models for flexibility in enterprise environments.

- Real-time streaming data support for rapid anomaly detection.

- Enhanced explainability tools to satisfy regulatory and ethical compliance.

- Integration with CI/CD pipelines for model retraining and automated deployment.

- Open-source SDKs for custom monitoring workflows alongside enterprise solutions.

- AI-assisted root cause analysis for prediction degradation.

- Emphasis on multi-tenant and multi-model observability dashboards.

- Shift toward subscription-based and usage-based pricing for SMB adoption.
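As a rough illustration of what drift-driven root cause analysis can look like, the sketch below ranks features by a two-sample Kolmogorov-Smirnov statistic so that the most-shifted inputs surface first. It is a simplified stand-in in plain Python, not a description of how any vendor implements the feature. The names `ks_statistic` and `rank_features_by_drift` and the sample feature names are hypothetical.

```python
import random

def ks_statistic(a, b):
    """Two-sample KS statistic: the largest gap between the two empirical CDFs."""
    a, b = sorted(a), sorted(b)
    i = j = 0
    d = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        # Advance past every value <= x in both samples (this handles ties).
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        d = max(d, abs(i / len(a) - j / len(b)))
    return d

def rank_features_by_drift(reference, current):
    """Return (feature, KS statistic) pairs, most-drifted first."""
    scores = {name: ks_statistic(reference[name], current[name]) for name in reference}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Synthetic example: "income" shifts by one standard deviation, "age" does not.
rng = random.Random(42)
reference = {
    "income": [rng.gauss(50, 10) for _ in range(2000)],
    "age": [rng.gauss(40, 8) for _ in range(2000)],
}
current = {
    "income": [rng.gauss(60, 10) for _ in range(2000)],  # simulated shift
    "age": [rng.gauss(40, 8) for _ in range(2000)],      # unchanged
}

ranking = rank_features_by_drift(reference, current)
for feature, score in ranking:
    print(f"{feature}: {score:.3f}")
```

Ranking by a drift statistic only points to where inputs changed; it does not by itself establish that the change caused the degradation.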

How We Selected These Tools (Methodology)

- Evaluated market adoption and reputation in the ML monitoring space.

- Assessed breadth of features, including drift detection, alerting, and reporting.

- Reviewed reliability and performance in production-scale environments.

- Examined security posture, compliance, and audit readiness.

- Verified integration capabilities with popular ML frameworks and cloud platforms.

- Considered customer fit across different company sizes and industry verticals.

- Analyzed vendor support, community engagement, and documentation quality.

- Prioritized solutions offering scalability and extensibility for multi-model deployments.

- Included both enterprise-grade and open-source developer-focused tools.

- Compared pricing models relative to features and value delivered.

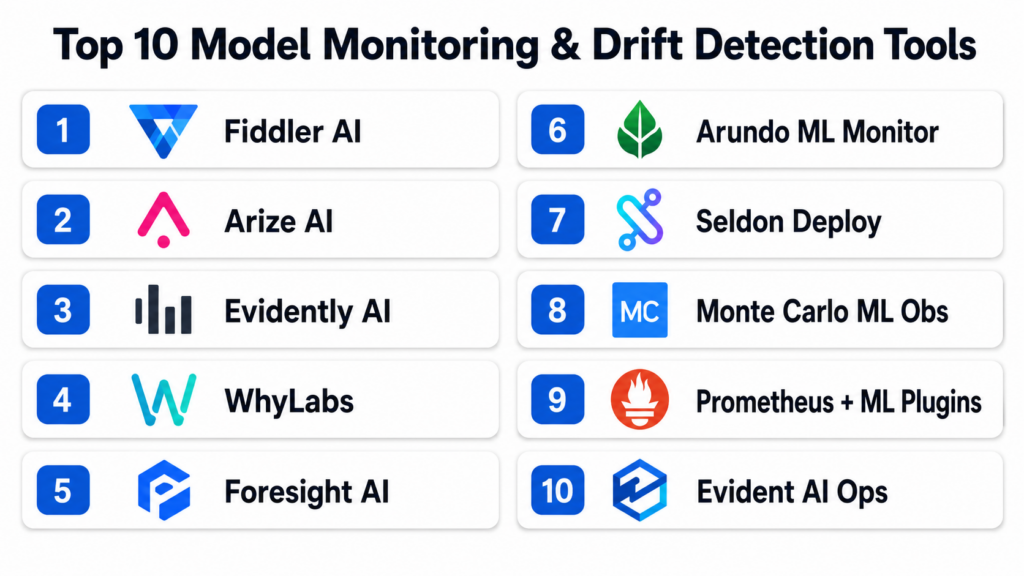

Top 10 Model Monitoring & Drift Detection Tools

#1 — Fiddler AI

Short description: Fiddler AI enables enterprises to monitor ML models for performance, fairness, and drift. It’s designed for data science and MLOps teams seeking end-to-end model observability with automated alerts.

Key Features

- Real-time model performance monitoring

- Drift detection on features and predictions

- Explainability dashboards for fairness audits

- Multi-model support across frameworks

- Integration with cloud and on-prem pipelines

- Root cause analysis for degraded models

Pros

- Strong explainability tools for compliance

- Enterprise-ready with robust security and audit logs

Cons

- Pricing can be high for smaller teams

- Steeper learning curve for non-technical stakeholders

Platforms / Deployment

- Web, Cloud, Hybrid

Security & Compliance

- SOC 2, ISO 27001, SSO/SAML, MFA

Integrations & Ecosystem

Fiddler integrates with popular ML frameworks and MLOps platforms.

- Python SDK, REST APIs

- Cloud storage connectors

- CI/CD pipeline integration

- Slack/email alerts

Support & Community

Comprehensive documentation, onboarding support, and enterprise-grade SLAs. Active user community for knowledge sharing.

#2 — Arize AI

Short description: Arize AI helps ML teams detect performance issues and concept drift across production models. It targets enterprise ML teams and is widely used in financial and retail AI deployments.

Key Features

- Data and prediction drift detection

- Model version comparison

- Real-time alerting and notifications

- Visualizations for root cause analysis

- Multi-framework compatibility

Pros

- Strong visualization and reporting tools

- Supports multiple deployment environments

Cons

- Can be complex for small teams

- Some advanced analytics require additional configuration

Platforms / Deployment

- Web, Cloud

Security & Compliance

- SOC 2, SSO/SAML, encryption, audit logging

Integrations & Ecosystem

Arize offers Python SDKs and REST APIs, and integrates with Snowflake, BigQuery, and AWS pipelines.

- CI/CD triggers

- Slack/email notifications

- Jupyter notebook integrations

Support & Community

Enterprise support available, detailed guides and documentation, active customer forums.

#3 — Evidently AI

Short description: Evidently AI provides open-source monitoring of ML models, including data and concept drift. It is ideal for developers and SMBs seeking lightweight monitoring with strong visualization.

Key Features

- Drift detection and statistical reporting

- Model performance dashboards

- Automated report generation

- Open-source extensibility

- Python-native SDK

Pros

- Open-source and flexible

- Easy integration with Python ML pipelines

Cons

- Limited enterprise-grade features

- Self-hosted deployment may require setup effort

Platforms / Deployment

- Web, Self-hosted, Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python, pandas, scikit-learn

- Jupyter notebook visualizations

- API endpoints for custom integration

Support & Community

Active open-source community, GitHub issues, and discussion forums.

#4 — WhyLabs

Short description: WhyLabs specializes in automated monitoring for data quality and ML drift, focusing on observability for enterprise AI.

Key Features

- Continuous model monitoring

- Data quality and drift alerts

- Visualization dashboards

- Model version tracking

- API-driven notifications

Pros

- Enterprise-grade features with strong compliance

- Supports multi-model monitoring

Cons

- Advanced features may require professional services

- Smaller developer community than open-source tools

Platforms / Deployment

- Web, Cloud, Hybrid

Security & Compliance

- SOC 2, ISO 27001, SSO/SAML, MFA

Integrations & Ecosystem

- Python SDK, REST API

- Cloud connectors (AWS, GCP, Azure)

- Slack/email notifications

- MLOps pipeline integration

Support & Community

Enterprise-level support with onboarding and documentation. Community support is limited.

#5 — Foresight AI

Short description: Foresight AI provides ML model monitoring with predictive insights to anticipate performance degradation. It is suitable for financial and operational ML teams.

Key Features

- Drift detection and anomaly alerts

- Predictive degradation analysis

- Multi-model observability

- Dashboard visualizations

- API access for custom metrics

Pros

- Predictive insights for proactive interventions

- Customizable alerts and reporting

Cons

- Limited open-source support

- May require dedicated engineering resources

Platforms / Deployment

- Web, Cloud, Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- REST APIs

- Python and R SDKs

- Cloud platform connectors

- Integration with alerting systems

Support & Community

Professional support, onboarding, and technical guides.

#6 — Arundo ML Monitor

Short description: Arundo ML Monitor targets industrial and IoT models, providing drift detection and performance monitoring for mission-critical applications.

Key Features

- IoT and time-series model monitoring

- Drift detection for predictive models

- Real-time dashboards

- Root cause analytics

- Multi-model support

Pros

- Strong support for industrial applications

- Scalable and real-time monitoring

Cons

- Specialized for industrial use, not general ML

- Higher cost for small deployments

Platforms / Deployment

- Web, Cloud, Hybrid

Security & Compliance

- SOC 2; other standards not publicly stated

Integrations & Ecosystem

- REST APIs, SDKs

- Cloud storage connectors

- Dashboard embedding

- Notification system integration

Support & Community

Enterprise-grade support with dedicated account managers.

#7 — Seldon Deploy

Short description: Seldon Deploy offers model monitoring and operationalization for Kubernetes-based ML deployments. It is ideal for developers managing containerized models.

Key Features

- Kubernetes-native monitoring

- Data and prediction drift alerts

- Multi-model support

- Metrics collection and visualization

- Model version tracking

Pros

- Strong containerized deployment support

- Developer-focused extensibility

Cons

- Requires Kubernetes expertise

- Limited non-Kubernetes deployment options

Platforms / Deployment

- Web, Cloud, Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python SDK, REST APIs

- Prometheus/Grafana metrics

- CI/CD pipeline integration

- Kubernetes-native logging

Support & Community

Active developer community, documentation, commercial support available.

#8 — Monte Carlo ML Observability

Short description: Monte Carlo provides data and model observability with automated drift detection, focused on enterprises handling critical data pipelines.

Key Features

- Model and data drift alerts

- Data quality monitoring

- Dashboard visualizations

- Root cause analysis

- Multi-platform monitoring

Pros

- Strong enterprise-grade observability

- Automated reporting and alerts

Cons

- May be complex for small teams

- Enterprise pricing model

Platforms / Deployment

- Web, Cloud

Security & Compliance

- SOC 2, ISO 27001, SSO/SAML

Integrations & Ecosystem

- Cloud connectors, Python SDK

- API access for custom dashboards

- Alerting and notification integrations

Support & Community

Professional support, detailed guides, and enterprise onboarding.

#9 — Prometheus + ML Plug-ins

Short description: Prometheus with ML-specific plug-ins allows open-source monitoring of ML models and drift detection, favored by developer-centric teams.

Key Features

- Time-series monitoring for models

- Drift detection via metrics and alerts

- Open-source extensibility

- Integration with Grafana dashboards

- API-based custom metrics

Pros

- Open-source and flexible

- Strong community and ecosystem

Cons

- Requires engineering setup and maintenance

- Limited enterprise features out-of-the-box

Platforms / Deployment

- Web, Self-hosted, Cloud

Security & Compliance

- Varies / N/A

Integrations & Ecosystem

- Grafana dashboards

- REST APIs

- CI/CD integration

- Cloud connectors

Support & Community

Large open-source community, GitHub resources, community forums.
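One way a team might feed drift scores into Prometheus is to expose them as gauge metrics that Grafana can then chart and alert on. The sketch below renders Prometheus's text exposition format by hand using only the standard library; in practice an official client library would normally handle this. The metric name `model_feature_drift_score`, the label names, and the example values are hypothetical, and the sketch assumes label values contain no quotes, backslashes, or newlines.

```python
def render_drift_metrics(model, scores):
    """Render per-feature drift scores as Prometheus gauge samples."""
    name = "model_feature_drift_score"  # hypothetical metric name
    lines = [
        f"# HELP {name} Drift score per input feature.",
        f"# TYPE {name} gauge",
    ]
    # One sample per feature, sorted for stable output.
    for feature, score in sorted(scores.items()):
        lines.append(f'{name}{{model="{model}",feature="{feature}"}} {score}')
    return "\n".join(lines) + "\n"

text = render_drift_metrics("credit_scoring", {"income": 0.31, "age": 0.02})
print(text)
```

Serving this text from an HTTP endpoint that Prometheus scrapes, plus an alert rule on the gauge, would cover the "drift detection via metrics and alerts" pattern described above. The drift computation itself still has to be supplied by the team.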

#10 — Evident AI Ops

Short description: Evident AI Ops monitors ML models with automated drift alerts and predictive performance analytics for enterprise applications.

Key Features

- Drift detection and performance monitoring

- Predictive alerts

- Multi-model observability

- Visual dashboards

- API integration

Pros

- Enterprise-ready with advanced monitoring

- Automated insights for rapid intervention

Cons

- Smaller developer community

- Pricing details vary

Platforms / Deployment

- Web, Cloud, Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python SDKs, REST APIs

- Slack/email alerts

- Cloud platform connectors

Support & Community

Professional onboarding and documentation, technical support available.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Fiddler AI | Enterprise observability | Web | Cloud / Hybrid | Explainability dashboards | N/A |
| Arize AI | Multi-model monitoring | Web | Cloud | Version comparison & alerts | N/A |
| Evidently AI | Developer-friendly | Web | Cloud / Self-hosted | Open-source visualization | N/A |
| WhyLabs | Enterprise ML monitoring | Web | Cloud / Hybrid | Data quality & alerts | N/A |
| Foresight AI | Predictive ML insights | Web | Cloud / Hybrid | Predictive degradation alerts | N/A |
| Arundo ML Monitor | Industrial/IoT models | Web | Cloud / Hybrid | Time-series drift detection | N/A |
| Seldon Deploy | Kubernetes ML ops | Web | Cloud / Self-hosted | Kubernetes-native monitoring | N/A |
| Monte Carlo ML Observability | Enterprise pipelines | Web | Cloud | Automated root cause analysis | N/A |
| Prometheus + ML Plug-ins | Developer-centric | Web | Self-hosted / Cloud | Open-source metrics & dashboards | N/A |
| Evident AI Ops | Enterprise AI ops | Web | Cloud / Hybrid | Predictive performance analytics | N/A |

Evaluation & Scoring of Model Monitoring & Drift Detection Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| Fiddler AI | 9 | 8 | 8 | 9 | 9 | 8 | 7 | 8.30 |
| Arize AI | 8 | 8 | 8 | 8 | 8 | 8 | 7 | 7.85 |
| Evidently AI | 7 | 9 | 7 | 7 | 7 | 7 | 9 | 7.60 |
| WhyLabs | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.60 |
| Foresight AI | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| Arundo ML Monitor | 7 | 7 | 7 | 7 | 8 | 7 | 7 | 7.10 |
| Seldon Deploy | 8 | 7 | 8 | 7 | 8 | 7 | 7 | 7.50 |
| Monte Carlo ML Observability | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.60 |
| Prometheus + ML Plug-ins | 7 | 6 | 7 | 6 | 7 | 6 | 9 | 6.95 |
| Evident AI Ops | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |

Interpretation: Higher weighted totals indicate stronger overall value for enterprise and developer teams. Scores are comparative across features, integrations, usability, and enterprise readiness.
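For readers who want to reweight the categories for their own priorities, the sketch below shows how a weighted total can be computed from the column weights in the table header. It assumes the Weighted Total column is the weight-weighted sum of the seven category scores; the dictionary keys and the function name `weighted_total` are hypothetical labels for the table columns.

```python
# Column weights from the scoring table header, in percent.
WEIGHTS = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}

def weighted_total(scores):
    """Weighted average of 0-10 category scores using the table's weights."""
    assert sum(WEIGHTS.values()) == 100  # weights must cover the whole score
    # Integer percent weights keep the arithmetic exact until the final division.
    return sum(scores[k] * w for k, w in WEIGHTS.items()) / 100

# Category scores for Evidently AI, copied from the table row.
evidently = {"core": 7, "ease": 9, "integrations": 7, "security": 7,
             "performance": 7, "support": 7, "value": 9}

print(weighted_total(evidently))  # 7.6
```

Changing the values in `WEIGHTS` (for example, raising security for a regulated industry) and re-running the calculation for each row gives a ranking tailored to a specific team.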

Which Model Monitoring & Drift Detection Tool Is Right for You?

Solo / Freelancer

Open-source tools like Evidently AI or Prometheus with ML plugins offer lightweight monitoring with minimal overhead.

SMB

Evidently AI, Foresight AI, or WhyLabs provide cost-effective monitoring with easy integration into existing ML workflows.

Mid-Market

Arize AI, Fiddler AI, and Monte Carlo provide strong dashboards, alerts, and compliance features for growing ML teams.

Enterprise

Fiddler AI, WhyLabs, Monte Carlo, and Evident AI Ops excel in multi-model, multi-team observability with robust security and compliance.

Budget vs Premium

Open-source options reduce upfront costs but may need engineering effort. Premium solutions deliver full enterprise-grade features with support and compliance guarantees.

Feature Depth vs Ease of Use

Developer-focused tools prioritize extensibility and APIs, whereas enterprise solutions prioritize dashboards, alerts, and reporting for broader stakeholders.

Integrations & Scalability

Choose platforms that integrate with your cloud providers, MLOps pipelines, and alerting systems. Enterprise deployments require multi-model support and cross-team scalability.

Security & Compliance Needs

High-risk industries should prioritize SOC 2, ISO 27001, SSO/SAML, MFA, and audit logging. Open-source tools may need additional configuration to meet compliance requirements.

Frequently Asked Questions (FAQs)

1. What is model drift, and why does it matter?

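Model drift occurs when a machine learning model’s predictions degrade over time because the data or environment has changed. Data drift is a shift in the distribution of the model’s inputs, while concept drift is a change in the relationship between those inputs and the target. Monitoring for drift helps keep models accurate, reducing operational risk and maintaining trust.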
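Once ground-truth labels arrive, degradation of this kind usually shows up as falling accuracy. The following is a minimal sketch of a rolling-window accuracy check, assuming binary or categorical predictions with delayed labels. The class name `RollingAccuracyMonitor` and the window, baseline, and tolerance values are illustrative assumptions, not settings from any tool in this list.

```python
from collections import deque

class RollingAccuracyMonitor:
    """Track accuracy over the most recent labeled predictions."""

    def __init__(self, window=100, baseline=0.90, tolerance=0.05):
        self.outcomes = deque(maxlen=window)  # True for each correct prediction
        self.baseline = baseline              # accuracy measured at deployment
        self.tolerance = tolerance            # allowed drop before flagging

    def update(self, prediction, label):
        """Record one labeled prediction; return True if accuracy has degraded."""
        self.outcomes.append(prediction == label)
        return self.is_degraded()

    def accuracy(self):
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else None

    def is_degraded(self):
        # Wait for a full window so a few early errors do not trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy() < self.baseline - self.tolerance

monitor = RollingAccuracyMonitor(window=100, baseline=0.90, tolerance=0.05)
healthy = [monitor.update(1, 1) for _ in range(100)]   # all correct
degrading = [monitor.update(1, 0) for _ in range(20)]  # 20 recent misses
print(monitor.accuracy(), degrading[-1])
```

A fixed window trades responsiveness for stability: a smaller window flags problems sooner but is noisier.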

2. How quickly can these tools detect drift?

Detection depends on platform and configuration. Most modern tools offer real-time or near-real-time alerts to enable rapid intervention and prevent significant performance loss.

3. Do these tools support multiple ML frameworks?

Yes, top tools typically support popular frameworks like TensorFlow, PyTorch, scikit-learn, XGBoost, and more, with multi-model dashboards for comprehensive observability.

4. Are open-source options viable for enterprise use?

Open-source tools are flexible and cost-effective but may require engineering resources for deployment, scaling, and security compliance compared to commercial offerings.

5. What security features should I expect?

Enterprise tools often include SSO/SAML, MFA, encryption, RBAC, and audit logs. SOC 2 and ISO 27001 compliance is common among commercial vendors.

6. How do I integrate monitoring into CI/CD pipelines?

Most tools offer Python SDKs, REST APIs, and webhooks to feed drift alerts, metrics, and logs directly into CI/CD or MLOps pipelines for automated model management.
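As one possible shape for such an integration, a pipeline step can read a drift report and fail the job when any feature exceeds a threshold. The sketch below assumes a hypothetical JSON report of the form `{"feature_name": drift_score}`. Real tools each have their own report formats and SDK calls, so the parsing would need to be adapted; the function name `drift_gate` and the 0.25 threshold are also assumptions.

```python
import json

def drift_gate(report_json, threshold=0.25):
    """Return (exit_code, offending_features) for a drift report.

    The report format is hypothetical: {"feature_name": drift_score, ...}.
    """
    scores = json.loads(report_json)
    offenders = sorted(name for name, score in scores.items() if score > threshold)
    # A non-zero exit code is what makes most CI systems mark the step as failed.
    return (1 if offenders else 0), offenders

report = json.dumps({"income": 0.31, "age": 0.02, "tenure": 0.08})
exit_code, offenders = drift_gate(report)
print(exit_code, offenders)  # 1 ['income']
```

In a real pipeline the script would end with `sys.exit(exit_code)`, and a failing gate could block promotion of the current model or trigger a retraining job.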

7. Can these tools help with model explainability?

Yes, many platforms provide visualizations and explainability dashboards to analyze feature importance, bias, and fairness alongside performance metrics.

8. What is the pricing model for these platforms?

Pricing varies: some offer subscription-based plans, usage-based pricing, or enterprise licenses. Open-source tools may incur only infrastructure and engineering costs.

9. How scalable are these tools?

Enterprise-grade tools scale to monitor hundreds of models across multiple teams. Open-source tools can be scaled but often require additional engineering support.

10. Are there alternatives if I don’t need full monitoring?

For small deployments, lightweight logging, simple performance metrics, or manual retraining checks may suffice without full-featured monitoring platforms.

Conclusion

Model Monitoring & Drift Detection Tools are essential for maintaining the reliability, accuracy, and fairness of ML systems. Choosing the right solution depends on team size, deployment complexity, compliance requirements, and budget. Open-source tools provide flexibility and low-cost options for developers and SMBs, while enterprise-grade platforms deliver robust dashboards, automated alerts, and compliance features for high-risk environments. Real-time detection, multi-model support, and integrations with MLOps pipelines are critical evaluation points. For any organization deploying ML at scale, the next steps are simple: shortlist