Introduction

Stream processing frameworks are specialized software architectures designed to ingest, process, and analyze continuous flows of data in real time. Unlike traditional batch processing, which collects data over a period and processes it all at once, stream processing acts on data as it arrives, typically within milliseconds or seconds. This capability is essential for modern businesses that require immediate insights to drive automated decisions, monitor live systems, or provide responsive user experiences.

In the current digital landscape, the volume of data generated by IoT devices, social media feeds, financial transactions, and server logs has surpassed the limits of periodic processing. Organizations now treat data as a continuous river rather than a static lake. This shift allows for instantaneous reactions to shifting market conditions or emerging security threats. By utilizing these frameworks, developers can build applications that calculate aggregates, detect patterns, and trigger alerts on “data in motion” rather than waiting for “data at rest.”

Real-world use cases:

- Electronic Fraud Detection: Analyzing credit card swipes against historical user behavior to block fraudulent charges before the transaction is finalized.

- Predictive Maintenance: Monitoring industrial sensors on manufacturing lines to identify mechanical vibrations that signal a pending failure.

- Dynamic Pricing: Automatically adjusting ride-share or e-commerce prices based on real-time supply and demand fluctuations.

- Network Observability: Scanning gigabytes of log data per second to identify DDoS attacks or system bottlenecks the moment they occur.

- Personalized Content Delivery: Updating social media algorithms or news feeds based on a user’s immediate interaction with current content.

Buyer evaluation criteria:

- Latency: The delay between data arrival and the completion of its processing.

- Throughput: The maximum volume of events the framework can handle per second under heavy load.

- State Management: The ability to remember and update information over time (e.g., keeping a running total).

- Fault Tolerance: How the system recovers from hardware failures without losing data.

- Programming Model: Support for high-level languages like SQL, Python, Java, or Scala.

- Delivery Guarantees: Whether the system ensures data is processed “at-most-once,” “at-least-once,” or “exactly-once.”

- Scalability: The ease of adding more compute resources to handle increasing data loads.

- Ecosystem Integration: Compatibility with messaging queues like Kafka and databases like NoSQL or traditional SQL.

Who these frameworks are for:

- Best for: Data engineers, software architects, and DevOps professionals in sectors like fintech, telecommunications, and e-commerce who need to build low-latency, high-throughput data pipelines.

- Not ideal for: Organizations with limited technical staff or those dealing with simple, static reports where periodic batch updates are sufficient and cost-effective.

Key Trends in Stream Processing Frameworks

- Convergence of Batch and Stream: More frameworks are moving toward a unified model where the same code can handle both historical data and live streams, simplifying the development lifecycle.

- SQL-First Development: To democratize data access, frameworks are prioritizing SQL interfaces, allowing analysts—not just specialized engineers—to build streaming pipelines.

- Serverless Streaming: Cloud providers are increasingly offering serverless processing where users pay only for the data processed, removing the need to manage complex clusters.

- Stateful Functions: The rise of frameworks that allow developers to build complex, stateful applications that behave like traditional microservices but operate on streaming data.

- AI and Machine Learning Integration: Native support for embedding ML models directly into the stream, allowing for real-time inference and scoring of every incoming event.

- Edge Processing: Pushing stream processing capabilities closer to the data source (like IoT gateways) to reduce bandwidth costs and latency.

- Python Ecosystem Growth: While Java and Scala dominated the past, new frameworks are focusing on Python to cater to the massive data science and AI community.

- Low-Code/No-Code Interfaces: The emergence of visual drag-and-drop builders for stream processing, reducing the barrier to entry for business users.

How We Selected These Tools (Methodology)

To determine the top 10 stream processing frameworks, we applied a rigorous evaluation framework:

- Market Adoption & Mindshare: We prioritized frameworks with a high number of deployments in production environments across various industries.

- Technical Robustness: We analyzed the core architecture, focusing on fault tolerance, horizontal scaling, and delivery guarantees.

- Community and Support: We looked for active open-source communities or reliable commercial support systems that ensure long-term viability.

- Developer Experience: We assessed the quality of documentation, the variety of supported languages, and the availability of testing tools.

- Performance Benchmarks: We evaluated the ability of each framework to maintain low latency even during massive data spikes.

- Integration Density: We favored tools that offer a wide array of pre-built connectors for popular data sources and sinks.

- Operational Complexity: We considered the effort required to deploy, monitor, and maintain the framework in a production setting.

- Security Architecture: We examined built-in features for data encryption, role-based access control, and identity management.

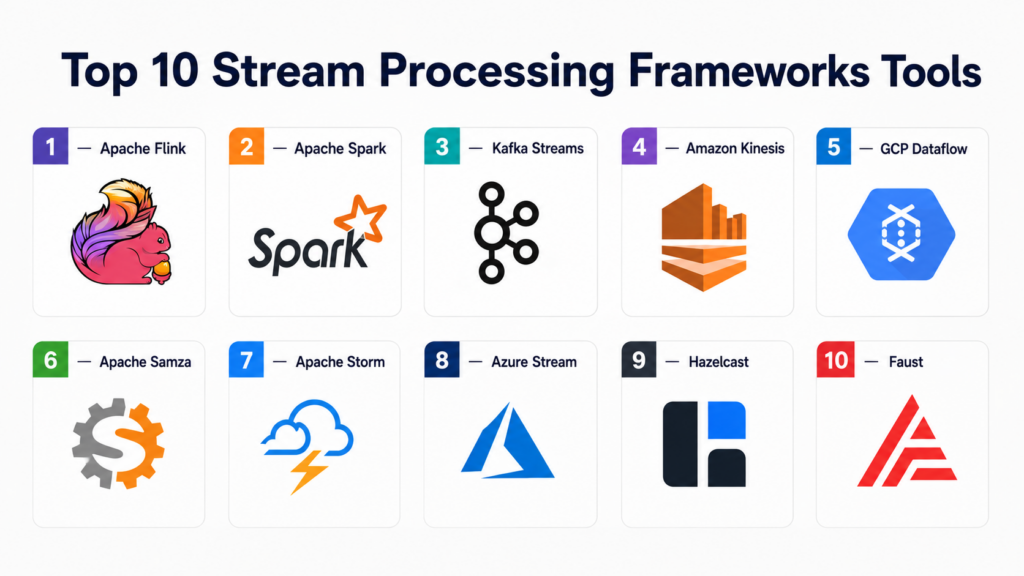

Top 10 Stream Processing Frameworks

#1 — Apache Flink

Short description:

Apache Flink is a powerful, open-source framework designed for stateful computations over both unbounded and bounded data streams. It is widely considered the gold standard for high-performance stream processing due to its “true” streaming architecture. Unlike micro-batch systems, Flink processes events one by one as they arrive, providing sub-second latency and robust exactly-once semantics. It is ideal for large-scale enterprises building complex event-driven applications.

Key Features

- Stateful Processing: Maintains application state across large-scale distributed clusters with high reliability.

- Low Latency: Offers true record-at-a-time processing, minimizing the delay between event ingestion and output.

- Exactly-Once Guarantees: Ensures that even in the event of a system failure, every record is processed exactly one time.

- Flink SQL: Allows users to write streaming queries using standard SQL, making it accessible to non-developers.

- Event-Time Processing: Handles data based on when the event actually happened, not when it arrived at the system.

- Savepoints: Allows for taking a snapshot of the entire application state for upgrades or migrations without losing data.

- Flexible Windows: Supports tumbling, sliding, and session windows for complex temporal aggregations.
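To make the windowing and event-time features above more concrete, here is a minimal plain-Python sketch of a tumbling-window count grouped by event time. It is not Flink API code; the function name `tumbling_window_counts`, the `(event_time_ms, key)` tuple shape, and the 10-second window size are assumptions chosen purely for illustration.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_ms):
    """Count events per (window_start, key) using each event's own timestamp.

    `events` is an iterable of (event_time_ms, key) tuples. This shape is an
    assumption made for the sketch, not a Flink data type.
    """
    counts = defaultdict(int)
    for event_time_ms, key in events:
        # Align the timestamp down to the start of its fixed-size window.
        window_start = event_time_ms - (event_time_ms % window_ms)
        counts[(window_start, key)] += 1
    return dict(counts)


# Events arrive out of order, but are grouped by when they happened.
events = [(1_000, "card-A"), (12_000, "card-A"), (3_000, "card-A"), (4_000, "card-B")]
result = tumbling_window_counts(events, window_ms=10_000)
```

Because the grouping depends only on the timestamp carried by each event, the event at 3,000 ms lands in the first window even though it arrived after the event at 12,000 ms. A real engine would additionally decide when each window is complete and keep the counts in fault-tolerant state.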

Pros

- Exceptional performance for high-volume, low-latency requirements.

- The most sophisticated state management and recovery system in the industry.

- Unified framework for both stream and batch processing.

Cons

- High operational complexity and steep learning curve for developers.

- Requires significant memory resources to maintain large application states.

Platforms / Deployment

- Linux / macOS / Windows (via Docker/Kubernetes)

- Cloud / Self-hosted / Hybrid

Security & Compliance

- Kerberos authentication, SSL/TLS encryption, RBAC.

- FIPS 140-2 (via commercial providers), GDPR (through data handling practices).

Integrations & Ecosystem

Flink integrates seamlessly with the broader big data ecosystem, acting as a central processing hub.

- Apache Kafka, Amazon Kinesis, Google Pub/Sub.

- Elasticsearch, Cassandra, JDBC-compatible databases.

- Hadoop HDFS, Amazon S3, Azure Data Lake.

Support & Community

Very strong open-source community with extensive documentation. Commercial support is available through companies like Ververica and major cloud providers.

#2 — Apache Spark (Structured Streaming)

Short description:

Apache Spark is a unified analytics engine that includes “Structured Streaming,” a high-level API built on the Spark SQL engine. It allows developers to express streaming computations similarly to batch computations. It utilizes a micro-batching model, which provides high throughput and simplifies the development process for teams already familiar with the Spark ecosystem. It is best for organizations needing a unified platform for ETL, machine learning, and streaming.

Key Features

- Unified API: Uses the same DataFrames and Datasets API for both batch and streaming code.

- High Throughput: Optimized for processing massive volumes of data in small intervals.

- ACID Transactions: Integration with Delta Lake ensures reliable data updates and consistent reads.

- Continuous Processing Mode: An experimental mode that targets end-to-end latencies of around 1 ms by bypassing micro-batching, with at-least-once rather than exactly-once guarantees.

- Broad Language Support: Native support for Java, Scala, Python, and R.

- Dynamic Resource Allocation: Scales the number of executors up or down based on the workload.

Pros

- Easiest transition for teams already using Apache Spark for batch processing.

- Extremely high throughput for massive data ingestion tasks.

- Massive library of machine learning and graph processing tools (MLlib, GraphX).

Cons

- Native micro-batching architecture introduces higher latency than Flink (typically 100ms+).

- State management is less flexible for complex event-driven logic.

Platforms / Deployment

- Linux / Windows / macOS

- Cloud (Databricks, AWS EMR, Google Dataproc) / Self-hosted

Security & Compliance

- RBAC, Kerberos, SSL/TLS, fine-grained access control through Unity Catalog.

- SOC 2, ISO 27001 (via managed cloud platforms).

Integrations & Ecosystem

Spark features an unparalleled ecosystem with thousands of third-party libraries and connectors.

- Apache Kafka, Azure Event Hubs.

- Snowflake, MongoDB, Redis.

- TensorFlow, PyTorch (for integrated AI pipelines).

Support & Community

One of the largest open-source communities in history. Commercial support is led by Databricks and every major cloud vendor.

#3 — Apache Kafka Streams

Short description:

Kafka Streams is a client-side library for building applications and microservices where the input and output data are stored in Kafka clusters. Unlike other frameworks on this list, it does not require a separate processing cluster; it runs as a standard Java application. It is the preferred choice for developers building lightweight, scalable microservices that interact deeply with Kafka.

Key Features

- No Cluster Required: Runs as a simple library within your application, reducing infrastructure overhead.

- Exactly-Once Processing: Fully integrated with Kafka’s transactional API to ensure data integrity.

- Stateful and Stateless Operations: Supports joins, aggregations, and windowing out of the box.

- Interactive Queries: Allows your application to query its own internal state directly without an external database.

- High Scalability: Scales by simply launching more instances of your application.

Pros

- Minimal operational footprint compared to Flink or Spark.

- Tightest possible integration with Apache Kafka topics.

- Ideal for event-driven microservices architecture.

Cons

- Limited to the Kafka ecosystem; cannot ingest data directly from other sources.

- Restricted primarily to Java and Scala (though some Python ports exist).

Platforms / Deployment

- Windows / macOS / Linux (any platform with a supported JVM, since it is a Java library)

- Cloud / Self-hosted / Hybrid

Security & Compliance

- Kafka SASL/SSL, ACLs for topic access, encryption.

- Varies / Depends on Kafka cluster configuration.

Integrations & Ecosystem

Designed to be the “brains” of a Kafka-centric architecture.

- Apache Kafka (Primary source and sink).

- Confluent Schema Registry.

- ksqlDB (for SQL-based streaming on top of Kafka).

Support & Community

Robust community support within the Kafka ecosystem. Professional support is provided by Confluent.

#4 — Amazon Kinesis Data Analytics

Short description:

Amazon Kinesis Data Analytics, renamed Amazon Managed Service for Apache Flink in 2023, is a fully managed AWS service that allows you to process and analyze streaming data using SQL or Java. Most applications on the service run Apache Flink under the hood, while AWS abstracts away the management of the underlying infrastructure. It is designed for AWS users who want to build real-time applications without the burden of cluster administration.

Key Features

- Serverless Scaling: Automatically scales the compute resources to match the data throughput.

- SQL for Real-Time: Enables the creation of streaming applications using standard SQL queries.

- Flink Integration: Supports running full Apache Flink applications for complex requirements.

- Pay-as-you-go: Users pay only for the resources consumed by the running application.

- Integrated Monitoring: Built-in integration with Amazon CloudWatch for logging and alerts.

Pros

- Zero server management or cluster configuration.

- Fastest way to deploy a Flink-based application within the AWS ecosystem.

- Seamless security through AWS IAM.

Cons

- Vendor lock-in to the AWS platform.

- Can be more expensive for constant, high-volume workloads compared to self-hosted Flink.

Platforms / Deployment

- Web / API

- Cloud (AWS Managed Service)

Security & Compliance

- AWS IAM, KMS encryption, VPC isolation.

- SOC 2, ISO 27001, HIPAA, PCI-DSS.

Integrations & Ecosystem

Native integration with the entire AWS data stack.

- Amazon Kinesis Data Streams and Firehose.

- Amazon S3, Redshift, and DynamoDB.

- AWS Lambda.

Support & Community

Professional support through AWS support plans. Large user base but less community control over the underlying service.

#5 — Google Cloud Dataflow

Short description:

Google Cloud Dataflow is a fully managed, serverless service for executing Apache Beam pipelines on Google Cloud. It provides a unified model for both stream and batch processing and is famous for its automated resource management. It is best for GCP users who need a highly resilient, hands-off approach to massive data pipelines.

Key Features

- Apache Beam Foundation: Uses the Beam SDK, providing a powerful, portable programming model.

- Horizontal Autoscaling: Dynamically adds or removes worker nodes based on data volume.

- Liquid Sharding: Automatically rebalances work across all workers to eliminate bottlenecks.

- Exactly-Once Guarantees: Ensures data integrity through built-in deduplication and checkpointing.

- Streaming Engine: Offloads processing to a dedicated service backend for better performance and scaling.

Pros

- Industry-leading autoscaling and workload balancing.

- Portable code—pipelines can be moved to other Beam runners (like Flink) if needed.

- Exceptional integration with Google BigQuery and AI tools.

Cons

- Strictly tied to the Google Cloud Platform for execution.

- Apache Beam has a high conceptual overhead and complexity.

Platforms / Deployment

- Web / API

- Cloud (GCP Managed Service)

Security & Compliance

- GCP IAM, VPC Service Controls, Customer-Managed Encryption Keys (CMEK).

- SOC 2, ISO 27001, HIPAA, FedRAMP.

Integrations & Ecosystem

Optimized for the Google Cloud Big Data stack.

- Google Cloud Pub/Sub, BigQuery.

- Cloud Storage, Cloud Spanner.

- Vertex AI.

Support & Community

Enterprise support through Google Cloud. The Apache Beam community provides open-source guidance.

#6 — Apache Samza

Short description:

Apache Samza is a distributed stream processing framework that was originally developed at LinkedIn. It is designed to handle high-performance, stateful applications and is built to integrate tightly with Apache Kafka and Hadoop YARN. Samza focuses on reliability and “fault-tolerance at scale,” making it suitable for large enterprises with established Hadoop infrastructures.

Key Features

- Stateful Processing: Uses a local key-value store for efficient state management.

- Isolation: Runs as a YARN application, ensuring that different stream tasks do not interfere with each other.

- Scalability: Can handle thousands of partitions and TBs of state across a large cluster.

- Exactly-Once Processing: High-level API supports reliable data delivery and state consistency.

- Unified API: Support for both batch and streaming workloads through a single programming model.

Pros

- Extremely reliable for massive, multi-tenant environments.

- Proven at scale by companies like LinkedIn and TripAdvisor.

- Excellent handling of large-scale stateful operations.

Cons

- Heavy dependency on the Hadoop/YARN ecosystem.

- Smaller community and slower innovation compared to Flink or Spark.

Platforms / Deployment

- Linux

- Self-hosted / Hybrid (On Hadoop clusters)

Security & Compliance

- Kerberos, SSL/TLS, YARN-based container security.

- Not publicly stated (Individual enterprise audit).

Integrations & Ecosystem

Strong focus on the LinkedIn-style data stack.

- Apache Kafka, Hadoop HDFS, Azure Event Hubs.

- RocksDB (for local state storage).

Support & Community

Maintained as an Apache project with a dedicated user base in the LinkedIn ecosystem. Commercial support is limited.

#7 — Apache Storm

Short description:

Apache Storm is one of the original distributed real-time computation systems. It is famous for its “nimbus” and “supervisor” architecture and its ability to process data with extremely low latency. While it has been largely superseded by Flink and Spark in modern deployments, it remains a reliable choice for simple, high-speed filtering and transformation tasks.

Key Features

- Micro-Latency: Offers very low latency processing for individual tuples.

- Guaranteed Data Processing: Ensures that every message is processed at least once.

- Polyglot: Allows developers to write topologies in any language that can read from and write to standard I/O.

- High Reliability: Automatically restarts failed workers and reassigns tasks.

- Trident API: A higher-level abstraction that provides exactly-once semantics and stateful processing.

Pros

- Extremely fast for basic data transformations.

- Flexible language support (Clojure, Java, Python, Ruby, etc.).

- Battle-tested over a decade in production environments.

Cons

- Difficult to achieve exactly-once processing without the complex Trident API.

- Operational overhead of managing the Nimbus/Supervisor nodes is high.

Platforms / Deployment

- Linux / macOS / Windows

- Self-hosted / Hybrid

Security & Compliance

- Kerberos, SSL/TLS.

- Not publicly stated.

Integrations & Ecosystem

Legacy integration with the Hadoop ecosystem.

- Apache Kafka, RabbitMQ, Kinesis.

- HDFS, Redis, MongoDB.

Support & Community

A mature Apache project. The community is still active but focus has shifted toward newer frameworks.

#8 — Azure Stream Analytics

Short description:

Azure Stream Analytics is a fully managed, real-time analytics and complex event-processing engine from Microsoft. It is designed to analyze and process large volumes of fast-streaming data from multiple sources simultaneously. It uses a SQL-based language, making it highly accessible for business analysts and developers already within the Azure cloud.

Key Features

- SQL-Based Syntax: Allows users to build complex streaming logic using familiar SQL.

- Sub-Second Latency: Optimized for fast event processing and immediate insights.

- Serverless Scaling: Automatically adjusts processing units to match the data throughput.

- Edge Capability: Can run the same streaming logic on IoT Edge devices.

- Built-in ML: Simple functions to call Azure Machine Learning models within the SQL query.

Pros

- Easiest entry point for Microsoft-centric organizations.

- No infrastructure management—purely PaaS.

- Excellent visualization through native Power BI integration.

Cons

- Limited to the Azure ecosystem.

- Less flexible for extremely complex custom logic compared to Flink.

Platforms / Deployment

- Web / API

- Cloud (Azure Managed Service) / Edge

Security & Compliance

- Microsoft Entra ID (formerly Azure Active Directory), VNET isolation, encryption.

- ISO 27001, SOC 2, HIPAA, GDPR.

Integrations & Ecosystem

Native connections to the Microsoft Intelligent Data Platform.

- Azure Event Hubs and IoT Hub.

- Azure Blob Storage, SQL Database, Cosmos DB.

- Power BI.

Support & Community

Microsoft Enterprise Support. The community consists mostly of Azure enterprise users.

#9 — Hazelcast

Short description:

Hazelcast is a unified real-time stream processing platform that combines an in-memory data store with a high-performance streaming engine. This unique architecture allows applications to query historical context stored in memory while processing live events. It is designed for ultra-low latency requirements like high-frequency trading and real-time inventory management.

Key Features

- In-Memory Architecture: Processes data directly in RAM for the fastest possible speeds.

- Unified Platform: Combines stream processing and in-memory storage in a single deployment.

- SQL Support: Supports querying both live streams and stored data using SQL.

- Exactly-Once Guarantees: Ensures data consistency even during node failures.

- Compact Footprint: Lightweight enough to run on edge devices or within microservices.

Pros

- Incredible speed due to the elimination of disk I/O.

- Simplified architecture by merging the database and the stream processor.

- Highly reliable distributed caching capabilities.

Cons

- Cost can be high due to heavy reliance on RAM.

- Smaller community for stream processing specifically compared to Spark or Flink.

Platforms / Deployment

- Linux / macOS / Windows (any platform with a supported JVM)

- Cloud (Hazelcast Viridian) / Self-hosted / Hybrid

Security & Compliance

- Mutual TLS, JAAS, encryption at rest, RBAC.

- SOC 2 (Managed Cloud).

Integrations & Ecosystem

Focused on high-speed data movement and enterprise connectivity.

- Apache Kafka, Pulsar, Kinesis.

- Oracle, MySQL, Postgres (CDC support).

- Snowflake, BigQuery.

Support & Community

Active open-source community. Hazelcast Inc. provides professional enterprise support.

#10 — Faust

Short description:

Faust is a library built on top of Python’s AsyncIO, originally developed by the team at Robinhood. It is designed to bring the power of Kafka Streams to the Python community. Faust allows developers to process streams of data using high-level Python patterns, making it the top choice for AI and data science teams working with real-time Kafka data.

Key Features

- AsyncIO Integration: Leverages Python’s asynchronous capabilities for high performance.

- Pythonic API: Uses standard Python classes and functions to define stream processors.

- No Master Node: Like Kafka Streams, it is a library that runs within your application.

- Stateful Operations: Support for tables (state) that can be queried from within the app.

- Scalable: Easy to scale across multiple CPU cores or separate containers.

Pros

- The best “native” experience for Python developers.

- Minimal infrastructure overhead—no separate cluster to manage.

- Great for integrating real-time data with Python-based AI models.

Cons

- Performance is lower than Java-based frameworks like Flink.

- Requires knowledge of asynchronous programming in Python.

Platforms / Deployment

- Linux / macOS / Windows (via Docker)

- Cloud / Self-hosted

Security & Compliance

- Depends on Kafka cluster security (SASL/SSL).

- Not publicly stated.

Integrations & Ecosystem

Strong focus on the Python and Kafka stack.

- Apache Kafka.

- Redis (for shared state).

- PyTorch, Scikit-learn (for real-time ML).

Support & Community

Growing community among Python developers. Community-driven development on GitHub.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| Apache Flink | Complex Stateful Apps | Linux, Win, Mac | Hybrid | Exactly-Once Processing | 4.8/5 |
| Apache Spark | Unified Data Pipelines | Linux, Win, Mac | Hybrid | Micro-batch Throughput | 4.7/5 |
| Kafka Streams | Java Microservices | Multi-platform | Embedded | No Cluster Required | 4.6/5 |
| Amazon Kinesis | AWS Ecosystem | Web/API | Managed | Serverless Flink | 4.4/5 |
| GCP Dataflow | GCP Managed Data | Web/API | Managed | Liquid Sharding | 4.5/5 |
| Apache Samza | Hadoop/YARN Users | Linux | Self-hosted | Multi-tenant Isolation | 4.2/5 |
| Apache Storm | Basic Low-Latency | Multi-platform | Self-hosted | Nimbus Architecture | 4.1/5 |
| Azure Stream | Microsoft Stack | Web/API | Managed | SQL for Streaming | 4.3/5 |
| Hazelcast | Ultra-Low Latency | Multi-platform | Hybrid | In-Memory Storage | 4.5/5 |
| Faust | Python/AI Teams | Linux, Mac | Embedded | AsyncIO Stream Library | 4.2/5 |

Evaluation & Scoring of Stream Processing Frameworks

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Apache Flink | 10 | 4 | 9 | 9 | 10 | 9 | 8 | 8.45 |
| Apache Spark | 9 | 7 | 10 | 10 | 9 | 10 | 9 | 9.05 |
| Kafka Streams | 8 | 8 | 7 | 9 | 8 | 8 | 10 | 8.25 |
| Amazon Kinesis | 8 | 9 | 9 | 10 | 8 | 9 | 7 | 8.45 |
| GCP Dataflow | 9 | 8 | 9 | 10 | 9 | 9 | 7 | 8.65 |
| Apache Samza | 8 | 4 | 7 | 8 | 8 | 6 | 6 | 6.75 |
| Apache Storm | 7 | 5 | 7 | 7 | 8 | 6 | 7 | 6.70 |
| Azure Stream | 8 | 9 | 9 | 10 | 8 | 9 | 8 | 8.60 |
| Hazelcast | 9 | 6 | 8 | 9 | 10 | 8 | 7 | 8.10 |
| Faust | 7 | 7 | 7 | 7 | 7 | 5 | 9 | 7.10 |

Interpretation:

- 9.0+: Exceptional versatility, easy adoption, and broad ecosystem support (e.g., Spark).

- 8.0 – 8.9: Specialized leaders with extreme performance or deep cloud integration (e.g., Flink, Dataflow).

- 7.0 – 7.9: Target-specific frameworks (e.g., Python teams).

- Below 7.0: Legacy or niche tools that may require specific infrastructure.
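The weighted totals follow directly from the column weights in the scoring table. As a quick check, the sketch below recomputes two rows (Apache Flink and Apache Spark) from their category scores. The dictionary keys and the `weighted_total` helper are names invented for this example; the weights and scores are taken from the table.

```python
# Category weights in percent, taken from the scoring table header.
WEIGHTS_PCT = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}


def weighted_total(scores):
    """Return the weighted average of 0-10 category scores."""
    # Integer percentages keep the arithmetic exact until the final division.
    return sum(WEIGHTS_PCT[name] * score for name, score in scores.items()) / 100


flink = {"core": 10, "ease": 4, "integrations": 9, "security": 9,
         "performance": 10, "support": 9, "value": 8}
spark = {"core": 9, "ease": 7, "integrations": 10, "security": 10,
         "performance": 9, "support": 10, "value": 9}

print(weighted_total(flink))  # 8.45
print(weighted_total(spark))  # 9.05
```

Changing the weights to match your own priorities, for example raising "ease" for a small team, is a simple way to re-rank the tools for your situation.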

Which Stream Processing Framework Is Right for You?

Solo / Freelancer

If you are working alone, you likely want to avoid managing server clusters. Kafka Streams or Faust are excellent because they run as simple libraries within your code. If you are already in the cloud, Amazon Kinesis Data Analytics or Azure Stream Analytics allow you to start for pennies without configuring hardware.

SMB

Small businesses with limited engineering resources should prioritize ease of use and managed services. Google Cloud Dataflow or Azure Stream Analytics are ideal because they handle all scaling and maintenance automatically, allowing your team to focus on the business logic rather than the plumbing.

Mid-Market

For companies with growing data teams and diverse processing needs (batch, stream, and ML), Apache Spark is the most practical choice. The massive community and ease of finding Spark-certified engineers make it the safest bet for scaling an organization without hitting a talent wall.

Enterprise

Large-scale enterprises with mission-critical, low-latency requirements should invest in Apache Flink. While it requires more specialized knowledge, the “exactly-once” guarantees and sophisticated state management are necessary for handling global-scale financial or operational data safely.

Budget vs Premium

- Budget: Open-source Apache Spark or Flink deployed on self-managed Kubernetes clusters offers the lowest software cost but higher operational labor costs.

- Premium: Managed offerings like Databricks or Confluent Cloud provide a “white-glove” experience with higher costs but significantly lower management overhead.

Feature Depth vs Ease of Use

If you need deep, fine-grained control over every aspect of the streaming lifecycle, Apache Flink is the winner. If you need to get a streaming dashboard up and running in a few hours using SQL, Azure Stream Analytics or Amazon Kinesis are much better choices.

Integrations & Scalability

Apache Spark has the widest integration ecosystem, supporting almost every modern database and AI library. For pure vertical and horizontal scalability in a cloud-native environment, Google Cloud Dataflow is unmatched due to its liquid sharding and automated resource management.

Security & Compliance Needs

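For organizations with high regulatory requirements, managed cloud services like GCP Dataflow or Amazon Kinesis are often the most efficient path: the providers maintain attestations such as SOC 2, HIPAA, and PCI-DSS for the underlying service, although you remain responsible for configuring and operating your own pipelines compliantly.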

Frequently Asked Questions (FAQs)

1. What is the difference between “At-Least-Once” and “Exactly-Once” processing?

“At-Least-Once” means that if a failure occurs, the system will re-process data, which might lead to duplicate records. “Exactly-Once” uses sophisticated checkpointing to ensure that every piece of data affects the final outcome only one time, even if a server crashes and restarts, which is critical for financial transactions.
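One common way to get an exactly-once effect on top of at-least-once delivery is to make the consumer idempotent by remembering which event IDs it has already applied. The sketch below illustrates only that idea in plain Python. It is not how any particular framework implements checkpointing, and the `IdempotentLedger` class and the `event_id` values are hypothetical.

```python
class IdempotentLedger:
    """Applies each payment at most once, even if the same event is redelivered."""

    def __init__(self):
        self.balance = 0
        # In a real system this set would live in durable, fault-tolerant state.
        self._seen_ids = set()

    def apply(self, event_id, amount):
        if event_id in self._seen_ids:
            return False  # duplicate from a retry, so ignore it
        self._seen_ids.add(event_id)
        self.balance += amount
        return True


# At-least-once delivery: event "tx-2" is redelivered after a simulated failure.
deliveries = [("tx-1", 50), ("tx-2", 20), ("tx-2", 20), ("tx-3", 5)]

# Naive processing counts the duplicate and overstates the balance.
naive_balance = sum(amount for _, amount in deliveries)

ledger = IdempotentLedger()
for event_id, amount in deliveries:
    ledger.apply(event_id, amount)
```

Here the naive sum is 95 while the idempotent ledger ends at 75, which is the difference a duplicate charge would make in a financial pipeline.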

2. Can I use a stream processing framework for historical data?

Yes. Many modern frameworks, such as Apache Flink and Spark, are “unified,” meaning you can run largely the same code on a historical file (batch) as you do on a live message queue (stream). This is closely related to the “Kappa Architecture,” in which historical data is reprocessed by replaying it through the same streaming pipeline.

3. Do I always need a messaging system like Kafka to use these frameworks?

Generally, yes. Stream processing frameworks need a reliable “buffer” to hold data before it is processed. Systems like Apache Kafka, Amazon Kinesis, or Google Pub/Sub provide this buffer, ensuring that data is not lost if the processing framework needs to restart.

4. Is stream processing more expensive than batch processing?

It can be. Stream processing requires infrastructure to be “always-on” to listen for new data, whereas batch processing can spin up resources only when needed. However, the business value of getting insights in seconds often far outweighs the increased infrastructure cost.

5. How much programming knowledge is required to use these tools?

It varies. Frameworks like Flink and Spark require strong Java, Scala, or Python skills. However, managed services from AWS, Azure, and Google allow you to perform complex stream processing using only standard SQL, making it accessible to data analysts.

6. What is “Event-Time” vs “Processing-Time”?

“Event-Time” is the time when the data was created (e.g., when a user clicked a button). “Processing-Time” is the wall-clock time at which the framework actually processes the event. Advanced frameworks like Flink prioritize Event-Time to ensure accuracy even if there are delays in the network.

7. Can these frameworks handle “Out-of-Order” data?

Yes. Modern frameworks use a concept called “Watermarks” to handle data that arrives late or out of sequence. They can wait for a specific period before finalizing a window of data to ensure that late events are included in the final calculation.
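The watermark idea can be sketched in a few lines of plain Python. This is a simplified illustration, not any framework's implementation: the watermark here simply trails the largest event time seen so far by a fixed amount, and the names `windowed_sums` and `max_out_of_order_ms` are assumptions made for the example.

```python
from collections import defaultdict


def windowed_sums(events, window_ms, max_out_of_order_ms):
    """Sum values per tumbling event-time window, finalizing on a watermark.

    The watermark trails the largest event time seen by `max_out_of_order_ms`.
    A window is emitted once the watermark reaches its end; events for an
    already-emitted window are counted as dropped.
    """
    open_windows = defaultdict(int)
    emitted = {}
    dropped = 0
    max_event_time = 0

    for event_time, value in events:
        window_start = event_time - (event_time % window_ms)
        if window_start in emitted:
            dropped += 1  # too late: its window was already finalized
            continue
        open_windows[window_start] += value

        max_event_time = max(max_event_time, event_time)
        watermark = max_event_time - max_out_of_order_ms
        # sorted() returns a list, so popping inside the loop is safe.
        for start in sorted(open_windows):
            if start + window_ms <= watermark:
                emitted[start] = open_windows.pop(start)

    return emitted, dict(open_windows), dropped


# (event_time_ms, value) pairs in arrival order; 8_000 and 7_000 arrive late.
events = [(1_000, 1), (9_000, 1), (11_000, 1), (8_000, 1), (12_500, 1), (7_000, 1)]
emitted, still_open, dropped = windowed_sums(events, window_ms=10_000, max_out_of_order_ms=2_000)
```

With a 2-second tolerance, the late event at 8,000 ms is still included in the first window, while the event at 7,000 ms arrives after that window has been finalized and is dropped. A larger tolerance admits more late data at the cost of delaying results.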

8. What is the difference between micro-batching and true streaming?

Micro-batching (like Spark) collects events for a short period (e.g., 500ms) and processes them together. True streaming (like Flink) processes each event individually as soon as it arrives. True streaming offers lower latency but is often more complex to manage.
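A rough way to see the latency cost of micro-batching is to compute how long each event waits for the next batch boundary. The sketch below models only that waiting time and ignores actual processing time; `micro_batch_delays` and the sample arrival times are hypothetical.

```python
def micro_batch_delays(arrival_times_ms, batch_interval_ms):
    """Return how long each event waits until the next batch boundary fires."""
    delays = []
    for arrival in arrival_times_ms:
        # The batch containing this event is processed at the next boundary.
        # An event landing exactly on a boundary waits for the following one.
        next_boundary = (arrival // batch_interval_ms + 1) * batch_interval_ms
        delays.append(next_boundary - arrival)
    return delays


arrivals = [20, 130, 490, 510]
delays = micro_batch_delays(arrivals, batch_interval_ms=500)
print(delays)  # [480, 370, 10, 490]
```

In a record-at-a-time engine this waiting component is zero and only the processing time remains, which is why the batch interval sets a floor on micro-batch latency.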

9. Can I run machine learning models inside a stream?

Absolutely. Most frameworks allow you to load a pre-trained model (like a TensorFlow or Scikit-learn model) and use it to “score” every event in the stream, such as predicting if a login attempt is likely to be a hack.
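As an illustration of per-event scoring, the sketch below passes each event through a model callable and flags those above a threshold. The `toy_login_model` function is a hand-written stand-in for a pre-trained model, and the field names and the 0.8 threshold are assumptions; in practice you would load a real model and call its prediction method instead.

```python
def score_stream(events, model, threshold):
    """Yield (event, score, flagged) for each event as it arrives."""
    for event in events:
        score = model(event)
        yield event, score, score >= threshold


def toy_login_model(event):
    """Stand-in for a pre-trained model: a simple rule, not a real classifier."""
    score = 0.0
    if event["failed_attempts"] >= 3:
        score += 0.6
    if event["new_device"]:
        score += 0.3
    return score


logins = [
    {"user": "ana", "failed_attempts": 0, "new_device": False},
    {"user": "bob", "failed_attempts": 4, "new_device": True},
]
flagged_users = [
    event["user"]
    for event, _, flagged in score_stream(logins, toy_login_model, threshold=0.8)
    if flagged
]
```

Because `score_stream` is a generator, each event is scored as it is consumed rather than after the whole input has been collected, which mirrors how a streaming operator applies a model.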

10. How do I choose between self-hosting and a managed service?

Choose self-hosting if you have a highly skilled DevOps team and want maximum control over costs and configuration. Choose a managed service (like Dataflow or Kinesis) if you want to get to market quickly and don’t want to spend time managing server patches and cluster scaling.

Conclusion

Selecting a stream processing framework is a decision that impacts every layer of an organization’s data strategy. While Apache Flink remains the powerhouse for complex, stateful requirements, the rise of unified platforms like Apache Spark and serverless offerings from cloud providers has made real-time analytics more accessible than ever. The focus has shifted from “can we process this” to “how efficiently can we gain value from this.”

The “best” framework depends entirely on your existing infrastructure and the technical proficiency of your team. Organizations already in the AWS or Azure clouds will find immense value in native managed services, while data-heavy engineering teams may prefer the flexibility and power of open-source Flink or Kafka Streams.

As a next step, identify a single use case, such as real-time log monitoring, and run a small pilot with one of these frameworks to see how it fits into your operational culture.