Introduction

A Lakehouse platform is a modern data architecture that combines the cost-effective storage and flexibility of a data lake with the high-performance querying, ACID transactions, and robust governance of a traditional data warehouse. In the current data landscape, organizations no longer want to maintain two separate, siloed systems for business intelligence and machine learning. The Lakehouse solves this by implementing a metadata layer on top of open-source file formats, allowing structured, semi-structured, and unstructured data to coexist in a single, high-performance repository.

The shift toward this architecture is driven by the need for real-time insights and the explosion of generative artificial intelligence. Managing a data lake without the “house” component is like trying to find a specific grain of sand in a desert during a windstorm: painful and largely unproductive. By bringing data warehousing capabilities directly to the storage layer, businesses can eliminate complex ETL (Extract, Transform, Load) pipelines, reduce data redundancy, and provide a “single source of truth” for both analysts and data scientists.
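To make the "metadata layer" idea concrete, here is a minimal toy sketch of an append-only commit log that tracks which data files belong to a table. It is only an illustration of the general technique: the names `ToyTableLog` and `Commit` are hypothetical, the file names are made up, and real formats such as Delta Lake, Iceberg, and Hudi use far richer on-disk metadata than this.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Commit:
    """One atomic table change: files added and files logically removed."""
    version: int
    added: frozenset
    removed: frozenset


@dataclass
class ToyTableLog:
    """Append-only commit log acting as the table's metadata layer (hypothetical)."""
    commits: list = field(default_factory=list)

    def commit(self, expected_version, added=(), removed=()):
        # Optimistic concurrency: reject a writer whose view of the table is stale.
        if expected_version != len(self.commits):
            raise RuntimeError("conflict: table changed since version %d" % expected_version)
        new_version = len(self.commits) + 1
        self.commits.append(Commit(new_version, frozenset(added), frozenset(removed)))
        return new_version

    def snapshot(self, version=None):
        """Replay the log to get the set of live data files at a version."""
        version = len(self.commits) if version is None else version
        live = set()
        for c in self.commits[:version]:
            live -= c.removed
            live |= c.added
        return live


log = ToyTableLog()
v1 = log.commit(0, added=["part-000.parquet", "part-001.parquet"])
# Rewrite one file: readers see the swap all at once, never a half-applied state.
v2 = log.commit(v1, added=["part-002.parquet"], removed=["part-000.parquet"])
current_files = sorted(log.snapshot())
files_at_v1 = sorted(log.snapshot(version=1))
```

Because readers only ever see files listed by a committed version, a failed or concurrent write never exposes partial data, and older versions stay queryable by replaying fewer commits.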

Real-world use cases:

- Predictive Maintenance: Analyzing massive streams of IoT sensor data (semi-structured) alongside maintenance logs (structured) to predict equipment failure.

- Customer 360: Combining clickstream data, social media sentiment, and purchase history to create hyper-personalized marketing campaigns.

- Fraud Detection: Running complex machine learning models on transaction streams in real-time to flag suspicious activity before it settles.

- Regulatory Reporting: Ensuring high-speed compliance reporting using the ACID transaction capabilities of the Lakehouse.

- GenAI Development: Storing and indexing massive text corpora for Large Language Model (LLM) training and fine-tuning.

Evaluation criteria for buyers:

- Open Table Format Support: Compatibility with Delta Lake, Apache Iceberg, or Apache Hudi.

- Compute-Storage Decoupling: The ability to scale processing power independently of storage costs.

- Governance & Security: Centralized access control, data lineage, and auditing capabilities.

- Performance Optimization: Features like indexing, caching, and query acceleration.

- SQL Support: The maturity of the SQL engine for traditional BI workloads.

- Machine Learning Integration: Native support for notebooks, model tracking, and Python/R.

- Data Ingestion: Ease of “Zero-ETL” and streaming data ingestion.

- Concurrency: The ability to handle hundreds of simultaneous users without performance lag.

- Vendor Lock-in: The degree to which data is trapped in proprietary formats.

- Total Cost of Ownership: The balance between performance gains and consumption-based pricing.

Who should consider a Lakehouse:

- Best for: Large enterprises and data-driven startups that require a unified platform for both business intelligence and advanced AI/ML workloads.

- Not ideal for: Organizations with very small datasets that fit easily into a single database, or businesses that only require simple, infrequent reporting without any need for unstructured data analysis.

Key Trends in Lakehouse Platforms

- The Rise of Universal Interoperability: Platforms are moving toward supporting all major table formats (Iceberg, Hudi, Delta) simultaneously, reducing the risk of picking the “wrong” standard.

- Generative AI Integration: Modern platforms are embedding vector search and LLM orchestration directly into the data layer, allowing users to talk to their data in plain English.

- Serverless Dominance: There is a massive shift toward serverless compute models where users pay only for the exact seconds a query is running, removing the headache of cluster management.

- Governance at the Metadata Level: Centralized governance engines now manage permissions across different clouds and tools from a single pane of glass.

- Zero-ETL Pathways: Major cloud providers are creating direct links between operational databases and lakehouses, making the traditional, fragile ETL process obsolete.

- Streaming-First Architecture: Real-time data ingestion is becoming the default rather than an add-on, with sub-second latency for live dashboards.

- Edge Lakehouse Extensions: Capabilities are stretching to the edge, allowing local data processing before syncing refined insights back to the central hub.

- Automated Data Engineering: AI-driven “autopilots” are now handling partition tuning, indexing, and cost optimization without human intervention.

How We Selected These Tools (Methodology)

The selection of the top 10 Lakehouse platforms was determined through a rigorous evaluation of the current enterprise data market. The methodology focused on:

- Market Adoption: We prioritized platforms that are currently used by global industry leaders and have high community engagement.

- Table Format Maturity: We looked for platforms that offer native, high-performance implementations of open-source standards.

- Architectural Innovation: Priority was given to tools that have successfully decoupled compute and storage to maximize efficiency.

- End-to-End Capability: We selected platforms that offer a complete lifecycle, from ingestion and governance to BI and ML.

- Reliability Signals: We analyzed uptime records and the ability to handle petabyte-scale workloads.

- Security Posture: Evaluation included the maturity of fine-grained access controls and compliance certifications.

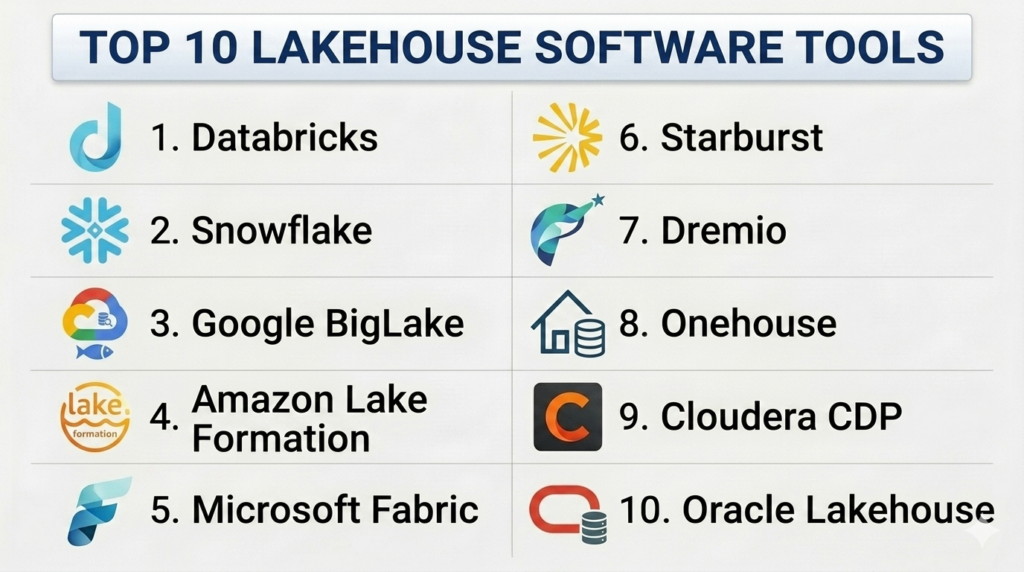

Top 10 Lakehouse Software Tools

#1 — Databricks

Short description: The pioneer of the Lakehouse category, Databricks offers a unified platform for data engineering, data science, and SQL analytics built on the Delta Lake format.

Key Features

- Delta Live Tables: Simplifies the creation of reliable, maintainable, and testable data processing pipelines.

- Unity Catalog: A unified governance layer for all data and AI assets across multiple clouds.

- Photon Engine: A vectorized query engine designed to provide high-performance SQL execution on the lake.

- Mosaic AI: Comprehensive tools for building, deploying, and monitoring generative AI and LLMs.

- Serverless SQL: Provides instant, elastic compute for BI workloads without cluster management.

- Lakeflow: Integrated solution for automated data ingestion and orchestration.

Pros

- Unrivaled performance for large-scale machine learning and complex data engineering.

- Deep integration with open-source Delta Lake ensures high data portability.

Cons

- The platform can be complex for small teams without dedicated data engineers.

- Costs can escalate quickly if serverless and scaling settings are not strictly monitored.

Platforms / Deployment

- AWS / Azure / Google Cloud

- Cloud / Hybrid

Security & Compliance

- SSO/SAML, MFA, RBAC, Attribute-Based Access Control (ABAC).

- SOC 2, ISO 27001, HIPAA, FedRAMP High.

Integrations & Ecosystem

Databricks has a massive ecosystem of partners and native connectors.

- Informatica / Fivetran

- Tableau / Power BI

- dbt / Terraform

- Apache Kafka

Support & Community

Extensive documentation, a massive global user community, and tiered professional support for enterprise customers.

#2 — Snowflake

Short description: Traditionally a data warehouse, Snowflake has evolved into a Lakehouse platform through its support for Apache Iceberg, Unistore, and advanced unstructured data capabilities.

Key Features

- Apache Iceberg Tables: Allows users to store data in open formats while maintaining Snowflake’s performance and governance.

- Snowpark: A developer framework that brings Python, Java, and Scala directly into the data platform.

- Cortex AI: Integrated LLM services that allow users to perform sentiment analysis, translation, and summarization using SQL.

- Dynamic Tables: Simplifies data transformation by automatically updating results as new data arrives.

- Unistore: Enables transactional and analytical workloads to run within a single table structure.

- Horizon: A unified security and governance suite for all data and AI assets.

Pros

- Incredible ease of use with a “zero-maintenance” architecture.

- Superior data sharing capabilities through the Snowflake Marketplace.

Cons

- Historically more expensive for high-volume data storage compared to raw object stores.

- Support for “open” table formats is still maturing compared to platforms that were built around them from the start.

Platforms / Deployment

- AWS / Azure / Google Cloud

- Cloud

Security & Compliance

- End-to-end encryption, MFA, SSO, Private Link.

- SOC 2, ISO 27001, HIPAA, PCI DSS, FedRAMP.

Integrations & Ecosystem

A vast network of integrations through the Snowflake Partner Connect program.

- Matillion / Airbyte

- Looker / Sigma Computing

- Alteryx / Dataiku

Support & Community

Excellent 24/7 technical support and a highly engaged community of “Data Heroes.”

#3 — Google Cloud BigLake

Short description: A storage engine that unifies BigQuery and data lakes, allowing users to query data across multiple clouds and formats with consistent governance.

Key Features

- Unified Security: Applies BigQuery-level row and column-level security to files in S3, Azure Data Lake, and GCS.

- Multi-Cloud Querying: Ability to query data where it lives without moving it into Google Cloud.

- Iceberg & Delta Support: Native performance optimizations for all major open table formats.

- Vertex AI Integration: Direct connection to Google’s advanced ML platform for model training.

- Search Indexes: BigQuery search indexes enable fast lookups across petabytes of log and trace data.

- Metastore Integration: Seamless connection with Hive Metastore for existing Hadoop workloads.

Pros

- Best-in-class multi-cloud governance and security.

- Deep integration with Google’s world-leading AI and search technologies.

Cons

- Optimized specifically for the Google Cloud ecosystem; less ideal for AWS-only shops.

- Can be confusing to navigate the overlap between BigQuery, BigLake, and Dataproc.

Platforms / Deployment

- Google Cloud / AWS / Azure

- Cloud / Hybrid

Security & Compliance

- VPC Service Controls, CMEK (Customer-Managed Encryption Keys).

- SOC 2, ISO 27001, HIPAA, GDPR.

Integrations & Ecosystem

Part of the broader Google Data Cloud.

- Looker / Looker Studio (formerly Data Studio)

- Dataprep by Trifacta

- Pub/Sub / Dataflow

Support & Community

Standard Google Cloud support tiers and a growing community of GCP data architects.

#4 — Amazon Lake Formation

Short description: A service that makes it easy to set up a secure data lake and implement a lakehouse architecture within the AWS ecosystem.

Key Features

- Centralized Permissions: One place to define access for Glue, Athena, and Redshift Spectrum.

- Governed Tables: Supports ACID transactions and automatic file compaction for S3 storage.

- Blueprint Ingestion: Pre-defined workflows for moving data from operational databases into the lake.

- Cell-Level Security: Fine-grained control over exactly which data points different users can see.

- Cross-Account Sharing: Securely share data across different AWS accounts without copying.

- Integration with Glue Data Quality: Automated monitoring and cleaning of incoming data streams.
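Cell-level security can be pictured as a row filter combined with a column allow-list. The sketch below is a plain-Python toy of that idea, not the Lake Formation API: `apply_policy`, the sample table, and the policy shape are all hypothetical and exist only for illustration.

```python
def apply_policy(rows, policy):
    """Return only the rows and columns a policy allows (toy enforcement)."""
    visible = []
    for row in rows:
        if policy["row_filter"](row):
            visible.append({col: row[col] for col in policy["columns"]})
    return visible


# Hypothetical table and policy, for illustration only.
transactions = [
    {"id": 1, "region": "EU", "amount": 120, "card_number": "4111-0001"},
    {"id": 2, "region": "US", "amount": 75, "card_number": "4111-0002"},
    {"id": 3, "region": "EU", "amount": 310, "card_number": "4111-0003"},
]

eu_analyst_policy = {
    "row_filter": lambda row: row["region"] == "EU",  # row-level rule
    "columns": ["id", "region", "amount"],            # column-level rule
}

eu_view = apply_policy(transactions, eu_analyst_policy)
```

Here the EU analyst sees two rows and never sees `card_number`. In a managed service the equivalent rule is declared once in the catalog and enforced by each query engine, rather than re-implemented in application code.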

Pros

- Deeply integrated with the AWS stack, making it the natural choice for AWS-centric teams.

- Very cost-effective when using S3 as the primary storage layer.

Cons

- Management of underlying services (Glue, Athena, S3) can be fragmented.

- Lacks the “all-in-one” cohesive UI found in Databricks or Snowflake.

Platforms / Deployment

- AWS

- Cloud

Security & Compliance

- IAM integration, KMS encryption, VPC endpoints.

- SOC 2, ISO 27001, HIPAA, FedRAMP High.

Integrations & Ecosystem

Native to the world’s largest cloud ecosystem.

- Amazon Redshift / Athena

- Amazon EMR / SageMaker

- QuickSight

Support & Community

Extensive AWS documentation and access to the global AWS partner network for implementation.

#5 — Microsoft Fabric

Short description: A unified analytics platform that brings together data engineering, data science, and BI in a single, SaaS-based lakehouse environment.

Key Features

- OneLake: A “OneDrive for data” that provides a single, unified logical lake for the entire organization.

- Direct Lake Mode: Allows Power BI to query data in the lakehouse directly without importing or duplicating it.

- Synapse Data Engineering: High-performance Spark pools for large-scale data processing.

- Data Factory Integration: Built-in orchestration for complex data movement and transformation.

- Shortcut Technology: Virtualizes data from other lakes (S3, GCS) into OneLake without movement.

- Copilot for Data: Generative AI assistance for writing SQL, Spark code, and creating reports.

Pros

- Superior integration with the Microsoft 365 and Power BI ecosystems.

- Simplifies the complex Azure Synapse/Data Factory landscape into one cohesive UI.

Cons

- A relatively new platform that is still rapidly evolving.

- Optimized for “all-in” Microsoft environments; less flexible for multi-cloud.

Platforms / Deployment

- Azure

- Cloud

Security & Compliance

- Microsoft Entra ID (formerly Azure AD), Purview integration.

- SOC 2, ISO 27001, HIPAA, FedRAMP.

Integrations & Ecosystem

Perfect for organizations using the Microsoft stack.

- Power BI / Excel

- Azure DevOps

- Microsoft Purview

Support & Community

Standard Microsoft enterprise support and an enormous community of Azure practitioners.

#6 — Starburst

Short description: Built on the open-source Trino engine, Starburst provides a high-performance data lakehouse that allows users to query data where it lives across any cloud.

Key Features

- Warp Speed: Automated indexing and caching designed to make data lake queries run at data warehouse speeds.

- Starburst Gravity: A centralized governance layer that manages security across all connected data sources.

- Stargate: Enables high-speed, cross-region and cross-cloud analytics while minimizing data egress.

- Iceberg-Centric: Deeply optimized for Apache Iceberg as the primary table format for the lakehouse.

- Great Expectations Integration: Built-in data quality checks to ensure reliable insights.

- SQL-First Experience: Provides a familiar environment for traditional data analysts.

Pros

- Arguably the fastest query engine for federated data across multiple sources.

- Extreme flexibility—runs on any cloud or on-premises environment.

Cons

- Focused primarily on the “query” and “governance” part of the lakehouse, not the storage.

- May require additional tools for heavy machine learning or notebook-based work.

Platforms / Deployment

- AWS / Azure / GCP / On-premises

- Cloud / Hybrid / Self-hosted

Security & Compliance

- Ranger / Hive Metastore integration, SSO, RBAC.

- SOC 2 Type II.

Integrations & Ecosystem

Open and flexible connectivity.

- dbt / Airflow

- Tableau / Power BI / ThoughtSpot

- Snowflake / BigQuery (as sources)

Support & Community

Excellent professional support from the creators of Trino and a strong open-source lineage.

#7 — Dremio

Short description: Known as the “easy button” for data lakes, Dremio provides a self-service Lakehouse platform built on Apache Arrow for lightning-fast SQL.

Key Features

- Reflections: An acceleration technology that maintains precomputed, optimized copies of source data and uses them to speed up queries automatically.

- Arctic: A catalog for Iceberg that provides Git-like capabilities (branching, merging) for data.

- Arrow Flight: High-performance data transport that is much faster than traditional ODBC/JDBC.

- Semantic Layer: Allows data teams to define business logic once and share it across all BI tools.

- No-Copy Data Sharing: Allows users to share datasets across the organization without creating duplicates.

- SQL Runner: A powerful, browser-based SQL editor for rapid data exploration.

Pros

- Exceptional performance-to-cost ratio for SQL workloads on S3 or ADLS.

- User-friendly interface that empowers non-technical analysts to explore the lake.

Cons

- Less focused on the “data science” and “ML” side compared to Databricks.

- Self-hosting the software can require significant cluster management effort.

Platforms / Deployment

- AWS / Azure / Google Cloud / On-premises

- Cloud / Hybrid / Self-hosted

Security & Compliance

- RBAC, LDAP/AD integration, TLS encryption.

- SOC 2.

Integrations & Ecosystem

Strongest in the BI and SQL world.

- Tableau / Power BI

- Apache Iceberg / Nessie

- dbt

Support & Community

Responsive support and a dedicated “Dremio University” for free user training.

#8 — Onehouse

Short description: A managed service for the Apache Hudi community, Onehouse provides a “plug-and-play” lakehouse that automates data ingestion and table management.

Key Features

- OneTable: An open-source project (since renamed Apache XTable) that allows Hudi tables to be read as Delta Lake or Iceberg with no data movement.

- Universal Ingest: Automated, low-code ingestion from databases, streams, and files.

- Continuous Compaction: Automatically cleans up and optimizes small files in the background.

- Incremental ETL: Processes only the changes in data, drastically reducing compute costs.

- Metadata Indexing: High-speed lookup for specific records within a massive data lake.

- Managed Hudi: Removes the complexity of tuning and maintaining Apache Hudi clusters.
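As a rough illustration of what background compaction involves, the sketch below plans which small files to merge into larger ones using a simple greedy grouping. It is not Onehouse's or Hudi's actual algorithm: `plan_compaction`, the 128 MB target, and the file listing are assumptions chosen for the example.

```python
def plan_compaction(file_sizes_mb, target_mb=128):
    """Greedily group small files into batches of at most target_mb each."""
    batches, current, current_size = [], [], 0
    for name, size in sorted(file_sizes_mb.items()):
        if size >= target_mb:
            continue  # already large enough; leave it alone
        if current and current_size + size > target_mb:
            if len(current) > 1:
                batches.append(current)  # only rewrite when files are actually merged
            current, current_size = [], 0
        current.append(name)
        current_size += size
    if len(current) > 1:
        batches.append(current)
    return batches


# Hypothetical file listing (name -> size in MB).
files = {"f1": 10, "f2": 40, "f3": 200, "f4": 60, "f5": 50, "f6": 5}
plan = plan_compaction(files)
```

With this listing the plan merges `f1`, `f2`, and `f4` into one file and `f5` and `f6` into another, while the 200 MB file is left untouched. Fewer, larger files generally mean less metadata to scan and fewer object-store requests per query.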

Pros

- Best-in-class support for real-time, mutable data (updates and deletes).

- Reduces the “data engineering tax” by automating the most difficult parts of lake management.

Cons

- Newer entry in the market compared to established giants.

- Highly focused on the Hudi ecosystem; less ideal if you are strictly committed to another format.

Platforms / Deployment

- AWS / Google Cloud

- Cloud

Security & Compliance

- VPC Peering, Private Link, Encryption at rest.

- SOC 2 Type II.

Integrations & Ecosystem

Focused on the modern data-in-motion stack.

- Apache Kafka / Confluent

- Amazon Redshift / BigQuery (as query engines)

- StreamSets

Support & Community

Deep expertise from the original creators of Apache Hudi and high-touch customer support.

#9 — Cloudera Data Platform (CDP)

Short description: The evolution of the traditional Hadoop stack, CDP provides a hybrid, multi-cloud Lakehouse for high-security enterprise environments.

Key Features

- Shared Data Experience (SDX): Consistent security, governance, and metadata across all environments.

- Cloudera Data Warehouse: A powerful SQL engine that can handle thousands of concurrent users.

- Cloudera Machine Learning: Integrated workspace for building and deploying AI models at scale.

- Ozone Object Store: High-performance, scalable storage for on-premises lakehouse deployments.

- DataFlow: Visual interface for managing complex data movement and streaming.

- Iceberg Support: Native integration of Apache Iceberg for all storage and compute services.

Pros

- The best choice for hybrid-cloud and strict on-premises requirements.

- Incredible depth of features for large, legacy enterprise data environments.

Cons

- Can be significantly more expensive and complex than cloud-native “SaaS” options.

- The legacy Hadoop DNA can sometimes make the UI feel less modern.

Platforms / Deployment

- AWS / Azure / GCP / On-premises

- Cloud / Hybrid / Private Cloud

Security & Compliance

- Apache Ranger, Atlas integration, Kerberos.

- SOC 2, ISO 27001, HIPAA, PCI DSS.

Integrations & Ecosystem

Comprehensive coverage of the big data ecosystem.

- Apache NiFi / Spark / Flink

- Tableau / Power BI

- Apache Hive / Impala

Support & Community

World-class enterprise support and a veteran community of big data professionals.

#10 — Oracle Cloud Lakehouse

Short description: Combines the Oracle Autonomous Database with high-performance object storage and Big Data service to provide a unified data hub.

Key Features

- Autonomous Database: Self-driving, self-securing, and self-repairing SQL engine.

- Big Data Service: Fully managed Hadoop and Spark clusters for the lakehouse.

- OCI Data Catalog: Centralized metadata management across all Oracle cloud services.

- Zero-ETL with MySQL: Direct integration between operational databases and the analytical lake.

- OCI Data Science: Collaborative workspace for building and deploying ML models.

- Integrated API Gateway: Securely expose lakehouse insights as external APIs.

Pros

- Exceptional performance for organizations already using Oracle databases.

- Automation features reduce the need for large DBA teams.

Cons

- Optimized for the Oracle Cloud; integration with other cloud providers is more difficult.

- Less focus on open-source community standards compared to Databricks or Snowflake.

Platforms / Deployment

- Oracle Cloud (OCI)

- Cloud

Security & Compliance

- Oracle Data Safe, Identity and Access Management.

- SOC 2, ISO 27001, HIPAA, FedRAMP High.

Integrations & Ecosystem

Focused on the Oracle enterprise stack.

- Oracle Analytics Cloud

- GoldenGate

- NetSuite / Fusion Apps

Support & Community

Vast enterprise support network and a dedicated community of Oracle professionals.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| 1. Databricks | Unified AI & Data | AWS, Azure, GCP | Hybrid | Unity Catalog | 4.8/5 |
| 2. Snowflake | Low Maintenance | AWS, Azure, GCP | Cloud | Data Marketplace | 4.7/5 |
| 3. Google BigLake | Multi-Cloud Governance | AWS, Azure, GCP | Cloud | BigQuery Omni | 4.6/5 |
| 4. Amazon Lake Formation | AWS Ecosystem | AWS | Cloud | Cell-Level Security | 4.4/5 |
| 5. Microsoft Fabric | Microsoft/BI Integration | Azure | Cloud | OneLake Architecture | 4.5/5 |
| 6. Starburst | Federated Querying | Multi-Cloud / On-prem | Hybrid | Warp Speed Indexing | 4.6/5 |
| 7. Dremio | High-Speed SQL | Multi-Cloud / On-prem | Hybrid | Data Reflections | 4.5/5 |
| 8. Onehouse | Real-time / Hudi | AWS, Google Cloud | Cloud | OneTable Interop | N/A |
| 9. Cloudera CDP | Hybrid / On-premises | Multi-Cloud / On-prem | Hybrid | Shared Data Exp (SDX) | 4.3/5 |
| 10. Oracle Lakehouse | Oracle Power Users | OCI | Cloud | Autonomous Database | 4.4/5 |

Evaluation & Scoring of Lakehouse Platforms

To provide a clear technical comparison, we have scored each tool on a scale of 1 to 10 across our key criteria. The “Weighted Total” is the sum of each score multiplied by the weight shown in its column header.

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Databricks | 10 | 6 | 10 | 9 | 10 | 9 | 7 | 8.75 |
| Snowflake | 8 | 10 | 10 | 10 | 9 | 9 | 7 | 8.85 |
| Google BigLake | 9 | 7 | 8 | 10 | 9 | 8 | 8 | 8.40 |
| Amazon Lake Formation | 8 | 6 | 9 | 9 | 8 | 9 | 9 | 8.20 |
| Microsoft Fabric | 8 | 9 | 9 | 9 | 8 | 9 | 8 | 8.50 |
| Starburst | 8 | 7 | 9 | 9 | 10 | 8 | 7 | 8.15 |
| Dremio | 8 | 8 | 8 | 8 | 10 | 8 | 9 | 8.35 |
| Onehouse | 9 | 7 | 8 | 8 | 9 | 8 | 8 | 8.20 |
| Cloudera CDP | 9 | 5 | 9 | 10 | 8 | 9 | 6 | 7.95 |
| Oracle Lakehouse | 8 | 7 | 7 | 10 | 9 | 8 | 8 | 8.00 |
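For readers who want to re-weight the criteria for their own priorities, the weighted total can be reproduced with a few lines of Python. This is a small sketch: the weights come from the column headers above, the two score sets are copied from the Google BigLake and Dremio rows, and the function and dictionary names are just illustrative.

```python
# Weights in percent, taken from the column headers of the scoring table.
WEIGHTS = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}


def weighted_total(scores, weights=WEIGHTS):
    """Combine 1-10 criterion scores into a single weighted total on the same scale."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must add up to 100 percent")
    # Integer percent weights keep the arithmetic exact until the final division.
    return sum(scores[name] * weight for name, weight in weights.items()) / 100


# Scores copied from the Google BigLake and Dremio rows.
biglake = {"core": 9, "ease": 7, "integrations": 8, "security": 10,
           "performance": 9, "support": 8, "value": 8}
dremio = {"core": 8, "ease": 8, "integrations": 8, "security": 8,
          "performance": 10, "support": 8, "value": 9}

biglake_total = weighted_total(biglake)  # 8.4
dremio_total = weighted_total(dremio)    # 8.35
```

Passing a different `weights` dictionary (for example, one that raises "value" and lowers "core") shows how sensitive the ranking is to the weighting, which is worth checking before relying on any single total.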

How to Interpret the Scores:

- Performance (10%): A score of 10 for Databricks, Starburst, and Dremio reflects their strength in large-scale distributed query execution.

- Ease of Use (15%): Snowflake and Microsoft Fabric lead here due to their SaaS nature and intuitive interfaces.

- Core Features (25%): Databricks receives a 10 for its comprehensive machine learning and engineering integration.

Which Lakehouse Platform Tool Is Right for You?

Solo / Freelancer

If you are a solo data consultant or a freelancer building a modern data stack for a client, Snowflake and Dremio Cloud are strong choices. Snowflake’s zero-maintenance model means you won’t spend half your billable hours managing clusters, while Dremio Cloud offers an entry-level tier suited to small-scale SQL exploration on S3.

SMB

For Small and Medium Businesses that are already integrated into a specific cloud ecosystem, the “native” choice is usually the best. If you are on Azure, Microsoft Fabric provides an all-in-one experience that includes Power BI, making it extremely cost-effective. If you are on AWS, Amazon Lake Formation allows you to grow as your data grows without huge upfront costs.

Mid-Market

Companies that have specialized data engineering teams but want to avoid the “big cloud” tax should evaluate Onehouse or Starburst. Onehouse is excellent for teams that need to handle real-time data and frequent updates (like e-commerce or fintech), while Starburst is ideal for teams that need to query data across multiple disparate systems without moving it.

Enterprise

For large enterprises with multi-cloud or hybrid-cloud requirements, Databricks and Cloudera CDP are the primary contenders. Databricks is the winner for organizations that prioritize machine learning and GenAI, while Cloudera is the go-to for organizations that must maintain significant on-premises data lakes while slowly migrating to the cloud.

Budget vs Premium

- Budget: Dremio and Starburst (Galaxy) offer highly efficient compute that can significantly lower your monthly cloud bill if managed correctly.

- Premium: Snowflake and Databricks are premium services that charge a higher markup for their advanced automation and “one-click” simplicity.

Feature Depth vs Ease of Use

- Feature Depth: Databricks and Cloudera offer the most “knobs” and tools for every possible data scenario.

- Ease of Use: Snowflake and Microsoft Fabric provide the smoothest, most “app-like” experience for users.

Integrations & Scalability

- Top Integrations: Snowflake and AWS Lake Formation are at the center of the largest partnership ecosystems in the world.

- Top Scalability: Databricks and Cloudera have been proven at the multi-petabyte scale in some of the world’s most demanding environments.

Security & Compliance Needs

Organizations with extreme security needs (Government, Healthcare) should prioritize Google Cloud BigLake for its cross-cloud security or Amazon Lake Formation for its highly granular cell-level permissions.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between a Data Warehouse and a Lakehouse?

A data warehouse is optimized for structured data and high-speed SQL, while a data lake is for storing raw files of any type. A Lakehouse combines these, allowing you to run SQL queries and machine learning directly on the raw files in the lake using a metadata layer that provides warehouse-like features.

2. Do I need to move all my data into a Lakehouse platform?

Not necessarily. Modern platforms like Starburst and Google BigLake allow for “federated” querying, meaning you can leave your data where it is (S3, GCS, or on-prem) and query it through the Lakehouse interface without expensive data movement.

3. Which open table format should I choose: Iceberg, Delta, or Hudi?

The choice is becoming less critical as tools like Onehouse OneTable and Databricks UniForm allow you to write in one format and read in others. Generally, Delta Lake is the standard for Databricks users, while Iceberg is gaining massive traction among Snowflake and AWS users.

4. How does a Lakehouse help with Generative AI?

Lakehouses are ideal for GenAI because they can store the unstructured data (text, images) needed for training alongside the structured metadata. Many platforms now include built-in vector search and LLM integrations to facilitate building AI applications.

5. Is a Lakehouse more expensive than a traditional Data Warehouse?

Storage is typically much cheaper in a Lakehouse because it uses open object stores like S3. However, the compute costs for complex queries can be similar. The real savings come from reducing the need for expensive and time-consuming ETL pipelines.

6. Can I run real-time dashboards on a Lakehouse?

Yes. Technologies like Onehouse and Databricks Lakeflow have significantly reduced ingestion latency, allowing for sub-second data updates. Query engines like Dremio and Starburst then provide the high-speed execution needed for interactive dashboards.

7. What is “Zero-ETL” and how does it work?

Zero-ETL is a feature where the platform automatically synchronizes data from an operational database (like MySQL or PostgreSQL) to the Lakehouse in the background, removing the need for developers to write and maintain manual data pipelines.
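One common way to implement this kind of background synchronization is change data capture: the operational database emits a feed of insert, update, and delete events, and the analytical side replays them in order. The sketch below shows only that replay step in plain Python. The event shape, the `apply_changes` helper, and the sample "orders" feed are hypothetical and not any vendor's actual protocol.

```python
def apply_changes(target, changes):
    """Apply ordered change events (insert/update/delete) to a keyed target table."""
    for event in changes:
        key = event["key"]
        if event["op"] == "delete":
            target.pop(key, None)
        else:  # "insert" and "update" are both upserts on the target
            target[key] = event["row"]
    return target


# Hypothetical change feed from an operational "orders" table.
change_feed = [
    {"op": "insert", "key": 1, "row": {"status": "new", "total": 40}},
    {"op": "insert", "key": 2, "row": {"status": "new", "total": 15}},
    {"op": "update", "key": 1, "row": {"status": "paid", "total": 40}},
    {"op": "delete", "key": 2},
]

analytics_orders = apply_changes({}, change_feed)
```

After replaying the feed, the analytical copy holds a single paid order with key 1. Treating inserts and updates as upserts makes the replay safe to repeat, which matters when a feed is redelivered after a failure.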

8. Does a Lakehouse require a specific cloud provider?

While many Lakehouse platforms are cloud-native, tools like Cloudera CDP, Starburst, and Dremio can be deployed on-premises or in hybrid environments, providing flexibility for organizations with strict data residency requirements.

9. How do these platforms handle data governance?

Lakehouse platforms provide a centralized metadata layer (like Unity Catalog or Lake Formation) where you can define security policies. These policies are then enforced regardless of which tool or engine is accessing the data.

10. Can non-technical users use a Lakehouse?

Yes. Most modern Lakehouse platforms provide user-friendly SQL editors and integrate directly with BI tools like Power BI and Tableau. Newer “Copilot” features also allow users to generate queries and reports using natural language.

Conclusion

The Lakehouse platform has effectively ended the debate between data lakes and data warehouses. By providing a single, unified architecture for all data types and workloads, platforms like Databricks, Snowflake, and Microsoft Fabric have drastically simplified the data professional’s job. As you evaluate these tools, remember that the “best” platform is the one that fits into your existing cloud strategy and team skill set. We recommend starting with a proof-of-concept focused on “open” table formats, as this ensures your data remains portable and future-proof as the landscape continues to evolve.