
Introduction

MLOps (Machine Learning Operations) platforms are integrated suites of tools designed to streamline the entire lifecycle of machine learning, from data preparation and model training to deployment, monitoring, and retraining. Think of MLOps as the intersection of Machine Learning, DevOps, and Data Engineering. While standard DevOps focuses on the lifecycle of code, MLOps handles the added complexity of data and model versioning, ensuring that AI models remain accurate and reliable once they hit production.

Organizations are moving away from “artisan” AI, where models are built in isolation, toward industrialized AI. This shift is driven by the need to manage thousands of models simultaneously without increasing headcount proportionally. A robust MLOps platform provides the “assembly line” that makes models reproducible, auditable, and capable of delivering consistent business value, while reducing the risk of “model drift” and silent failures.

Real-world use cases:

- Automated Retraining Pipelines: Detecting when a recommendation engine’s accuracy drops due to shifting consumer trends and automatically triggering a new training run with fresh data.

- Compliance & Governance: Maintaining a full lineage of which dataset and which version of code produced a specific model in highly regulated sectors like banking.

- Resource Optimization: Managing expensive GPU clusters dynamically to ensure that high-priority training jobs get resources while idling nodes are shut down to save costs.
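At its core, the retraining use case is a monitor that compares recent accuracy against a threshold and starts a training run once that threshold is crossed. The sketch below shows only that trigger logic and makes a few assumptions: labeled feedback arrives one prediction at a time, and `RetrainTrigger`, `window`, `threshold`, and the `on_retrain` callback are hypothetical names, not part of any platform's API. In a real deployment, the callback would submit a pipeline run on your MLOps platform.

```python
from collections import deque
from typing import Callable


class RetrainTrigger:
    """Fire a retraining callback when rolling accuracy drops below a threshold.

    Hypothetical helper: `window`, `threshold`, and `on_retrain` are
    illustrative parameters, not settings of any MLOps product.
    """

    def __init__(self, window: int, threshold: float,
                 on_retrain: Callable[[float], None]) -> None:
        self.outcomes = deque(maxlen=window)  # True = prediction was correct
        self.threshold = threshold
        self.on_retrain = on_retrain

    def record(self, predicted, actual) -> None:
        self.outcomes.append(predicted == actual)
        # Wait for a full window so a few early errors can't trigger a retrain.
        if len(self.outcomes) < self.outcomes.maxlen:
            return
        accuracy = sum(self.outcomes) / len(self.outcomes)
        if accuracy < self.threshold:
            self.on_retrain(accuracy)  # e.g. submit a training pipeline run
            self.outcomes.clear()      # start a fresh window afterwards


# Usage: a window of 4 with 2 correct predictions (accuracy 0.5) fires once.
runs = []
trigger = RetrainTrigger(window=4, threshold=0.75, on_retrain=runs.append)
for pred, actual in [(1, 1), (0, 0), (1, 0), (1, 0)]:
    trigger.record(pred, actual)
print(runs)  # [0.5]
```

Clearing the window after a trigger prevents the same stretch of bad predictions from firing repeatedly while the new model trains.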

Buyer evaluation criteria:

- Experiment Tracking: The ability to log every parameter, code version, and result during the research phase.

- Model Registry: A centralized “version control” system for models that manages transitions from staging to production.

- Deployment Orchestration: Support for A/B testing, canary deployments, and blue-green strategies.

- Monitoring & Observability: Tools to track data drift, concept drift, and system health in real-time.

- Feature Store: A centralized repository for sharing and discovering features across different ML projects.

- Pipeline Automation: Support for Directed Acyclic Graphs (DAGs) to automate complex multi-step workflows.

- Data Versioning: The ability to snapshot the exact state of data used for a specific training run.

- Collaborative Infrastructure: Multi-user support with role-based access control for shared projects.
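Here is a minimal in-memory sketch that makes the model registry and data versioning criteria concrete. It assumes a simplified stage flow (Registered, Staging, Production, Archived), uses a SHA-256 content hash as the data-version identifier, and records a code commit supplied by the caller for lineage. `ModelRegistry`, `transition`, and the stage names are illustrative and do not mirror any specific product's API.

```python
import hashlib
from dataclasses import dataclass, field

# Allowed stage transitions (an assumed, simplified lifecycle).
ALLOWED_TRANSITIONS = {
    "Registered": {"Staging"},
    "Staging": {"Production", "Archived"},
    "Production": {"Archived"},
    "Archived": set(),
}


def data_fingerprint(data: bytes) -> str:
    """SHA-256 of the raw training data: identical bytes give identical IDs."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class ModelVersion:
    version: int
    params: dict
    code_commit: str  # e.g. a git SHA supplied by the caller
    data_hash: str    # fingerprint of the exact training data
    stage: str = "Registered"


@dataclass
class ModelRegistry:
    models: dict = field(default_factory=dict)  # model name -> list of ModelVersion

    def register(self, name: str, params: dict, code_commit: str,
                 training_data: bytes) -> ModelVersion:
        versions = self.models.setdefault(name, [])
        mv = ModelVersion(len(versions) + 1, params, code_commit,
                          data_fingerprint(training_data))
        versions.append(mv)
        return mv

    def transition(self, name: str, version: int, new_stage: str) -> None:
        mv = self.models[name][version - 1]
        if new_stage not in ALLOWED_TRANSITIONS[mv.stage]:
            raise ValueError(f"v{version}: {mv.stage} -> {new_stage} not allowed")
        if new_stage == "Production":
            # Keep a single production version: archive whichever one is live.
            for other in self.models[name]:
                if other.stage == "Production":
                    other.stage = "Archived"
        mv.stage = new_stage
```

Promoting a new version to Production archives the previous one, so each model name has exactly one live version. Every version also keeps the data hash and commit needed to answer the lineage question from the compliance use case.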

- Best for: Data scientists, ML engineers, and IT operations teams in organizations that have moved past the initial AI experimentation phase and are now deploying models into mission-critical production environments.

- Not ideal for: Pure research labs that never intend to put models into a production environment or small teams with only one or two static models that do not require frequent updates.

Key Trends in MLOps Platforms

- Rise of LLMOps: Specialized workflows for managing Large Language Models, including prompt versioning, vector database integration, and fine-tuning at scale.

- Serverless MLOps: A transition toward platforms that abstract away the underlying Kubernetes or VM management, allowing engineers to focus purely on the pipeline logic.

- Standardization of Feature Stores: The emergence of specialized layers that ensure the same data features used during training are available with low latency during real-time inference.

- Automated Model Governance: Built-in toolkits that automatically generate compliance documentation and bias reports to satisfy emerging global AI regulations.

- Edge MLOps Consolidation: Tools specifically designed to manage the deployment and monitoring of models on millions of distributed IoT and mobile devices.

- Unified Data and AI Governance: A trend toward merging data catalogs with model registries to provide a single view of the entire information supply chain.

- Shift-Left Security in ML: Integrating vulnerability scanning for model weights and containerized ML environments early in the development cycle.

- Declarative MLOps: Moving toward “Infrastructure as Code” for ML, where entire pipelines are defined in YAML or Python and managed via GitOps.
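Pipeline automation and declarative MLOps both rest on the same idea: describe the steps and their dependencies as data, then let an engine run them in dependency order. The minimal sketch below assumes the spec has already been loaded from YAML into a dict. The step names are hypothetical, and the topological sort comes from Python's standard-library `graphlib` (3.9+).

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Callable

# Pipeline spec as it might look after loading a YAML file: each step lists
# the steps it depends on. Step names are hypothetical.
PIPELINE_SPEC: dict[str, list[str]] = {
    "ingest": [],
    "validate": ["ingest"],
    "featurize": ["ingest"],
    "train": ["validate", "featurize"],
    "evaluate": ["train"],
}


def run_pipeline(spec: dict[str, list[str]],
                 steps: dict[str, Callable[[dict], object]]) -> list[str]:
    """Run every step after all of its dependencies; return the order used."""
    order = list(TopologicalSorter(spec).static_order())
    outputs: dict[str, object] = {}
    for name in order:
        # Each step receives the outputs of everything that has run so far.
        outputs[name] = steps[name](outputs)
    return order


# Usage: stub steps that just report their own name.
stub_steps = {name: (lambda outputs, n=name: n) for name in PIPELINE_SPEC}
print(run_pipeline(PIPELINE_SPEC, stub_steps))
```

If the spec contains a cycle, `static_order()` raises `graphlib.CycleError`, which is exactly what the "acyclic" in DAG protects against. Real orchestrators add retries, caching, and parallel execution of independent steps (here, `validate` and `featurize`).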

How We Selected These Tools (Methodology)

- Industrial Maturity: We prioritized tools that have a proven track record in high-stakes production environments.

- Full Lifecycle Coverage: Preference was given to platforms that cover the “Inner Loop” (development) and the “Outer Loop” (production).

- Integration Flexibility: We evaluated how well these tools connect with standard data lakes, warehouses, and CI/CD systems.

- Operational Scalability: The ability of the platform to handle increasing numbers of models and larger datasets without performance degradation.

- Security & Compliance: We looked for robust RBAC, audit trails, and encryption standards.

- Market Momentum: Analysis of community adoption, GitHub activity (for open source), and enterprise customer growth.

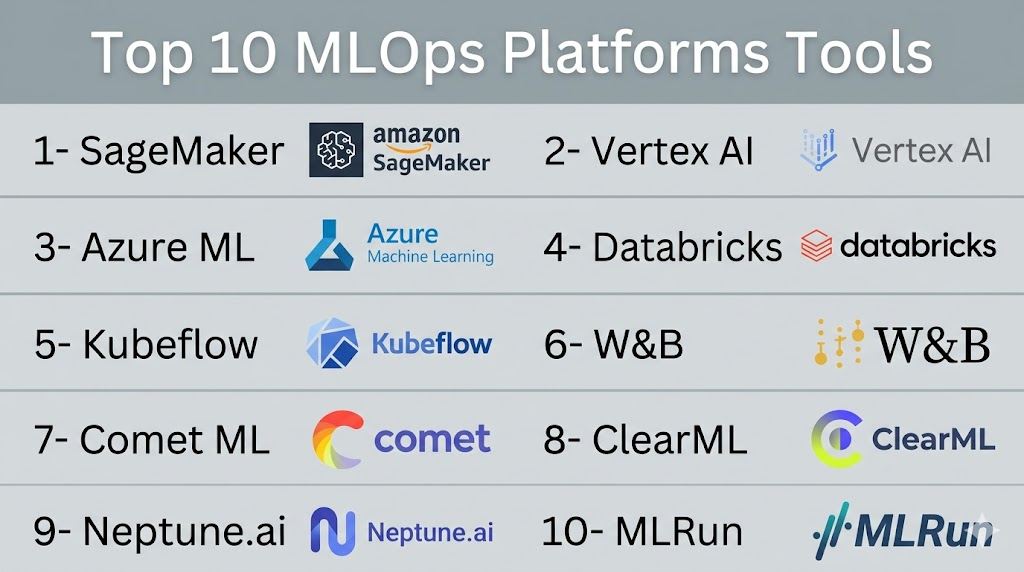

Top 10 MLOps Platforms

#1 — Amazon SageMaker

Short description:

Amazon SageMaker is one of the most comprehensive managed services in the MLOps space, designed specifically for the AWS ecosystem. It provides a unified environment for the entire ML lifecycle, including specialized tools for data labeling, feature engineering, experiment tracking, and automated model deployment. It is built for organizations that want to eliminate the operational burden of managing their own ML infrastructure.

Key Features

- SageMaker Pipelines: A purpose-built CI/CD service for machine learning.

- Model Monitor: Detects data-quality, model-quality, and bias drift in production models.

- Feature Store: A fully managed repository to store, share, and manage features.

- SageMaker Projects: Provides templates for standardized MLOps workflows using Jenkins or AWS CodePipeline.

- Inference Recommender: Load-tests your model and recommends the instance type and configuration that best meet your performance needs.

Pros

- Deepest integration with the AWS stack (S3, IAM, CloudWatch).

- Massive scale: can handle training jobs across thousands of GPUs.

Cons

- The “SageMaker Studio” UI can be complex and intimidating for new users.

- Potential for significant vendor lock-in within the AWS environment.

Platforms / Deployment

- Web / API / CLI

- Cloud (AWS)

Security & Compliance

- VPC Isolation, IAM, KMS Encryption, MFA, CloudTrail Audit Logs.

- SOC 2, ISO 27001, HIPAA, FedRAMP, PCI-DSS.

Integrations & Ecosystem

SageMaker is the hub of the AWS AI ecosystem and connects natively with most AWS data services as well as many third-party tools.

- AWS Glue, Redshift, and S3.

- Kubernetes (via SageMaker Operators).

- Integration with Bedrock for Generative AI workflows.

Support & Community

AWS Premium Support tiers are available. There is a massive global community of certified AWS practitioners and extensive documentation.

#2 — Google Vertex AI

Short description:

Vertex AI is Google Cloud’s unified AI platform that integrates MLOps features with Google’s world-class data and AI infrastructure. It is designed for teams that want to use the same tools that power Google’s own AI products. It excels in its support for TensorFlow, JAX, and the latest foundation models, offering a highly automated “serverless” experience for MLOps.

Key Features

- Vertex AI Pipelines: Based on Kubeflow, allowing for portable and reproducible workflows.

- Model Registry: A centralized repository to manage the lifecycle of your models.

- Vertex AI Metadata: Automatically tracks artifacts and lineage for every execution.

- Vizier: A black-box optimization service for hyperparameter tuning.

- Vertex Explainable AI: Provides tools to understand model predictions and detect bias.

Pros

- Superior integration with BigQuery for “Zero-ETL” machine learning.

- Access to specialized TPU hardware for high-speed training.

Cons

- Pricing for specialized services like AutoML can be high.

- Less documentation for third-party cloud integrations compared to AWS.

Platforms / Deployment

- Web / API

- Cloud (GCP)

Security & Compliance

- CMEK, VPC Service Controls, IAM, SSO.

- SOC 2, ISO 27001, HIPAA, FedRAMP High.

Integrations & Ecosystem

Heavily focused on the GCP and Open Source AI stack.

- BigQuery, Dataflow, and Cloud Storage.

- Kubeflow and TFX (TensorFlow Extended).

- Deep integration with Hugging Face.

Support & Community

Standard Google Cloud support tiers. It has a fast-growing community, especially among researchers and GenAI developers.

#3 — Azure Machine Learning

Short description:

Azure Machine Learning is Microsoft’s enterprise-grade platform for the end-to-end ML lifecycle. It stands out for its deep integration with Azure DevOps and GitHub, making it a natural fit for software engineering teams that want to treat ML as a standard part of their CI/CD process. It is highly regarded for its robust security and compliance features.

Key Features

- Machine Learning Pipelines: Allows for the creation of complex, reusable workflows.

- Model Management: Tracks model versions and lineage across environments.

- Responsible AI Dashboard: A comprehensive tool for debugging and explaining models.

- Data Asset Management: Versions and tracks datasets used for training.

- Managed Online Endpoints: Simplifies the deployment and scaling of real-time models.
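Azure ML's managed online endpoints can split traffic across several deployments behind a single endpoint, which is how blue-green and canary rollouts are usually done there. The routing idea itself is just weighted sampling. The sketch below is a generic illustration, not Azure's implementation, and `make_router` and the `blue`/`green` names are assumptions.

```python
import random
from collections import Counter
from typing import Callable


def make_router(traffic: dict[str, int], rng: random.Random) -> Callable[[], str]:
    """Return a function that picks a deployment for each incoming request.

    `traffic` maps deployment names to integer percentages that must sum to
    100, e.g. {"blue": 90, "green": 10} sends about 10% of requests to a canary.
    """
    if sum(traffic.values()) != 100:
        raise ValueError("traffic percentages must sum to 100")
    names = list(traffic)
    weights = list(traffic.values())

    def route() -> str:
        # Weighted random choice; each request is routed independently.
        return rng.choices(names, weights=weights, k=1)[0]

    return route


# Usage: simulate 10,000 requests against a 90/10 canary split.
route = make_router({"blue": 90, "green": 10}, random.Random(42))
print(Counter(route() for _ in range(10_000)))  # counts close to a 90/10 split
```

A canary rollout then amounts to stepping the green share from 10 toward 100 while its monitored metrics stay healthy. A blue-green cutover is the same router with a single jump from 0 to 100.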

Pros

- Best-in-class integration with Azure DevOps and GitHub Actions.

- Strongest focus on enterprise governance and Responsible AI.

Cons

- The transition from the legacy v1 SDK/CLI (and the older ML Studio classic) to v2 can be confusing.

- Performance in the Web UI can occasionally be laggy with large numbers of assets.

Platforms / Deployment

- Web / Windows / macOS / Linux

- Cloud (Azure)

Security & Compliance

- Entra ID (Azure AD), VNET Support, RBAC, Managed Identities.

- SOC 2, ISO 27001, HIPAA, GDPR, FedRAMP.

Integrations & Ecosystem

Central to the Microsoft data stack and developer tools.

- Azure Synapse, Power BI, and Data Lake Storage.

- GitHub and Azure DevOps.

- Native integration with Azure OpenAI Service.

Support & Community

Microsoft Enterprise Support. Wide availability of certified consultants and extensive documentation.

#4 — Databricks (Mosaic AI)

Short description:

Databricks provides a unified Data and AI platform built on the “Lakehouse” architecture. Its MLOps capabilities, now enhanced by the Mosaic AI suite, allow teams to manage data engineering and machine learning in a single environment. Databricks created MLflow and remains its primary contributor. MLflow is among the most widely adopted open-source tools for experiment tracking and model management.

Key Features

- MLflow Integration: Built-in support for experiment tracking, model registry, and serving.

- Unity Catalog: Provides a unified governance layer for data, models, and features.

- Mosaic AI Model Serving: A serverless environment for deploying high-performance models.

- Feature Store: Integrated directly into the Delta Lake architecture.

- Collaborative Notebooks: Shared environments for data scientists and engineers.

Pros

- Eliminates the “handoff” problem between data engineers and ML engineers.

- Built on open-source standards (Spark, MLflow, Delta Lake).

Cons

- Costs, billed in Databricks Units (DBUs), can be difficult to predict.

- Can be overkill for organizations that don’t need Spark-level scaling.

Platforms / Deployment

- Web / API

- Cloud (AWS, Azure, GCP)

Security & Compliance

- SSO/SAML, MFA, Unity Catalog Governance, Encryption.

- SOC 2, ISO 27001, HIPAA, GDPR.

Integrations & Ecosystem

Vibrant ecosystem across all three major cloud providers.

- Delta Lake, Spark, and dbt.

- Tableau and Power BI.

- MLflow (Native).

Support & Community

Professional enterprise support. Massive community influence due to the popularity of Spark and MLflow.

#5 — Kubeflow

Short description:

Kubeflow is one of the most widely used open-source MLOps platforms built on top of Kubernetes. It is designed to make deploying machine learning workflows on Kubernetes simple, portable, and scalable. It suits organizations that want complete control over their infrastructure or need to run MLOps in on-premise data centers.

Key Features

- Kubeflow Pipelines: A platform for building and deploying portable ML workflows.

- Katib: A cloud-native system for hyperparameter tuning.

- Central Dashboard: A unified UI for accessing all Kubeflow components.

- Notebooks: Integrated support for Jupyter, VS Code, and RStudio.

- KServe (formerly KFServing): A standardized model serving stack on Kubernetes.

Pros

- Completely free and open source (no software licensing costs).

- Can be deployed anywhere Kubernetes runs (Cloud, On-prem, Edge).

Cons

- High operational complexity; requires a dedicated Kubernetes team to maintain.

- Lack of “out-of-the-box” support and SLAs.

Platforms / Deployment

- Linux / Windows / macOS (via Kubernetes)

- Self-hosted / Hybrid / Cloud

Security & Compliance

- Istio-based security, RBAC, Namespace isolation.

- Varies / Depends on underlying infrastructure.

Integrations & Ecosystem

Deeply integrated with the Cloud Native Computing Foundation (CNCF) stack.

- Argo Workflows and Tekton.

- Prometheus and Grafana for monitoring.

- TensorFlow, PyTorch, and JAX.

Support & Community

Driven by a massive open-source community. Commercial support is available through companies like Arrikto and Canonical.

#6 — Weights & Biases (W&B)

Short description:

Weights & Biases is a developer-first MLOps platform that focuses on experiment tracking, model management, and collaborative research. Unlike the large cloud suites, W&B is designed to be “infrastructure agnostic,” meaning it can track experiments running on a local laptop, a private cluster, or any public cloud.

Key Features

- W&B Prompts: Specialized tools for visualizing and debugging LLM pipelines.

- Experiments: Automatically logs code, hyperparameters, and environment state.

- Artifacts: Tracks dataset and model versioning with full lineage.

- W&B Launch: Simplifies the execution of ML jobs on remote compute resources.

- Sweeps: A distributed hyperparameter optimization service.
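Random search is one of the simplest strategies that hyperparameter services offer: sample configurations from a search space, evaluate each one, and keep the best. The sketch below runs the search sequentially in a single process. The `space` format and the `random_search` function are hypothetical and do not follow W&B's sweep configuration schema. A hosted service would instead fan trials out to many workers.

```python
import math
import random
from typing import Callable


def random_search(objective: Callable[[dict], float], space: dict,
                  n_trials: int, seed: int = 0) -> tuple[dict, float]:
    """Sample `n_trials` configurations from `space`; return the best one.

    `space` maps each parameter to ("log", low, high) for a log-uniform float
    or ("choice", [options]) for a categorical value (a hypothetical format).
    Higher objective values are better.
    """
    rng = random.Random(seed)
    best_cfg: dict = {}
    best_score = -math.inf
    for _ in range(n_trials):
        cfg = {}
        for name, spec in space.items():
            if spec[0] == "log":
                _, low, high = spec
                # Sample uniformly in log space so each decade is equally likely.
                cfg[name] = math.exp(rng.uniform(math.log(low), math.log(high)))
            else:
                cfg[name] = rng.choice(spec[1])
        score = objective(cfg)  # in practice: train and return a validation metric
        if score > best_score:
            best_cfg, best_score = cfg, score
    return best_cfg, best_score


# Usage with a stand-in objective that peaks at lr = 1e-3 and 2 layers.
def toy_objective(cfg: dict) -> float:
    return -abs(math.log10(cfg["lr"]) + 3) - (0 if cfg["layers"] == 2 else 1)


space = {"lr": ("log", 1e-5, 1e-1), "layers": ("choice", [1, 2, 3])}
best, score = random_search(toy_objective, space, n_trials=200)
print(best, round(score, 3))
```

Fixing the seed makes a search reproducible, which matters when sweep results are logged alongside the experiments they produced.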

Pros

- Very easy to set up: basic experiment tracking takes only a few lines of code.

- Widely regarded as one of the most polished and useful visualization interfaces in the industry.

Cons

- Does not provide its own compute resources (you must bring your own).

- Pricing can be steep for large teams with many active experiments.

Platforms / Deployment

- Web / API / CLI

- Cloud / Self-hosted (Private Instance)

Security & Compliance

- SSO, MFA, Encryption-at-rest, Audit logs.

- SOC 2 Type II.

Integrations & Ecosystem

The “Switzerland” of MLOps, integrating with every major framework.

- PyTorch, TensorFlow, Keras, Hugging Face.

- SageMaker, Vertex AI, and Kubernetes.

- GitHub and Slack.

Support & Community

Excellent developer-centric support. It has massive mindshare among the world’s leading AI research teams.

#7 — Comet ML

Short description:

Comet ML is an MLOps platform that helps data scientists and teams track, compare, and optimize their machine learning experiments. It is highly focused on productivity and providing actionable insights into why certain models perform better than others. It is a direct competitor to Weights & Biases with a strong emphasis on enterprise-wide collaboration.

Key Features

- Comet LLM: A dedicated suite for tracking and evaluating Large Language Models.

- MPM (Model Production Monitoring): Tracks models in production for drift and accuracy.

- Experiment Management: Real-time logging of metrics, assets, and code.

- Panels: Customizable visualizations to compare experiments across teams.

- Data Versioning: Tracks the datasets used in every experiment to support reproducibility.

Pros

- Excellent support for multi-tenant enterprise environments.

- Powerful “Diff” tools to compare code and parameters between different runs.

Cons

- Like W&B, it is a tracking layer, not a compute provider.

- The community ecosystem is slightly smaller than that of Weights & Biases.

Platforms / Deployment

- Web / API

- Cloud / Self-hosted

Security & Compliance

- SSO, MFA, RBAC, Encryption-at-rest.

- SOC 2 Type II.

Integrations & Ecosystem

Integrates seamlessly into the modern data science stack.

- Scikit-learn, PyTorch, TensorFlow.

- SageMaker and Azure ML.

- Snowflake and Google Cloud Storage.

Support & Community

Highly responsive customer success teams. Active community of data scientists and researchers.

#8 — ClearML

Short description:

ClearML is an open-source, end-to-end MLOps platform that prides itself on being “zero-integration,” meaning it can wrap around existing code with minimal changes. It provides a unique combination of experiment tracking, data versioning, orchestration, and model serving, making it one of the most versatile tools on the market.

Key Features

- ClearML Agent (Orchestration): Turns any machine (cloud or on-prem) into a worker node that pulls and executes jobs from queues.

- Experiment Manager: Automated tracking of everything from git commits to console output.

- Data Manager: Version-controlled data management for local and cloud storage.

- Model Serving: Optimized serving for real-time and batch inference.

- Hyperparameter Optimization: Integrated tuning service with easy setup.
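Queue-based orchestration like this works on a pull model: jobs go into a queue, and worker agents take them whenever they are free. The sketch below imitates that pattern in a single process, with threads standing in for machines. `run_workers` is a hypothetical helper, not ClearML's agent, which runs on separate machines and keeps polling its queues.

```python
import queue
import threading
from typing import Any, Callable


def run_workers(jobs: list[Callable[[], Any]], n_workers: int) -> list[tuple[int, Any]]:
    """Queue the jobs, then let `n_workers` threads pull and run them.

    Returns (worker_id, result) pairs in completion order.
    """
    job_queue: queue.Queue = queue.Queue()
    for job in jobs:
        job_queue.put(job)

    results: list[tuple[int, Any]] = []
    lock = threading.Lock()

    def worker(worker_id: int) -> None:
        while True:
            try:
                job = job_queue.get_nowait()  # workers pull; nothing is pushed to them
            except queue.Empty:
                return  # queue drained; a real agent would keep polling
            result = job()
            with lock:
                results.append((worker_id, result))

    threads = [threading.Thread(target=worker, args=(i,)) for i in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


# Usage: ten toy "training jobs" shared by three workers.
jobs = [lambda i=i: i * i for i in range(10)]
for worker_id, result in run_workers(jobs, n_workers=3):
    print(f"worker {worker_id} -> {result}")
```

Because workers pull work, adding capacity is just a matter of starting another worker against the same queue. No central scheduler has to know about the new worker in advance.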

Pros

- One of the few tools that provides both tracking and orchestration for free (open source).

- Very flexible: works as well for a single developer as it does for an enterprise.

Cons

- The UI can be less polished than some of its commercial SaaS competitors.

- Initial configuration of the “ClearML Server” can be tricky for beginners.

Platforms / Deployment

- Web / API / CLI

- Cloud (ClearML Cloud) / Self-hosted

Security & Compliance

- RBAC, SSO (Enterprise), SSL/TLS.

- Not publicly stated.

Integrations & Ecosystem

Focused on the engineering and DevOps side of machine learning.

- Kubernetes, Slurm, and Docker.

- PyTorch, TensorFlow, and XGBoost.

- S3, GCS, and Azure Blob.

Support & Community

Active Slack community. Commercial tiers offer professional support and managed hosting.

#9 — Neptune.ai

Short description:

Neptune.ai is a metadata store for MLOps designed for research and production teams that need to organize their model building. It acts as a single source of truth for all experiment metadata, allowing teams to compare results, share insights, and manage models efficiently. It is designed to stay responsive even with very large numbers of runs and logged metrics.

Key Features

- Experiment Tracking: A fast and flexible logger for any type of ML project.

- Model Registry: Manage the lifecycle and versioning of your production models.

- Collaboration: Easy-to-share links for specific experiments or comparison dashboards.

- Custom UI: Allows users to build their own dashboards to visualize specific metrics.

- Data Version Control (DVC) Integration: Seamlessly tracks data versions alongside model code.

Pros

- Extremely lightweight and focused purely on doing “Metadata” management well.

- Highly performant even with massive amounts of logged data.

Cons

- Limited built-in orchestration or deployment features.

- Not as “all-in-one” as the large cloud platforms.

Platforms / Deployment

- Web / API

- Cloud / Self-hosted

Security & Compliance

- SSO, MFA, Encryption-at-rest.

- SOC 2 Type II.

Integrations & Ecosystem

Deeply integrated with the data science library ecosystem.

- Optuna, Scikit-learn, and LightGBM.

- DVC and GitHub.

- PyTorch Lightning and Catalyst.

Support & Community

Excellent customer support with a focus on helping teams migrate from manual tracking.

#10 — MLRun (Iguazio)

Short description:

MLRun is an open-source MLOps framework, developed by Iguazio (now part of McKinsey), that simplifies the transition from local code to a production-ready pipeline. It is unique in its focus on “feature engineering at the edge” and its ability to turn Python functions into scalable, containerized microservices automatically.

Key Features

- Feature Store: A high-performance store for real-time and batch feature processing.

- Function Marketplace: A library of pre-built, production-hardened ML functions.

- Nuclio Integration: High-performance “Serverless” functions for data processing.

- Real-time Monitoring: Integrated tracking of model performance and drift.

- Workflow Orchestration: Supports multi-step pipelines across diverse compute resources.

Pros

- One of the strongest tools for real-time and high-performance MLOps.

- Excellent for building complex “AI-as-a-Service” applications.

Cons

- The learning curve for the MLRun architecture can be steep.

- The community is smaller than that of Kubeflow or MLflow.

Platforms / Deployment

- Linux / Web

- Cloud / Self-hosted / Hybrid

Security & Compliance

- RBAC, Encryption, IAM integration.

- Not publicly stated.

Integrations & Ecosystem

Focused on the data engineering and real-time processing stack.

- Kubernetes and Nuclio.

- Kafka and Spark.

- Pandas and Scikit-learn.

Support & Community

Commercial support is provided through Iguazio (a McKinsey Company). The open-source community is active but specialized.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| #1 — SageMaker | AWS Enterprises | Web, API | Cloud | Managed Pipelines | N/A |
| #2 — Vertex AI | GCP / GenAI | Web, API | Cloud | TPU Hardware Access | N/A |
| #3 — Azure ML | MSFT Ecosystem | Web, Windows | Cloud | Responsible AI Dashboard | N/A |
| #4 — Databricks | Spark / Data Teams | Web, API | Cloud | Unity Catalog | 4.5/5 |
| #5 — Kubeflow | K8s / On-prem | Linux, Windows | Hybrid | Portability | 4.6/5 |
| #6 — W&B | Research / LLMOps | Web, API | Hybrid | Visualization | 4.8/5 |
| #7 — Comet ML | Enterprise Teams | Web, API | Hybrid | Model Production Monitoring | 4.7/5 |
| #8 — ClearML | DevOps / Budget | Web, API | Hybrid | Zero-Integration Setup | 4.7/5 |
| #9 — Neptune.ai | Metadata Storage | Web, API | Hybrid | Lightweight Tracking | 4.6/5 |
| #10 — MLRun | Real-time / Edge | Linux, Web | Hybrid | Serverless Functions | N/A |

Evaluation & Scoring of MLOps Platforms

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| #1 — SageMaker | 10 | 5 | 10 | 10 | 10 | 9 | 7 | 8.70 |
| #2 — Vertex AI | 10 | 6 | 9 | 10 | 10 | 9 | 8 | 8.85 |
| #3 — Azure ML | 9 | 7 | 10 | 10 | 9 | 9 | 8 | 8.80 |
| #4 — Databricks | 9 | 6 | 9 | 9 | 10 | 8 | 7 | 8.25 |
| #5 — Kubeflow | 8 | 3 | 8 | 8 | 9 | 5 | 10 | 7.35 |
| #6 — W&B | 7 | 10 | 10 | 9 | 8 | 9 | 8 | 8.55 |
| #7 — Comet ML | 8 | 8 | 8 | 9 | 8 | 8 | 7 | 7.95 |
| #8 — ClearML | 8 | 8 | 8 | 7 | 8 | 7 | 10 | 8.10 |
| #9 — Neptune | 7 | 9 | 9 | 9 | 7 | 8 | 8 | 8.05 |
| #10 — MLRun | 9 | 5 | 8 | 8 | 10 | 7 | 8 | 7.90 |

Interpretation:

- 8.5 and above: The highest scorers. Vertex AI, Azure ML, and SageMaker are industry-leading platforms that provide a complete, managed lifecycle with maximum security. W&B reaches this band on the strength of its ease-of-use and integration scores rather than lifecycle breadth.

- 8.0 to 8.49: Highly specialized or developer-first tools that are world-class in specific areas (like tracking).

- 7.5 to 7.99: Robust frameworks that are excellent for real-time or hybrid-cloud use but may have a steeper learning curve.

- Below 7.5: Powerful open-source tools that require significant internal expertise to manage.
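Each weighted total is the sum of the sub-scores multiplied by their column weights (25%, 15%, 15%, 10%, 10%, 10%, 15%). The short sketch below recomputes one row from its sub-scores. The dictionary keys are shorthand for the table's columns.

```python
# Column weights from the scoring table (they sum to 1.0).
WEIGHTS = {
    "core": 0.25, "ease": 0.15, "integrations": 0.15, "security": 0.10,
    "performance": 0.10, "support": 0.10, "value": 0.15,
}
assert abs(sum(WEIGHTS.values()) - 1.0) < 1e-9


def weighted_total(scores: dict[str, float]) -> float:
    """Weighted sum of 0-10 sub-scores, rounded to two decimals."""
    return round(sum(WEIGHTS[k] * scores[k] for k in WEIGHTS), 2)


sagemaker = dict(core=10, ease=5, integrations=10, security=10,
                 performance=10, support=9, value=7)
print(weighted_total(sagemaker))  # 8.7
```

Keeping the weights in one place makes it easy to re-rank the tools under your own priorities. For example, a team that values security above everything else can raise that weight and rerun every row.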

Which MLOps Platform Is Right for You?

Solo / Freelancer

If you are an individual consultant or a freelancer, you don’t need a heavy infrastructure platform. #6 — Weights & Biases or #9 — Neptune.ai are the best options because they are free for personal projects and allow you to stay organized without any IT setup.

SMB

For a small business with a growing data team, #8 — ClearML offers the best value. It is open source, easy to install, and provides the orchestration features you need to manage a small cluster of GPUs without the high cost of a managed cloud service.

Mid-Market

Companies that are scaling their AI efforts and have a dedicated MLOps team should look at #4 — Databricks or #7 — Comet ML. These tools offer the collaboration and governance features needed for multiple teams to work together safely.

Enterprise

If your organization is already on AWS, Azure, or GCP, the native tools (#1 — SageMaker, #3 — Azure ML, or #2 — Vertex AI) are usually the most practical choice because of their security and compliance depth. For organizations that must remain cloud-agnostic or run on-premise, #5 — Kubeflow or #10 — MLRun are the strongest candidates.

Budget vs Premium

- Budget: #5 — Kubeflow and #8 — ClearML are the clear winners as they provide professional-grade features for free (excluding infrastructure costs).

- Premium: #1 — SageMaker and #4 — Databricks are premium investments that cost more in software fees but can deliver large gains in team productivity and automation.

Feature Depth vs Ease of Use

If you want the deepest feature set for complex pipelines, #1 — SageMaker is the strongest option. If you want the tool your data scientists will find easiest to adopt tomorrow, #6 — Weights & Biases is usually the best bet.

Integrations & Scalability

For organizations that need to scale their data processing alongside their models, #4 — Databricks provides the best unified experience. For organizations building real-time, low-latency AI applications at scale, #10 — MLRun is the top choice.

Security & Compliance Needs

#3 — Azure ML and Domino Data Lab (not featured in the top 10 but notable) are generally regarded as having the most mature governance and “Responsible AI” toolkits for highly regulated industries.

Frequently Asked Questions (FAQs)

1. What is the main difference between DevOps and MLOps?

DevOps focuses on the lifecycle of code and software, prioritizing continuous integration and delivery. MLOps adds two new variables: data and models. MLOps must manage data versioning and model performance over time (drift), which traditional DevOps tools are not designed to handle.

2. Can I use these platforms for Large Language Models (LLMs)?

Yes, the modern MLOps landscape has evolved into “LLMOps.” Platforms like SageMaker, Vertex AI, and Weights & Biases now include specialized features for fine-tuning foundation models, managing prompt versions, and evaluating LLM outputs for safety and accuracy.

3. Do I need to be an expert in Kubernetes to use MLOps platforms?

It depends on the tool. Managed services like SageMaker and Vertex AI largely hide Kubernetes from the user. However, if you choose an open-source framework like Kubeflow, you will need a strong Kubernetes engineering team to manage the deployment and scaling.

4. How much do MLOps platforms typically cost?

The cost varies widely. Open-source tools like ClearML are free to use, although you still pay for the infrastructure they run on. Cloud-native platforms like SageMaker can cost anywhere from $100 to $10,000+ per month, depending on how many models you are training, the type of GPUs you are using, and the volume of data you are processing.

5. What is a Feature Store and why do I need one?

A Feature Store is a central place to store and manage features (the inputs for your ML models). It ensures that the exact same data transformation logic used during training is also used during real-time inference, preventing “training-serving skew” which is a common cause of model failure.
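The key idea is that a single feature definition feeds both the training path and the serving path. The toy sketch below makes some assumptions: `build_features`, its two features, and `ToyFeatureStore` are hypothetical. A production feature store would add point-in-time-correct offline retrieval and a dedicated low-latency online database.

```python
import math
from datetime import date


def build_features(raw: dict) -> dict:
    """The single feature definition used by BOTH training and serving.

    The two features below are hypothetical examples.
    """
    return {
        "log_spend": math.log1p(raw["total_spend"]),
        "days_since_signup": (raw["as_of"] - raw["signup_date"]).days,
    }


class ToyFeatureStore:
    """Materializes features per entity so online reads match offline logic."""

    def __init__(self) -> None:
        self._online: dict[str, dict] = {}

    def materialize(self, entity_id: str, raw: dict) -> dict:
        features = build_features(raw)  # same code path as training
        self._online[entity_id] = features
        return features

    def read_online(self, entity_id: str) -> dict:
        return self._online[entity_id]  # fast key lookup at inference time


# Training builds rows with build_features; serving reads the materialized copy.
raw = {"total_spend": 99.0, "signup_date": date(2024, 1, 1), "as_of": date(2024, 3, 1)}
store = ToyFeatureStore()
store.materialize("user-42", raw)
training_row = build_features(raw)
print(store.read_online("user-42") == training_row)  # True
```

Because training rows and online lookups both come from `build_features`, any change to a transformation reaches both paths at once. This is what keeps training-serving skew out.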

6. Is it better to build our own MLOps stack or buy a platform?

Generally, unless you are a large tech company with highly unique requirements, it is better to buy or use an existing platform. Building a custom stack from scratch often leads to a “franken-system” that is difficult to maintain and lacks the governance and security of established platforms.

7. How long does it take to implement an MLOps platform?

A managed cloud service like Vertex AI or SageMaker can be turned on in minutes, but establishing a full production pipeline usually takes 1 to 3 months of engineering effort. Self-hosted installations of Kubeflow can take several months of coordination between DevOps and Data teams.

8. What is “Model Drift” and how do platforms help?

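Model drift is the decline in a model’s performance over time. It happens either because the input data distribution shifts (data drift) or because the relationship between the model’s inputs and outputs changes (concept drift), for example when consumer behavior changes during a holiday. MLOps platforms help by monitoring production data and alerting the team when input distributions diverge from the training baseline or when measured accuracy drops, often triggering a retraining pipeline.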
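A common way to flag a shift in a numeric input is the Population Stability Index (PSI). It compares the binned distribution of production data against a training baseline as the sum over bins of (a_i - e_i) * ln(a_i / e_i), where e_i and a_i are the baseline and production shares in bin i. The minimal sketch below assumes equal-width bins taken from the baseline's range. The bin count and the `eps` smoothing are assumptions. Commonly cited thresholds (roughly 0.1 for a minor shift and 0.25 for a major one) are heuristics, not standards.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10,
        eps: float = 1e-6) -> float:
    """Population Stability Index of `actual` (production) vs `expected` (baseline)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Equal-width bins from the baseline; out-of-range values are
            # clamped into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(1000)]  # stand-in for a training feature
shifted = [v + 5 for v in baseline]        # production data moved upward
print(round(psi(baseline, baseline), 6))   # 0.0
print(psi(baseline, shifted) > 0.25)       # True
```

Comparing a sample with itself gives 0. A sample shifted by half its range scores far above either threshold, which is the kind of signal that would raise an alert.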

9. Can I run MLOps platforms on my own servers?

Yes, platforms like Kubeflow, ClearML, and MLRun are designed to be “cloud-agnostic” and can run in your own data center. Kubeflow requires a Kubernetes environment, while the ClearML server can also be self-hosted with Docker Compose. This is essential for companies with strict data sovereignty requirements.

10. How do MLOps platforms help with AI regulation and ethics?

Modern platforms include “Model Cards” and “Governance Hubs” that automatically track the lineage of data and code. They also include bias-detection toolkits that help ensure your models are fair and explainable, which is increasingly required by laws such as the EU AI Act.

Conclusion

The transition from experimental AI to production-grade AI is extremely difficult without a standardized MLOps platform. Whether you choose the massive scale and integration of the major cloud providers like Amazon, Google, and Microsoft, or the developer-first agility of Weights & Biases and ClearML, the goal remains the same: reliability, reproducibility, and scalability.

The “best” platform is ultimately determined by your existing infrastructure and the technical maturity of your team. If you are already all-in on a specific cloud, their native tools provide the path of least resistance. However, if you require extreme flexibility or want to avoid vendor lock-in, the open-source ecosystem has reached a level of maturity that can rival any commercial offering. Your next step should be to audit your current “model-to-production” time and run a pilot with one of these platforms to see how much of that manual labor can be automated.