Introduction

LLM Orchestration Frameworks are specialized software libraries and platforms designed to manage the complex workflows required to build production-ready applications powered by Large Language Models. In plain English, if an LLM is the “brain,” an orchestration framework acts as the “nervous system.” It connects the model to external data sources, memory, APIs, and other software components, allowing developers to create “chains” or “graphs” of logic that go far beyond a simple chat interface.

These platforms have become essential because raw models, while powerful, are stateless and isolated. They do not know about your private company data, they cannot browse the live web without assistance, and they cannot perform multi-step reasoning tasks reliably on their own. Orchestration frameworks provide the scaffolding to implement Retrieval-Augmented Generation (RAG), autonomous agents, and stateful memory systems, transforming a text-generator into a functional business tool.
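
To make the RAG idea above concrete, here is a minimal sketch in plain Python. It is not any framework's API: the `DOCUMENTS` list, the bag-of-words `embed` function, and the prompt wording are all illustrative assumptions standing in for a real document store, embedding model, and prompt template.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory "document repository"; a real system would use a vector store.
DOCUMENTS = [
    "Refunds are processed within 5 business days of receiving the returned item.",
    "Our office is closed on public holidays.",
    "Employees accrue 1.5 vacation days per month of service.",
]


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; stands in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 1) -> list:
    """Return the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(DOCUMENTS, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str) -> str:
    """Augment the question with retrieved context before it goes to the LLM."""
    context = "\n".join(retrieve(question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


prompt = build_prompt("How long do refunds take?")
print(prompt)
```

The orchestration framework's job is essentially to replace each toy piece here (loading, embedding, ranking, prompt assembly) with a production-grade component.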

Real-world use cases:

- Enterprise Search Engines: Connecting internal document repositories to an LLM to provide cited, accurate answers to employee queries.

- Autonomous Customer Support Agents: Creating systems that can check order statuses, process returns, and update databases without human intervention.

- Automated Content Pipelines: Building workflows that research a topic, draft a technical article, generate social media snippets, and format the output for a CMS.

- Code Generation Assistants: Integrating with IDEs and repositories to suggest context-aware code completions based on a specific codebase’s architecture.

- Financial Data Analysis: Ingesting live market feeds and historical spreadsheets to generate real-time risk assessments and executive summaries.

Buyer evaluation criteria:

- Framework Flexibility: The ability to swap different models (e.g., switching from OpenAI to Anthropic) without rewriting the entire application.

- Data Connector Density: The number of pre-built integrations for databases, cloud storage, and SaaS applications.

- State Management: How well the tool handles long-term memory and conversational context across multiple user sessions.

- Agentic Capabilities: Support for autonomous reasoning and the ability for the model to use tools (API calling).

- Observability & Debugging: Built-in tools to trace every step of a chain to find where a logic error or “hallucination” occurred.

- Scalability: The framework’s overhead and performance when handling thousands of concurrent user requests.

- Community Momentum: The frequency of updates and the availability of third-party plugins or documentation.

- Production Readiness: Features like caching, rate-limiting, and robust error handling for API failures.

Best for: AI engineers, full-stack developers, and enterprise architects who are moving past simple prompts into building complex, data-driven applications. It is ideal for teams that need to integrate AI into existing software ecosystems.

Not ideal for: Organizations that only need a basic chatbot interface like ChatGPT or Claude for simple tasks, where the overhead of an orchestration layer would add unnecessary complexity and cost.

Key Trends in LLM Orchestration Frameworks

- Shift Toward Agentic Workflows: Moving from linear “chains” to autonomous “agents” that can decide which tools to use and how to handle unexpected errors.

- Stateful and Cyclic Graphs: The rise of frameworks that support cycles and complex state management, allowing for iterative refinement of outputs.

- Low-Code Visualization: A growing trend of “drag-and-drop” canvases that allow non-developers to visualize and build LLM pipelines.

- Local-First Execution: Better support for running open-source models locally using frameworks optimized for hardware like Apple Silicon or NVIDIA edge devices.

- RAG to Agentic RAG: Evolution from simple document retrieval to agents that can evaluate the quality of retrieved data and re-search if the information is insufficient.

- Standardization of Interfaces: The industry is moving toward standardized ways to represent “prompts” and “tools” to make frameworks more interchangeable.

- Multi-Agent Coordination: Specialized frameworks designed to let different “specialized agents” (e.g., a coder agent and a reviewer agent) collaborate on a single task.

- Observability First: Integrating deep tracing and evaluation metrics directly into the development loop to measure model accuracy and latency.

How We Selected These Tools (Methodology)

- Market Adoption: We prioritized frameworks with significant GitHub stars, active developer forks, and documented enterprise use cases.

- Feature Completeness: Selection was based on the ability to handle the full lifecycle from prompt management to production deployment.

- Developer Experience: We evaluated the quality of the SDKs, documentation, and the “time-to-hello-world” for new engineers.

- Interoperability: Preference was given to tools that are model-agnostic and support a wide range of vector databases and embedding providers.

- Innovation Velocity: We looked for projects that are updated weekly to keep pace with the rapidly changing AI landscape.

- Scalability Signals: We chose tools that have demonstrated performance in high-concurrency environments and large-scale data processing.

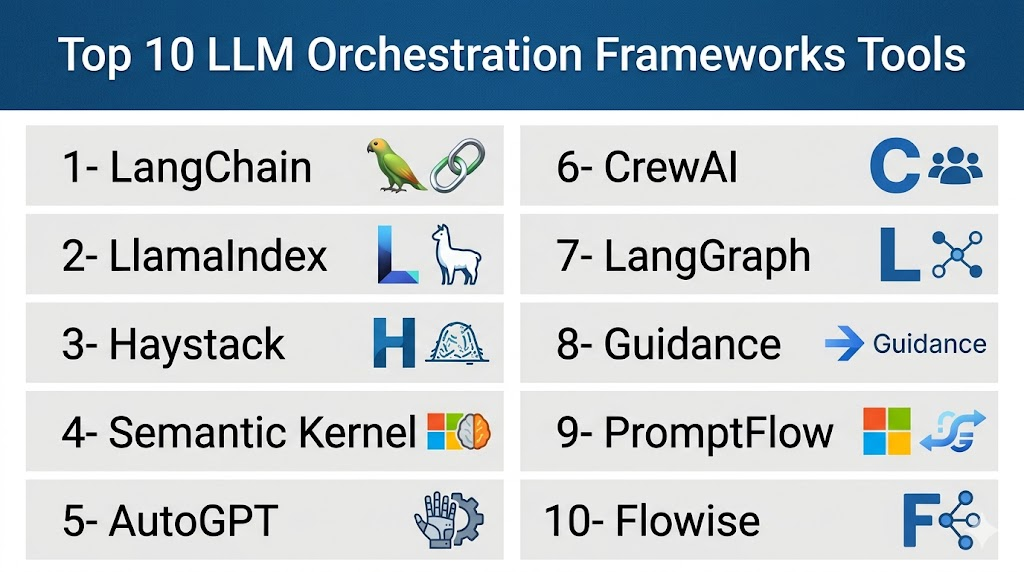

Top 10 LLM Orchestration Frameworks

#1 — LangChain

Short description:

LangChain is one of the most widely used orchestration frameworks, designed to simplify the creation of applications using LLMs through “chains.” It provides a massive library of modular components for prompts, models, and memory. It is built for developers who need a comprehensive, highly flexible toolkit to build anything from a simple Q&A bot to a complex, multi-tool autonomous agent.

Key Features

- Modular Components: Highly granular abstractions for LLMs, vector stores, and document loaders.

- LangChain Expression Language (LCEL): A declarative way to compose chains, providing built-in support for streaming and async.

- Vast Integration Library: Hundreds of connectors for virtually every database, API, and model provider.

- LangSmith Integration: Seamless connection to an observability platform for tracing and evaluating chains.

- Built-in Templates: Pre-configured architectures for common tasks like RAG or summarization.

Pros

- One of the largest ecosystems and communities of any LLM framework.

- Model-agnostic design allows for easy swapping of backend LLMs.

- Supports both Python and JavaScript/TypeScript.

Cons

- The library has become very large and can feel “bloated” or overly abstract for simple tasks.

- Breaking changes can occur due to the rapid pace of updates.

Platforms / Deployment

- Windows / macOS / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

- SSO/SAML (via LangSmith), RBAC (via LangGraph Cloud), Encryption.

- SOC 2 (LangSmith), GDPR.

Integrations & Ecosystem

LangChain acts as the central hub for the modern AI stack, connecting to almost every tool in the market.

- OpenAI, Anthropic, Google Gemini, Ollama.

- Pinecone, Milvus, Weaviate, Chroma.

- Serper, Slack, Google Drive, Notion.

Support & Community

Extensive documentation, a massive YouTube tutorial ecosystem, and a very active GitHub and Discord community.

#2 — LlamaIndex

Short description:

LlamaIndex (formerly GPT Index) is a specialized framework focused on connecting custom data sources to LLMs. While LangChain is a general-purpose orchestrator, LlamaIndex excels at data ingestion, indexing, and retrieval. It is the premier choice for developers building RAG systems that need to handle complex, large-scale, or unstructured data across various formats.

Key Features

- Data Connectors (LlamaHub): Over a hundred loaders for files, APIs, and databases.

- Advanced Indexing: Support for vector, tree, and keyword-based indexing structures.

- Query Engines: Powerful interfaces to query your data and get natural language responses.

- Data Agents: Knowledge-augmented agents that can use tools to research and reason over data.

- Evaluators: Built-in tools to measure the quality of retrieved data and the final response.

Pros

- Unrivaled performance and ease-of-use for RAG-specific use cases.

- Strong focus on data privacy and local data handling.

- Deeply integrated with the data science ecosystem (Pandas, etc.).

Cons

- Not as versatile as LangChain for non-data-centric orchestration tasks.

- The documentation can sometimes be difficult to navigate due to the volume of features.

Platforms / Deployment

- Windows / macOS / Linux

- Cloud / Self-hosted

Security & Compliance

- RBAC, Encryption, Data-masking support.

- Not publicly stated.

Integrations & Ecosystem

Centered around the “LlamaHub” ecosystem which serves as a repository for data connectors.

- Snowflake, BigQuery, MongoDB.

- S3, Google Drive, Box.

- Integrates with LangChain for complex hybrid workflows.

Support & Community

Very strong community of data engineers and AI researchers. High-quality documentation and active Slack support.

#3 — Haystack

Short description:

Haystack, developed by Deepset, is an open-source NLP framework designed for building production-ready pipelines for search and question answering. It is built with an enterprise-first mindset, prioritizing modularity, scalability, and ease of deployment. It is ideal for organizations that want to build “search-as-a-service” or high-performance RAG applications with a clean, well-defined architecture.

Key Features

- Pipeline 2.0: A modern, graph-based architecture for building modular and reusable workflows.

- Document Stores: Abstractions for various databases like Elasticsearch, OpenSearch, and Pinecone.

- Pre-built Components: Ready-to-use building blocks (called nodes in Haystack 1.x) for summarization, translation, and ranking.

- REST API Integration: Built-in support to turn any Haystack pipeline into a production API endpoint.

- Metadata Filtering: Powerful tools to filter and narrow down search results based on structured data.

Pros

- Excellent engineering standards and a clean, Pythonic API.

- Highly scalable and designed for production environments from the start.

- Superior support for hybrid search (combining keyword and vector search).

Cons

- Smaller ecosystem of third-party integrations compared to LangChain.

- Can be overkill for very small, experimental projects.

Platforms / Deployment

- Windows / macOS / Linux

- Cloud / Self-hosted / Kubernetes

Security & Compliance

- MFA, SSO support, Audit logging.

- SOC 2, GDPR (via commercial offerings).

Integrations & Ecosystem

Focused on enterprise-grade search and data storage providers.

- Elasticsearch, OpenSearch, Milvus.

- Hugging Face, OpenAI, Cohere.

- Ray for distributed computing.

Support & Community

Strong enterprise backing. Documentation is exceptionally clear, and the core team is very active on Discord and GitHub.

#4 — Semantic Kernel

Short description:

Semantic Kernel is Microsoft’s open-source SDK that lets you easily combine conventional programming languages (like C#, Python, and Java) with LLMs. It is designed to be the “enterprise-grade” orchestrator, focusing on safety, reliability, and integration with professional software development lifecycles. It is the natural choice for .NET developers and enterprises already using the Microsoft stack.

Key Features

- Planners: Dynamic agents that can automatically create a plan to achieve a goal by combining functions.

- Connectors: Pre-built modules to connect to various AI models and vector databases.

- Native Functions: Allows the LLM to call existing code written in C#, Python, or Java.

- Memory: Built-in abstractions for managing short-term and long-term semantic memory.

- Stepwise Planning: High-level reasoning for complex multi-step tasks.

Pros

- The best choice for .NET and Java enterprise environments.

- Strong focus on “Responsible AI” and secure orchestration.

- Very lightweight and easy to integrate into existing professional applications.

Cons

- Python and Java support, while growing, often lags behind the C# implementation.

- The community is smaller than the Python-heavy LangChain ecosystem.

Platforms / Deployment

- Windows / macOS / Linux / Azure

- Cloud / Self-hosted / Hybrid

Security & Compliance

- Microsoft Entra ID (formerly Azure Active Directory), MFA, RBAC, Managed Identities.

- ISO 27001, SOC 2, HIPAA (via Azure).

Integrations & Ecosystem

Deeply integrated with the Microsoft Azure AI and Power Platform ecosystem.

- Azure OpenAI, Hugging Face.

- Azure AI Search, Pinecone, Qdrant.

- Microsoft 365 and Teams.

Support & Community

Backed by Microsoft’s engineering teams. Professional support via Azure agreements and an active GitHub community.

#5 — AutoGPT

Short description:

AutoGPT is an experimental, open-source application that showcases the capabilities of the GPT-4 model. It is designed as an autonomous AI agent that can perform tasks by breaking them down into sub-tasks and using the internet and other tools. While often seen as a demo, its underlying architecture has sparked a massive trend in autonomous orchestration and task-based AI.

Key Features

- Autonomous Iteration: The agent can self-prompt and reason until a goal is achieved.

- Internet Access: Built-in capabilities for web searching and information gathering.

- File Management: Can read, write, and execute files on the local system.

- Memory Management: Short-term and long-term memory via vector database integration.

- Plugin System: Expandable architecture to add new capabilities like image generation or email.

Pros

- The “gold standard” for understanding autonomous agent logic.

- Massive community of experimenters pushing the boundaries of AI agency.

- Completely open-source and free to use.

Cons

- Can be expensive to run due to the high volume of recursive API calls.

- Often gets stuck in “logic loops” without manual intervention.

Platforms / Deployment

- Windows / macOS / Linux / Docker

- Self-hosted

Security & Compliance

- Not publicly stated (high risk due to autonomous file execution).

Integrations & Ecosystem

Relies on a wide variety of third-party APIs for its agency.

- Google Search, DuckDuckGo.

- Redis, Milvus, Weaviate.

- Twitter and Email plugins.

Support & Community

One of the most starred repositories on GitHub. Support is purely community-driven through Discord and GitHub issues.

#6 — CrewAI

Short description:

CrewAI is a framework for orchestrating role-playing, autonomous AI agents. Unlike LangChain which focuses on components, CrewAI focuses on “teams.” You define a crew of agents (e.g., a Researcher, a Writer, and a Reviewer), assign them tasks, and they work together to achieve a goal. It is built for developers who need to implement complex, multi-agent collaborative workflows.

Key Features

- Role-Based Agents: Define specific personas with unique goals and backstories.

- Task Management: Orchestrate how tasks are passed between agents.

- Process Driven: Supports sequential and hierarchical processes; a consensual process is listed in the project documentation as planned.

- Tool Usage: Agents can be assigned specific LangChain or custom tools.

- Memory and Caching: Prevents redundant API calls by caching agent results.

Pros

- Very intuitive for modeling human-like team dynamics.

- Easy to set up complex collaborative tasks with minimal code.

- High level of flexibility in how agents interact.

Cons

- Multi-agent systems are inherently slower and more expensive than single-chain systems.

- Requires careful prompting to prevent agents from arguing or hallucinating in a loop.

Platforms / Deployment

- Windows / macOS / Linux

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated.

Integrations & Ecosystem

Originally built on top of LangChain, which is where many of its integrations come from; newer releases are described by the project as independent of LangChain.

- All LangChain tools and models.

- Supports local models via Ollama.

- Integrates with standard Python libraries.

Support & Community

Rapidly growing community. Excellent documentation and a very engaged creator who provides frequent updates.

#7 — LangGraph

Short description:

LangGraph is a library built on top of LangChain that enables building stateful, multi-actor applications with LLMs by using cyclic graphs. A standard LangChain chain is essentially a Directed Acyclic Graph (DAG), in which each step runs once and passes its output forward. LangGraph allows cycles, which are essential for agentic behavior where a model needs to repeat a step or wait for a specific condition.

Key Features

- Cyclic Workflows: Allows agents to loop back to previous steps for refinement.

- Persistence: Built-in support for saving and loading the state of a thread.

- Human-in-the-loop: Native support for interrupting a process to get human approval before continuing.

- Stateful Management: Granular control over the internal state of a multi-step process.

- Parallelism: Execute multiple nodes in the graph simultaneously.

Pros

- The most powerful way to build “production-grade” agents that require reliability.

- Superior handling of long-running, multi-session interactions.

- Deeply integrated with the existing LangChain ecosystem.

Cons

- Significantly higher learning curve than basic LangChain.

- Requires comfort with graph and state-machine concepts.

Platforms / Deployment

- Windows / macOS / Linux

- Cloud (via LangGraph Cloud) / Self-hosted

Security & Compliance

- RBAC, SSO, Audit logs (via LangGraph Cloud).

- SOC 2 (via LangSmith ecosystem).

Integrations & Ecosystem

Full access to the LangChain partner network.

- All LangChain models and vector stores.

- LangSmith for debugging and observability.

- Checkpointers for Redis and Postgres.

Support & Community

Backed by the LangChain core team. High-level technical documentation and community support via Discord.

#8 — Guidance

Short description:

Guidance is a programming paradigm developed by Microsoft Research that allows for better control of LLMs by interleaving generation, prompting, and logical control. It focuses on “constrained generation,” ensuring the model follows a specific format (like JSON or a specific template) every single time. It is designed for developers who need extreme precision and reliability in model outputs.

Key Features

- Token-Level Control: Allows for forcing the model to pick from a specific set of tokens.

- Interleaved Logic: Embed Python-like logic directly within the prompt template.

- Format Guarantees: Ensures output is valid JSON or matches a regex pattern perfectly.

- Speculative Decoding: Speeds up generation by predicting common tokens.

- Rich UI Components: Built-in visualization for the generation process.
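
The token-level control idea in the list above can be illustrated with a toy sketch. This is not Guidance's API; `constrained_pick` and the `scores` dictionary are hypothetical names, and the scores stand in for the per-token scores a model would produce at one decoding step.

```python
from typing import Dict, Iterable


def constrained_pick(scores: Dict[str, float], allowed: Iterable[str]) -> str:
    """Pick the highest-scoring token, considering only the allowed set."""
    allowed_set = set(allowed)
    # Mask out every candidate the template does not permit at this position.
    candidates = {tok: s for tok, s in scores.items() if tok in allowed_set}
    if not candidates:
        raise ValueError("no allowed token has a score")
    return max(candidates, key=candidates.get)


# Hypothetical model scores for the next token.
scores = {"Maybe": 2.1, "Sure": 1.9, "yes": 1.7, "no": 0.4}

# Unconstrained decoding would pick "Maybe"; the constraint forces a yes/no answer.
choice = constrained_pick(scores, allowed=["yes", "no"])
print(choice)  # yes
```

Because disallowed tokens are never candidates, the output is valid by construction rather than validated after the fact.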

Pros

- Unmatched reliability for getting structured data from LLMs.

- Significant performance improvements by reducing the tokens the model needs to “think” about.

- Works with both OpenAI-style APIs and local models.

Cons

- Not a full “orchestrator” in the sense of managing databases or tools.

- Requires a more technical understanding of how LLM tokens work.

Platforms / Deployment

- Windows / macOS / Linux / Jupyter

- Self-hosted / Local

Security & Compliance

- Not publicly stated.

Integrations & Ecosystem

Focused on the model-layer interaction.

- OpenAI, Hugging Face, Llama.cpp.

- Integrates with Python and Jupyter environments.

Support & Community

Strong backing from Microsoft Research. Community is primarily active on GitHub and research forums.

#9 — PromptFlow

Short description:

PromptFlow is a suite of development tools designed to streamline the entire development cycle of AI applications, from ideation to deployment. It is an Azure-native tool (now open-source) that provides a visual interface for building “flows” that connect LLMs, prompts, and Python code. It is built for teams that prioritize enterprise-grade engineering and observability.

Key Features

- Visual DAG Editor: Drag-and-drop interface for building and visualizing LLM flows.

- Batch Testing: Run large-scale evaluations on your flows using massive datasets.

- Built-in Evaluators: Standard metrics for grounding, relevance, and safety.

- Deployment Management: One-click deployment to Azure Managed Endpoints or Kubernetes.

- Unified Workspace: Shared environment for teams to collaborate on prompts and flows.

Pros

- The best visual editor for professional enterprise environments.

- Unrivaled integration with Azure’s security and monitoring tools.

- Excellent for data-driven evaluation and iterative improvement.

Cons

- While open-source, the best experience is locked behind the Azure ecosystem.

- Can feel heavy for developers who prefer a pure code-based workflow.

Platforms / Deployment

- Windows / macOS / Linux / Web

- Cloud (Azure) / Self-hosted / Kubernetes

Security & Compliance

- Entra ID, RBAC, Managed Identities, VNET isolation.

- ISO 27001, SOC 2, HIPAA, FedRAMP.

Integrations & Ecosystem

Centered around the Azure AI and Microsoft data stack.

- Azure OpenAI, Azure AI Search.

- CosmosDB, Blob Storage.

- Microsoft Fabric.

Support & Community

Professional support via Microsoft Azure. Active open-source community on GitHub.

#10 — Flowise

Short description:

Flowise is an open-source, low-code UI tool for building LLM applications using LangChain. It allows users to build complex chains and agents by dragging and dropping blocks on a canvas. It is designed for rapid prototyping and for teams where non-developers need to participate in the AI development process.

Key Features

- Drag & Drop Interface: Visual representation of LangChain components.

- API Endpoints: Instantly turn a visual flow into a usable REST API.

- Pre-built Market: Dozens of templates for RAG, memory-based bots, and tools.

- Custom Tooling: Create your own custom tools and nodes within the UI.

- Docker Support: Easy deployment to any cloud provider using containers.

Pros

- Extremely fast “time-to-prototype.”

- Excellent for visualizing complex logic that might be confusing in code.

- Completely free and open-source.

Cons

- Debugging complex logic errors in a visual interface can be more difficult than in code.

- Not as flexible as pure code for highly custom business logic.

Platforms / Deployment

- Windows / macOS / Linux / Docker

- Cloud / Self-hosted

Security & Compliance

- Basic Auth, RBAC.

- Not publicly stated.

Integrations & Ecosystem

Inherits the entire LangChain ecosystem of models and databases.

- OpenAI, Anthropic, Google, Hugging Face.

- Pinecone, Supabase, Redis, Postgres.

- WhatsApp, Slack, Discord integrations.

Support & Community

Very active and rapidly growing community. Excellent documentation and a high volume of community-contributed templates.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| LangChain | General Orchestration | Multi-platform | Hybrid | LCEL Expression Language | 4.6/5 |
| LlamaIndex | Data-heavy RAG | Multi-platform | Hybrid | LlamaHub Connectors | 4.7/5 |
| Haystack | Enterprise Search | Multi-platform | Hybrid | Pipeline 2.0 Graph | 4.5/5 |
| Semantic Kernel | .NET / Java Apps | Multi-platform | Hybrid | Stepwise Planners | 4.4/5 |
| AutoGPT | Agent Research | Docker / OS | Self-hosted | Autonomous Iteration | N/A |
| CrewAI | Collaborative Teams | Multi-platform | Hybrid | Role-Playing Agents | 4.6/5 |
| LangGraph | Stateful Agents | Multi-platform | Hybrid | Human-in-the-loop | 4.8/5 |
| Guidance | Format Precision | Multi-platform | Local | Token-Level Control | N/A |
| PromptFlow | Azure Enterprises | Web / OS | Cloud | Visual DAG Editor | 4.3/5 |
| Flowise | Rapid Prototyping | Docker / OS | Self-hosted | Low-Code UI | 4.5/5 |

Evaluation & Scoring of LLM Orchestration Frameworks

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| LangChain | 10 | 6 | 10 | 8 | 8 | 10 | 9 | 8.85 |
| LlamaIndex | 9 | 7 | 9 | 8 | 9 | 9 | 8 | 8.45 |
| Haystack | 9 | 7 | 8 | 9 | 9 | 8 | 8 | 8.30 |
| Semantic Kernel | 8 | 8 | 8 | 10 | 8 | 9 | 8 | 8.30 |
| AutoGPT | 6 | 4 | 7 | 2 | 5 | 5 | 10 | 5.85 |
| CrewAI | 8 | 9 | 9 | 7 | 7 | 8 | 9 | 8.25 |
| LangGraph | 10 | 4 | 10 | 9 | 9 | 9 | 8 | 8.50 |
| Guidance | 6 | 7 | 6 | 6 | 10 | 7 | 9 | 7.10 |
| PromptFlow | 9 | 8 | 9 | 10 | 8 | 9 | 7 | 8.55 |
| Flowise | 7 | 10 | 9 | 7 | 8 | 8 | 10 | 8.40 |

Scores are comparative. Interpretation: High scores in “Core” represent framework completeness. High “Ease” scores indicate accessibility for non-experts. High “Security” scores reflect enterprise-readiness and compliance support. Weighted total provides a balanced view for a typical enterprise buyer.
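
For readers who want to re-weight the criteria for their own priorities, the weighted total is a plain weighted average of the per-criterion scores. The sketch below uses the weights from the table header and two rows from the table; the dictionary keys and the `weighted_total` helper are illustrative names.

```python
# Weights from the scoring table header, as integer percentages.
WEIGHTS = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}

# Two rows copied from the scoring table.
SCORES = {
    "LangChain": {"core": 10, "ease": 6, "integrations": 10, "security": 8,
                  "performance": 8, "support": 10, "value": 9},
    "LlamaIndex": {"core": 9, "ease": 7, "integrations": 9, "security": 8,
                   "performance": 9, "support": 9, "value": 8},
}


def weighted_total(scores: dict, weights: dict = WEIGHTS) -> float:
    """Weighted average of 0-10 scores; weights must sum to 100 percent."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    return round(sum(scores[name] * w for name, w in weights.items()) / 100, 2)


totals = {tool: weighted_total(s) for tool, s in SCORES.items()}
print(totals)  # {'LangChain': 8.85, 'LlamaIndex': 8.45}
```

Changing a value in `WEIGHTS` (while keeping the sum at 100) shows how sensitive the ranking is to what a given team cares about.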

Which LLM Orchestration Framework Is Right for You?

Solo / Freelancer

If you are a solo developer building prototypes or niche applications, Flowise or CrewAI offer the best path to results. Flowise allows you to visualize your logic quickly, while CrewAI lets you set up impressive multi-agent workflows with very little code, making your solo projects appear much more sophisticated.

SMB

Small to medium businesses should look at LlamaIndex or LangChain. LlamaIndex is the winner if your primary goal is to search through company documents (RAG). LangChain is better if you are building a wider variety of AI-driven features across your product.

Mid-Market

For mid-sized companies with a strong engineering culture, Haystack is an excellent choice. Its clean API and production-first focus mean your data engineers won’t spend half their day fighting with abstractions. It scales well without the “bloat” that can sometimes plague larger frameworks.

Enterprise

Enterprises should prioritize Semantic Kernel or PromptFlow. Semantic Kernel is built for professional developers in .NET or Java environments and provides the governance large IT departments require. PromptFlow offers the best visual collaboration and evaluation tools for large teams working within the Azure ecosystem.

Budget vs Premium

Almost all these tools are open-source and free to start. The “premium” cost comes in the form of cloud hosting and observability tools. LangSmith and Azure PromptFlow are the premium tiers that add significant cost but provide the monitoring necessary for multi-million user applications.

Feature Depth vs Ease of Use

LangGraph and Haystack offer the most feature depth for complex applications, but require a significant investment in learning. Flowise is the easiest to use but has a lower ceiling for highly customized, logic-heavy business requirements.

Integrations & Scalability

LangChain has the broadest set of integrations. For scalability, Haystack is designed for high-throughput search workloads, and teams already on Databricks (which is not one of the ten tools reviewed here) can run LangChain through its MLflow integration for large analytical workloads.

Security & Compliance Needs

Semantic Kernel and PromptFlow are the clear winners here. Both are built by Microsoft, which understands the regulatory needs of Fortune 500 companies, and both can draw on Azure for the isolation, audit logs, and encryption that regulated industries require.

Frequently Asked Questions (FAQs)

1. What is the difference between an LLM and an orchestration framework?

An LLM like GPT-4 or Claude is the core engine that generates text and reasons based on its training data. An orchestration framework like LangChain is the infrastructure that connects that engine to your specific world—your databases, your files, and your business logic. Think of the LLM as a car’s engine and the framework as the car’s steering, chassis, and dashboard.

2. Do I need to know Python to use these orchestration frameworks?

The vast majority of the ecosystem is built on Python, so it is the most useful language to know. However, frameworks like LangChain have excellent JavaScript/TypeScript support, and Microsoft’s Semantic Kernel allows developers to work in C# and Java. If you don’t know any code, low-code tools like Flowise allow you to build workflows visually.

3. Why would I use LangGraph instead of standard LangChain?

Standard LangChain is great for linear paths (Task A -> Task B -> Task C). However, complex agents often need to loop back (e.g., if Task B fails, go back to Task A and try again). LangGraph is specifically designed to handle these “cyclic” workflows and manage the “state” or memory of the process as it moves through those loops.
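
The loop-back behavior described above can be sketched as a small state machine in plain Python. This is not LangGraph's API; the node names, the `State` dictionary, and the stand-in `draft` and `review` functions are assumptions chosen to show a conditional edge that cycles until a condition is met.

```python
from typing import Callable, Dict

State = Dict[str, object]


def draft(state: State) -> State:
    # Stand-in for an LLM call: each attempt produces a slightly longer draft.
    attempt = int(state["attempts"]) + 1
    return {**state, "attempts": attempt, "text": "detail " * attempt}


def review(state: State) -> State:
    # Stand-in for a quality check: approve once the draft has three or more words.
    approved = len(str(state["text"]).split()) >= 3
    return {**state, "approved": approved}


def route_after_review(state: State) -> str:
    # The conditional edge: finish, or loop back to "draft" (capped at 5 attempts).
    if state["approved"] or int(state["attempts"]) >= 5:
        return "END"
    return "draft"


NODES: Dict[str, Callable[[State], State]] = {"draft": draft, "review": review}
EDGES: Dict[str, Callable[[State], str]] = {
    "draft": lambda s: "review",
    "review": route_after_review,
}


def run(state: State, start: str = "draft") -> State:
    """Walk the graph, carrying state along, until a node routes to END."""
    node = start
    while node != "END":
        state = NODES[node](state)
        node = EDGES[node](state)
    return state


final = run({"attempts": 0, "text": "", "approved": False})
print(final["attempts"], final["approved"])  # 3 True
```

The attempt cap in `route_after_review` is the kind of guard that keeps a cyclic workflow from looping forever.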

4. Can these frameworks help me reduce my API costs?

Yes, many frameworks include features like caching, which stores the answers to common questions so you don’t have to pay the model to answer them twice. They also allow for “LLM routing,” where a cheaper model (like GPT-3.5 or Llama 3) handles simple tasks and only calls the expensive model (like GPT-4) for difficult reasoning.
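
As a rough illustration of both ideas, the sketch below combines an exact-match cache with a naive router. The two model functions are stubs, and the word-count heuristic in `_pick` is an assumption made for the example; real routers typically use a classifier or a confidence signal instead.

```python
from typing import Dict


def cheap_model(prompt: str) -> str:
    return f"[cheap] {prompt}"        # stub for a low-cost model call


def expensive_model(prompt: str) -> str:
    return f"[expensive] {prompt}"    # stub for a high-cost model call


class CachedRouter:
    def __init__(self, max_cheap_words: int = 12) -> None:
        self.cache: Dict[str, str] = {}
        self.calls = {"cheap": 0, "expensive": 0}
        self.max_cheap_words = max_cheap_words

    def _pick(self, prompt: str) -> str:
        # Naive heuristic (an assumption): short prompts count as "simple".
        return "cheap" if len(prompt.split()) <= self.max_cheap_words else "expensive"

    def ask(self, prompt: str) -> str:
        key = prompt.strip().lower()
        if key in self.cache:         # cache hit: no model call, no cost
            return self.cache[key]
        tier = self._pick(prompt)
        self.calls[tier] += 1
        model = cheap_model if tier == "cheap" else expensive_model
        answer = model(prompt)
        self.cache[key] = answer
        return answer


router = CachedRouter()
first = router.ask("What are your opening hours?")
second = router.ask("what are your opening hours?  ")  # same key after normalization
print(router.calls)  # {'cheap': 1, 'expensive': 0}
```

Exact-match caching only helps with repeated questions; matching paraphrases would need a similarity-based cache.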

5. Is my data sent to the framework’s servers when I use them?

If you use open-source frameworks like LangChain, Haystack, or LlamaIndex locally, your data stays on your infrastructure. The framework is just code running on your machine. However, the data will still be sent to the LLM provider (like OpenAI) unless you are running a completely local model via a tool like Ollama or Hugging Face.

6. What is “Human-in-the-loop” and why is it important?

“Human-in-the-loop” is a feature in frameworks like LangGraph and PromptFlow that allows an AI process to pause and wait for a human to approve or edit a step before continuing. This is critical for high-stakes tasks like sending an email to a client, executing a financial transaction, or publishing code to a production server.
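
A minimal sketch of the pattern, independent of any framework: the risky step is wrapped in a `PendingAction`, and an `ApprovalGate` only executes it if an approval callback says yes. All names here are hypothetical, and in a real application the callback would surface the request to a person rather than return a fixed answer.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class PendingAction:
    description: str
    execute: Callable[[], str]


@dataclass
class ApprovalGate:
    log: List[str] = field(default_factory=list)

    def run(self, action: PendingAction, approve: Callable[[str], bool]) -> str:
        """Execute the action only if the approver accepts its description."""
        if not approve(action.description):
            self.log.append(f"rejected: {action.description}")
            return "skipped"
        self.log.append(f"approved: {action.description}")
        return action.execute()


gate = ApprovalGate()
send_email = PendingAction("send refund email to client", lambda: "email sent")

# Stand-in approvers; a real one would wait for a human decision.
approved_result = gate.run(send_email, approve=lambda description: True)
rejected_result = gate.run(send_email, approve=lambda description: False)
print(approved_result, rejected_result)  # email sent skipped
```

Keeping a log of approvals and rejections also gives the audit trail that high-stakes workflows usually need.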

7. How do these tools handle long-term memory?

Frameworks manage memory by storing previous parts of a conversation in a database (like Redis or Postgres). When a user asks a follow-up question, the framework retrieves the relevant “past memories” and feeds them to the LLM so it has context. Specialized vector databases allow the agent to remember facts from weeks or months ago.
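
The short-term half of this can be sketched as a per-session sliding window. The `SessionMemory` class below is a hypothetical in-process stand-in for what a framework would persist in a store such as Redis or Postgres; long-term recall over a vector database is not shown.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Tuple


class SessionMemory:
    """Keeps the most recent turns per session and renders them as prompt context."""

    def __init__(self, max_turns: int = 3) -> None:
        self._turns: Dict[str, Deque[Tuple[str, str]]] = defaultdict(
            lambda: deque(maxlen=max_turns)  # oldest turns are dropped automatically
        )

    def add(self, session_id: str, role: str, text: str) -> None:
        self._turns[session_id].append((role, text))

    def context(self, session_id: str) -> str:
        return "\n".join(f"{role}: {text}" for role, text in self._turns[session_id])


memory = SessionMemory(max_turns=3)
memory.add("s1", "user", "My order number is 1042.")
memory.add("s1", "assistant", "Thanks, I found order 1042.")
memory.add("s1", "user", "When will it ship?")
memory.add("s1", "assistant", "It ships tomorrow.")

# Only the last three turns remain; this text would be prepended to the next prompt.
print(memory.context("s1"))
```

The window size is the trade-off: a larger window keeps more context but costs more tokens on every call.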

8. Can I switch from OpenAI to an open-source model easily with these tools?

Yes, that is one of the primary benefits. These frameworks use “abstractions,” meaning you write code to talk to a generic “Model” class. You can then simply change one line of configuration to point that class to an open-source model running on your own server instead of the OpenAI API.
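
The abstraction idea can be sketched without any particular framework. Below, `ChatModel` is a hypothetical interface, and `HostedModel` and `LocalModel` are stubs that return labeled strings where a real implementation would call a provider API or a local inference server.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    """The generic interface that application code is written against."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class HostedModel:
    name: str

    def complete(self, prompt: str) -> str:
        # A real implementation would call a hosted provider's HTTP API here.
        return f"{self.name} (hosted): {prompt}"


@dataclass
class LocalModel:
    name: str

    def complete(self, prompt: str) -> str:
        # A real implementation would call a model running on your own server.
        return f"{self.name} (local): {prompt}"


REGISTRY = {"hosted": HostedModel("hosted-model"), "local": LocalModel("local-model")}


def summarize(model: ChatModel, text: str) -> str:
    """Application logic that never mentions a specific provider."""
    return model.complete(f"Summarize: {text}")


CONFIG = {"provider": "hosted"}  # change to "local" to swap the backend
result = summarize(REGISTRY[CONFIG["provider"]], "quarterly report")
print(result)  # hosted-model (hosted): Summarize: quarterly report
```

In practice the swap is rarely entirely free, since prompts tuned for one model often need adjusting for another, but the application code itself does not change.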

9. What is the biggest challenge when using an orchestration framework?

The biggest challenge is “abstraction complexity.” Because these frameworks try to handle everything, they can sometimes make simple tasks feel more complicated than they need to be. Developers often find themselves debugging the framework’s internal logic rather than the AI’s response, which is why choosing a framework that matches your team’s technical skill is vital.

10. Are these frameworks ready for large-scale enterprise production?

Most of these frameworks are being used in production today, but they require careful engineering. You must implement your own rate-limiting, error-handling for API timeouts, and observability tools to ensure the system is reliable. Frameworks like PromptFlow and Haystack are specifically built with these production requirements in mind.
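
As one example of that engineering, here is a minimal retry wrapper with exponential backoff for timeouts. The function names, the default delays, and the simulated `flaky_llm_call` are assumptions for illustration; production code would usually add jitter and handle provider-specific rate-limit errors as well.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.01,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on TimeoutError with a delay that doubles each time."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TimeoutError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("loop always returns or raises")


attempts = {"n": 0}


def flaky_llm_call() -> str:
    # Simulated API call that times out twice before succeeding.
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TimeoutError("simulated API timeout")
    return "ok"


delays: List[float] = []
result = call_with_retries(flaky_llm_call, sleep=delays.append)  # record delays instead of sleeping
print(result, len(delays))  # ok 2
```

Passing `sleep` as a parameter keeps the wrapper easy to test without real waiting.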

Conclusion

The era of the “simple prompt” is giving way to the era of the “complex system.” LLM Orchestration Frameworks are the essential tools that allow developers to build these systems with reliability and scale. While LangChain remains the dominant force due to its massive ecosystem, specialized tools like LlamaIndex for data-heavy tasks and LangGraph for iterative agents are carving out critical niches.

The “best” framework depends entirely on your specific destination. If you are building a search-heavy application for a large company, Haystack or LlamaIndex is likely your best partner. If you are a .NET shop, Semantic Kernel is the clear choice. Regardless of the tool, the key is to focus on observability and evaluation; in a world of probabilistic AI models, being able to trace and measure your “nervous system” is the only way to ensure your application remains sane and effective. Start by building a simple RAG pipeline, get comfortable with the abstractions, and gradually layer in agency and state as your project’s needs evolve.