Introduction

Retrieval-Augmented Generation (RAG) tools combine large language models with external knowledge retrieval systems to produce more accurate, context-aware, and up-to-date responses. By leveraging external data sources, these tools improve the quality of AI-generated content, reduce hallucinations, and enable organizations to integrate proprietary knowledge into generative AI workflows.
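The retrieve-then-generate loop described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the document list, the token-overlap scorer (a stand-in for real embedding similarity), and the `fake_llm` placeholder are all assumptions made for the example.

```python
import re
from collections import Counter

# Toy knowledge base (hypothetical); in practice this comes from a document store.
DOCUMENTS = [
    "Refunds are processed within 5 business days of approval.",
    "Support is available by email from Monday to Friday.",
    "Enterprise plans include single sign-on and audit logs.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def overlap_score(query: str, doc: str) -> int:
    """Count tokens shared by query and document (stand-in for embedding similarity)."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    return sum((q & d).values())


def retrieve(query: str, k: int = 2) -> list[str]:
    """Return the k documents that share the most tokens with the query."""
    ranked = sorted(DOCUMENTS, key=lambda doc: overlap_score(query, doc), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Ground the model by placing the retrieved passages ahead of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


def fake_llm(prompt: str) -> str:
    """Hypothetical placeholder for a real model call."""
    return f"[model response to a {len(prompt)}-character prompt]"


def answer(query: str) -> str:
    """Retrieve supporting passages, then generate from the grounded prompt."""
    return fake_llm(build_prompt(query, retrieve(query)))


if __name__ == "__main__":
    print(build_prompt("How long do refunds take?", retrieve("How long do refunds take?", k=1)))
```

Swapping `overlap_score` for an embedding model and `fake_llm` for a hosted or local LLM turns the sketch into the pattern the tools below package up.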

RAG tooling has become essential as businesses increasingly adopt generative AI for customer support, content creation, and knowledge management. Using RAG, teams can ensure AI outputs are grounded in relevant data while maintaining the speed and flexibility of LLMs.

Real-world use cases include:

- Enterprise knowledge retrieval for support agents or chatbots.

- Content generation grounded in proprietary data sources.

- Research assistance combining LLMs with domain-specific databases.

- Question-answering systems with updated and verified information.

- Multi-modal AI workflows linking documents, tables, and structured knowledge graphs.

Evaluation Criteria for Buyers:

- Core retrieval and generation capabilities

- Model and knowledge source flexibility

- Ease of integration with existing data pipelines

- Latency and scalability of query responses

- Security and compliance for sensitive data

- Monitoring and observability for AI outputs

- Multi-format data support (text, PDF, databases, vector stores)

- Cost and resource efficiency

- Extensibility via APIs or SDKs

- Vendor support and documentation

Best for: AI engineers, data scientists, knowledge managers, enterprise developers, and organizations building AI-powered search, question-answering, or content systems.

Not ideal for: Projects without a sizable knowledge base, or casual experiments where calling an LLM directly is enough.

Key Trends in RAG Tooling

- Integration with vector databases and knowledge graphs for enhanced retrieval.

- Multi-LLM orchestration for combining generation with retrieval strategies.

- Fine-tuning and embedding workflows for domain-specific knowledge.

- Cloud-native, scalable deployments supporting enterprise-scale queries.

- Hybrid retrieval combining local, cloud, and third-party datasets.

- Observability and monitoring frameworks to track model outputs.

- Embedding-based similarity search gaining adoption for accurate retrieval.

- Support for multi-modal RAG including text, images, and structured data.

- Open-source and managed solutions coexisting to suit diverse enterprise needs.

How We Selected These Tools (Methodology)

- Market adoption and recognition among enterprises and developers.

- Feature completeness in retrieval, generation, embedding support, and orchestration.

- Performance metrics including latency, throughput, and reliability.

- Security and compliance features, especially for sensitive or proprietary data.

- Integration with popular ML frameworks, vector stores, and cloud services.

- Scalability across small projects, SMBs, and large enterprises.

- Vendor support, documentation quality, and community engagement.

- Observability, monitoring, and evaluation features for generated content.

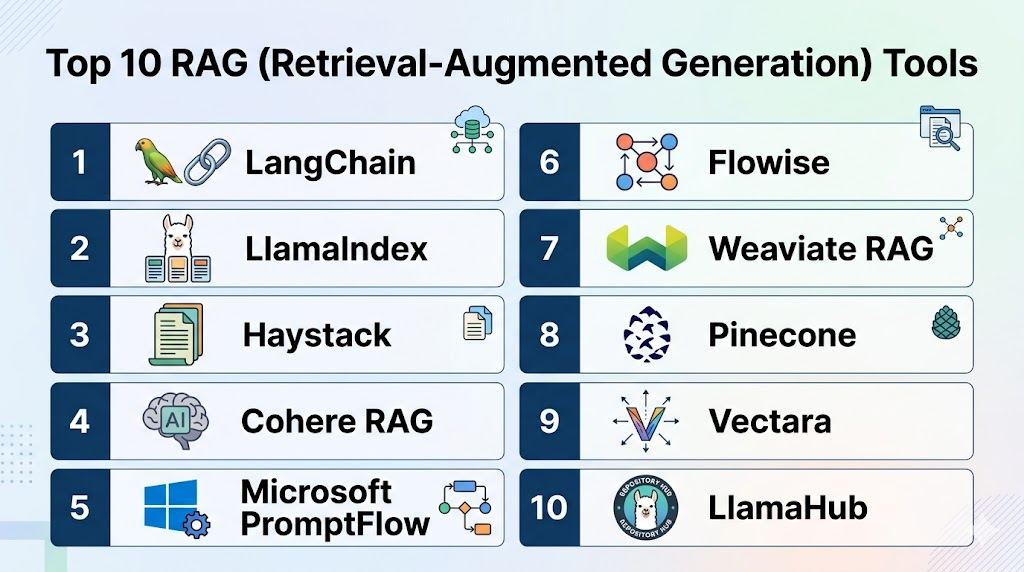

Top 10 RAG (Retrieval-Augmented Generation) Tools

#1 — LangChain

Short description:

LangChain is a developer-focused RAG framework enabling AI apps to connect LLMs with external knowledge sources, orchestrate workflows, and manage data retrieval pipelines.

Key Features

- LLM orchestration with retrieval strategies

- Integration with vector stores and databases

- Multi-step workflows for question answering

- Embedding and indexing pipelines

- API and SDK support for customization

Pros

- Flexible, developer-first framework

- Supports multiple LLMs and data sources

- Large open-source community and documentation

Cons

- Requires coding expertise

- Self-hosting and scaling may require technical setup

Platforms / Deployment

- Web, Cloud, Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Supports a wide array of vector databases, embeddings, and LLM providers.

- Pinecone, Weaviate, FAISS

- OpenAI, Cohere, Hugging Face LLMs

- Custom API connectors

Support & Community

- Strong open-source community, active tutorials, forum support

#2 — LlamaIndex (formerly GPT Index)

Short description:

LlamaIndex simplifies building RAG pipelines by structuring documents and integrating them with LLMs for retrieval-based responses.

Key Features

- Data connectors for multiple file formats

- Document indexing and embedding support

- Query abstraction layer for LLMs

- Supports local and cloud vector stores

- Pre-built templates for common RAG tasks

Pros

- Easy to use for developers and data scientists

- Supports multiple LLM providers

- Rapid prototyping of RAG applications

Cons

- Limited enterprise monitoring features

- Documentation may be less detailed than LangChain's

Platforms / Deployment

- Web, Cloud, Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Compatible with FAISS, Pinecone, Weaviate

- Hugging Face and OpenAI LLMs

- REST API for embedding ingestion

Support & Community

- Open-source community and tutorials

#3 — Haystack

Short description:

Haystack is an open-source framework for building production-ready RAG and search systems, providing pipelines, retrievers, and generators.

Key Features

- Modular pipelines for retrieval and generation

- Support for dense and sparse retrieval

- Integrations with multiple vector stores

- Evaluation tools for QA systems

- Multi-modal data support
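Dense and sparse retrieval are often combined by fusing their ranked results. One common way to do that is reciprocal rank fusion (RRF), sketched below under stated assumptions: the document IDs and the two input rankings are made up, the constant `k=60` is a conventional default rather than a tuned value, and the function is a standalone illustration, not Haystack's API.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of document IDs; each list adds 1 / (k + rank) per document."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first.
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


# Hypothetical outputs of a keyword (sparse) and an embedding (dense) retriever.
sparse_ranking = ["doc_a", "doc_b", "doc_c"]
dense_ranking = ["doc_b", "doc_d", "doc_a"]

fused = reciprocal_rank_fusion([sparse_ranking, dense_ranking])
print(fused)  # ['doc_b', 'doc_a', 'doc_d', 'doc_c']
```

Documents that both retrievers rank highly rise to the top, while documents found by only one retriever still appear lower in the fused list.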

Pros

- Production-ready RAG pipelines

- Extensive vector database integration

- Active developer community

Cons

- Self-hosting can be complex

- Requires familiarity with ML pipelines

Platforms / Deployment

- Web, Cloud, Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- FAISS, Milvus, Pinecone

- Hugging Face Transformers, OpenAI LLMs

- Elasticsearch, SQL, NoSQL

Support & Community

- Documentation, GitHub issues, community support

#4 — Cohere RAG

Short description:

Cohere provides managed RAG solutions combining retrieval from embeddings with their LLMs for enterprise-ready applications.

Key Features

- Managed embeddings and search API

- Integration with proprietary data

- Real-time response generation

- Fine-tuning and customization

- Multi-language support

Pros

- Enterprise-ready and managed

- Easy integration with Cohere LLMs

- Scalable for production workloads

Cons

- Limited to Cohere ecosystem

- Less flexibility than open-source frameworks

Platforms / Deployment

- Web, Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Vector databases like Pinecone

- Custom knowledge sources

- API for embedding ingestion

Support & Community

- Managed support, documentation, customer onboarding

#5 — Microsoft PromptFlow / Semantic Kernel

Short description:

Microsoft tools provide RAG capabilities through orchestration of prompts and retrieval across knowledge stores, suitable for enterprise integrations.

Key Features

- Prompt orchestration and RAG pipelines

- Integration with Azure AI Search (formerly Azure Cognitive Search)

- Multi-step workflows

- Embedding and indexing pipelines

- SDK and template support

Pros

- Tight integration with Azure ecosystem

- Enterprise-grade security and monitoring

- Supports hybrid cloud deployments

Cons

- Limited outside Microsoft ecosystem

- Learning curve for non-Azure developers

Platforms / Deployment

- Web, Cloud, Hybrid

Security & Compliance

- SOC 2, ISO 27001, enterprise-level RBAC

Integrations & Ecosystem

- Azure AI Search (formerly Azure Cognitive Search), vector databases

- Microsoft LLMs and OpenAI API

- API connectors for knowledge ingestion

Support & Community

- Enterprise support, documentation, forums

#6 — Flowise

Short description:

Flowise is an open-source RAG tool for low-code AI application building, combining retrievers and LLMs in visual pipelines.

Key Features

- Visual workflow builder for RAG

- Integrates with multiple vector stores

- Supports embeddings and indexing

- Low-code approach for rapid prototyping

- Monitoring and debugging tools

Pros

- Low-code for faster development

- Open-source and flexible

- Supports multiple LLMs and retrieval backends

Cons

- May lack enterprise support

- Limited scalability for large datasets

Platforms / Deployment

- Web, Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- FAISS, Pinecone, Milvus

- OpenAI, Hugging Face LLMs

- API connectivity for custom data sources

Support & Community

- Open-source community and tutorials

#7 — Weaviate RAG

Short description:

Weaviate is a vector database with native RAG capabilities, allowing AI models to retrieve from structured and unstructured data efficiently.

Key Features

- Vector search and semantic retrieval

- Multi-modal data support

- Real-time API queries

- LLM orchestration via integrations

- Schema and knowledge graph management

Pros

- Strong vector search and RAG integration

- Scalable for enterprise workloads

- Multi-modal and hybrid data support

Cons

- Learning curve for complex setups

- Additional integration required for LLM orchestration

Platforms / Deployment

- Web, Cloud, Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Hugging Face, OpenAI, Cohere

- Python SDK, REST API

- Integration with BI and dashboards

Support & Community

- Active open-source community and documentation

#8 — Pinecone

Short description:

Pinecone is a managed vector database for retrieval-heavy RAG applications, offering fast similarity search for AI generation workflows.

Key Features

- Real-time vector similarity search

- Scalable managed service

- Integration with LLMs and embeddings

- Multi-region deployment

- API for rapid integration

Pros

- High performance and low-latency retrieval

- Fully managed service

- Supports multi-modal embeddings

Cons

- Limited built-in orchestration

- Cost may grow with scale

Platforms / Deployment

- Web, Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- OpenAI, Hugging Face, LangChain, LlamaIndex

- Python and REST APIs

- Integration with knowledge stores

Support & Community

- Managed support, documentation, tutorials

#9 — Vectara

Short description:

Vectara provides managed semantic search with RAG capabilities, combining LLMs and retrieval for enterprise AI applications.

Key Features

- Semantic vector search

- Multi-domain and multi-language support

- Managed LLM retrieval pipelines

- API for embedding ingestion

- Real-time querying

Pros

- Enterprise-ready and managed

- Fast retrieval across large datasets

- Multi-language capabilities

Cons

- Less flexible than open-source frameworks

- Pricing depends on query volume

Platforms / Deployment

- Web, Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- OpenAI, Hugging Face LLMs

- Python SDK and REST API

- Integration with enterprise knowledge sources

Support & Community

- Managed support and documentation

#10 — LlamaHub / AutoGPT Integration

Short description:

LlamaHub provides pre-built connectors for RAG workflows, enabling LLMs to retrieve and generate from external knowledge efficiently.

Key Features

- Knowledge connectors for web, databases, and APIs

- Pre-built RAG templates

- Embedding and retrieval pipelines

- Multi-LLM orchestration

- Easy integration with LangChain and AutoGPT

Pros

- Rapid setup for RAG pipelines

- Supports multiple LLMs

- Open-source and extendable

Cons

- Limited monitoring and observability

- Self-hosting may require expertise

Platforms / Deployment

- Web, Cloud, Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- LangChain, LlamaIndex, AutoGPT

- Vector databases like Pinecone, Weaviate

- Custom API connectors

Support & Community

- Documentation, tutorials, active GitHub community

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| LangChain | Developer RAG workflows | Web | Cloud/Self-hosted | Flexible orchestration | N/A |
| LlamaIndex | Document-based RAG | Web | Cloud/Self-hosted | Easy indexing pipelines | N/A |
| Haystack | Production QA pipelines | Web | Cloud/Self-hosted | Modular retrieval & generation | N/A |
| Cohere RAG | Managed enterprise RAG | Web | Cloud | Managed embeddings | N/A |
| Microsoft PromptFlow | Enterprise Azure RAG | Web | Cloud/Hybrid | Prompt orchestration | N/A |
| Flowise | Low-code RAG | Web | Self-hosted | Visual pipeline builder | N/A |
| Weaviate RAG | Vector & multi-modal RAG | Web | Cloud/Self-hosted | Native semantic retrieval | N/A |
| Pinecone | Vector similarity retrieval | Web | Cloud | Fast vector search | N/A |
| Vectara | Managed semantic search | Web | Cloud | Multi-language retrieval | N/A |
| LlamaHub | Pre-built connectors | Web | Cloud/Self-hosted | Easy RAG integration | N/A |

Evaluation & Scoring of RAG Tooling

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 8 | 9 | 7 | 8 | 7 | 8 | 8.20 |
| LlamaIndex | 8 | 8 | 8 | 7 | 8 | 7 | 8 | 7.80 |
| Haystack | 8 | 7 | 8 | 7 | 7 | 7 | 8 | 7.55 |
| Cohere RAG | 8 | 8 | 7 | 7 | 8 | 8 | 7 | 7.60 |
| Microsoft PromptFlow | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7.45 |
| Flowise | 7 | 8 | 7 | 7 | 7 | 6 | 8 | 7.20 |
| Weaviate RAG | 8 | 7 | 8 | 7 | 8 | 7 | 7 | 7.50 |
| Pinecone | 8 | 8 | 8 | 7 | 9 | 7 | 7 | 7.75 |
| Vectara | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| LlamaHub | 7 | 8 | 7 | 7 | 7 | 6 | 7 | 7.05 |

Interpretation: Higher weighted totals indicate stronger RAG capabilities, integration support, and ease of deployment. Scores are comparative, not absolute.
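A weighted total is the sum of each criterion score multiplied by its weight. The sketch below recomputes one row from the column weights in the table header; the dictionary keys are shorthand names chosen for this example, and the scores are the LangChain row's component values. Recomputing a row this way is a quick consistency check on any published total.

```python
# Column weights from the scoring table, in percent.
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores: dict) -> float:
    """Return the 0-10 weighted total for one tool's per-criterion scores."""
    if sum(WEIGHTS.values()) != 100:
        raise ValueError("weights must sum to 100 percent")
    return sum(scores[name] * weight for name, weight in WEIGHTS.items()) / 100


# Component scores from the LangChain row.
langchain = {
    "core": 9,
    "ease": 8,
    "integrations": 9,
    "security": 7,
    "performance": 8,
    "support": 7,
    "value": 8,
}

print(weighted_total(langchain))
```

Adjusting the weights to match your own priorities (for example, raising Security for regulated data) may reorder the shortlist.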

Which RAG Tool Is Right for You?

Solo / Freelancer

- LangChain or LlamaIndex offer flexible, lightweight frameworks for personal or small-scale RAG projects.

SMB

- Flowise or Haystack provide low-code or manageable RAG pipelines suitable for small teams.

Mid-Market

- Weaviate RAG or Pinecone deliver scalable, efficient retrieval pipelines with enterprise integrations.

Enterprise

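- Microsoft PromptFlow and Cohere RAG target large-scale, production-ready RAG deployments with enterprise support. LlamaHub can add pre-built connectors on top, but its monitoring and observability are limited, so pair it with separate tooling.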

Budget vs Premium

- Open-source frameworks are cost-effective but require technical expertise; managed enterprise tools provide support and compliance at higher cost.

Feature Depth vs Ease of Use

- LangChain and Flowise balance ease and customization, while Microsoft PromptFlow and Cohere offer deep enterprise features but a steeper learning curve.

Integrations & Scalability

- Enterprise-grade tools support multi-cloud, hybrid deployments, vector databases, and extensive APIs. SMB/developer tools may have simpler integrations.

Security & Compliance Needs

- Managed enterprise tools generally ship with built-in security and compliance controls, whereas open-source frameworks may require additional configuration to protect sensitive data.

Frequently Asked Questions (FAQs)

1. What is RAG tooling used for?

RAG tools combine language models with external knowledge to provide accurate, context-aware AI outputs. They are widely used for enterprise QA, content generation, and knowledge management.

2. Are these tools suitable for small projects?

Yes, frameworks like LangChain and Flowise can be used by solo developers or small teams, though enterprise tools are optimized for large-scale deployments.

3. How do RAG tools integrate with data sources?

They connect with vector databases, document stores, APIs, and cloud services to retrieve relevant knowledge for generation.

4. Can multiple LLMs be used simultaneously?

Yes, many frameworks support orchestration across multiple LLMs to improve response accuracy and reliability.
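One simple form of multi-LLM orchestration is a fallback chain: try a primary model and move to the next if it fails. The sketch below is an illustration under assumptions, not any framework's API. Both `primary_model` and `backup_model` are hypothetical stand-ins for real model clients, and the timeout is simulated.

```python
from typing import Callable, Sequence


def primary_model(prompt: str) -> str:
    """Hypothetical primary model; simulates an outage."""
    raise TimeoutError("primary model timed out")


def backup_model(prompt: str) -> str:
    """Hypothetical backup model; always succeeds in this sketch."""
    return f"backup answer for: {prompt}"


def generate_with_fallback(prompt: str, models: Sequence[Callable[[str], str]]) -> str:
    """Return the first successful response, trying the models in order."""
    errors = []
    for model in models:
        try:
            return model(prompt)
        except Exception as exc:  # a real system would catch narrower error types
            errors.append(f"{model.__name__}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


print(generate_with_fallback("What is RAG?", [primary_model, backup_model]))
```

The same loop structure extends to routing by cost or task type, where the ordering of `models` encodes the preference.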

5. What deployment options exist?

RAG tools can be cloud-native, hybrid, or self-hosted depending on enterprise requirements and data sensitivity.

6. Are these tools open-source or managed?

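Both exist. LangChain, LlamaIndex, Haystack, and Flowise are open-source frameworks; Cohere RAG, Pinecone, and Vectara are managed services. Microsoft's Semantic Kernel is an open-source SDK, though it is typically paired with managed Azure services.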

7. How do these tools improve AI accuracy?

By retrieving relevant knowledge, RAG reduces hallucinations and ensures outputs are grounded in up-to-date or proprietary information.

8. What is the role of embeddings in RAG?

Embeddings represent text or data in vector form, enabling similarity search and relevance-based retrieval for the generative model.
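Similarity search over embeddings usually comes down to comparing vectors, most often with cosine similarity. The sketch below uses hand-written three-dimensional vectors as stand-ins for real embeddings. The index contents and the query vector are invented for illustration, and a production system would use a vector database rather than a linear scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical index: document name -> embedding vector.
INDEX = {
    "pricing page": [0.9, 0.1, 0.0],
    "refund policy": [0.1, 0.9, 0.2],
    "api reference": [0.0, 0.2, 0.9],
}


def top_k(query_vec, k=2):
    """Return the names of the k documents most similar to the query vector."""
    ranked = sorted(
        INDEX,
        key=lambda name: cosine_similarity(query_vec, INDEX[name]),
        reverse=True,
    )
    return ranked[:k]


# A query vector that points mostly along the second dimension.
print(top_k([0.2, 0.8, 0.1]))  # ['refund policy', 'pricing page']
```

The retrieved names would then be mapped back to their source passages and handed to the generative model as context.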

9. Can RAG handle multi-modal data?

Yes, many modern RAG tools support text, tables, and PDFs, and some also handle images for retrieval and generation.

10. How do I scale RAG pipelines?

Scalable RAG deployment requires managed vector stores, cloud or hybrid deployment, and monitoring to ensure low latency for large query volumes.

Conclusion

RAG tooling has become critical for organizations seeking to combine generative AI with reliable, contextually relevant knowledge retrieval. Choosing the right tool depends on scale, technical resources, and domain requirements. Developers and SMBs may prioritize flexible open-source frameworks like LangChain and LlamaIndex, while mid-market and enterprise teams benefit from managed, scalable solutions like Cohere RAG, Microsoft PromptFlow, or Pinecone. Organizations should shortlist suitable platforms, pilot them with representative data, validate performance, and confirm integration with existing pipelines before full deployment. Properly implemented, RAG tooling enables trustworthy, high-quality generative AI applications at scale.