Introduction

AI Safety & Evaluation Tools are platforms and frameworks designed to assess, monitor, and mitigate risks in AI systems. They help organizations ensure that AI models behave reliably, ethically, and in alignment with business goals. By providing mechanisms to evaluate model performance, robustness, bias, and safety, these tools support responsible AI deployment and long-term trust.

The importance of AI safety has grown as AI adoption expands across sensitive domains such as healthcare, finance, and autonomous systems. Unsafe or poorly evaluated AI can lead to biased decisions, operational failures, or regulatory violations. AI Safety & Evaluation Tools offer structured frameworks to test AI behavior under various conditions, track performance, and generate actionable insights.

Real-world use cases include:

- Stress-testing AI models for edge-case behavior and robustness.

- Detecting and mitigating bias or unfair outputs in decision-making systems.

- Evaluating AI performance in safety-critical applications such as autonomous vehicles or medical diagnostics.

- Validating model outputs against regulatory standards and organizational policies.

- Monitoring AI in production to detect drift, errors, or unsafe predictions.
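As one concrete illustration of the production-monitoring use case above, drift in a single numeric feature can be scored with the Population Stability Index (PSI). The sketch below is a minimal, standard-library version and not the method of any tool in this list; the function names, the equal-width binning, and the `DRIFT_THRESHOLD` value are assumptions chosen for illustration.

```python
import math
from typing import Sequence

# Illustrative alert threshold (an assumption; tune it per feature and use case).
DRIFT_THRESHOLD = 0.2


def population_stability_index(
    reference: Sequence[float],
    production: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Return the PSI between a reference sample and a production sample."""
    if not reference or not production:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    # Equal-width bins over the reference range; fall back to width 1.0
    # when every reference value is identical.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp outliers to edge bins
        # Floor each proportion at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(reference), proportions(production)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def has_drifted(
    reference: Sequence[float],
    production: Sequence[float],
    threshold: float = DRIFT_THRESHOLD,
) -> bool:
    """Flag drift when the PSI exceeds the (assumed) threshold."""
    return population_stability_index(reference, production) > threshold


# Hypothetical data: a uniform reference sample and a shifted production sample.
reference = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]
print(has_drifted(reference, reference))
print(has_drifted(reference, shifted))
```

A commercial platform would typically run a check like this per feature on a schedule and route alerts to the owning team; the sketch only shows the scoring step.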

Evaluation Criteria for Buyers:

- Core safety and evaluation features

- Ease of use and accessibility for data teams

- Integration with existing ML pipelines

- Security and compliance support

- Scalability for large model portfolios

- Reporting and auditing capabilities

- Model explainability and interpretability

- Support for multi-modal and multi-platform AI

- Real-time monitoring and alerting

- Customizability for organization-specific safety policies

Best for: AI engineers, MLOps teams, risk and compliance officers, data science leaders, and organizations deploying AI in regulated or high-stakes domains.

Not ideal for: Small-scale or experimental AI projects with low risk exposure, where manual checks may suffice.

Key Trends in AI Safety & Evaluation Tools

- Growing adoption of automated AI evaluation frameworks for safety and bias detection.

- Integration with model monitoring tools for real-time safety assessment.

- Increased focus on explainable AI (XAI) and interpretability features.

- Emergence of standardized AI safety benchmarks across industries.

- Tools offering both pre-deployment testing and post-deployment monitoring.

- Multi-modal AI evaluation for language, vision, and combined models.

- Cloud-native platforms supporting hybrid and multi-cloud AI operations.

- Open-source and enterprise solutions coexisting for flexibility and scalability.

- Expansion of safety-focused APIs for integration with existing MLOps pipelines.

How We Selected These Tools (Methodology)

- Assessed market adoption and reputation in AI safety evaluation.

- Reviewed core capabilities for risk mitigation, bias detection, and model validation.

- Evaluated reliability, uptime, and performance under production conditions.

- Considered security posture, compliance features, and data protection.

- Examined integrations with popular AI/ML frameworks and pipelines.

- Analyzed applicability across enterprise, SMB, and developer-focused scenarios.

- Checked vendor support, documentation, and community engagement.

- Ensured coverage of multi-modal AI and real-time monitoring.

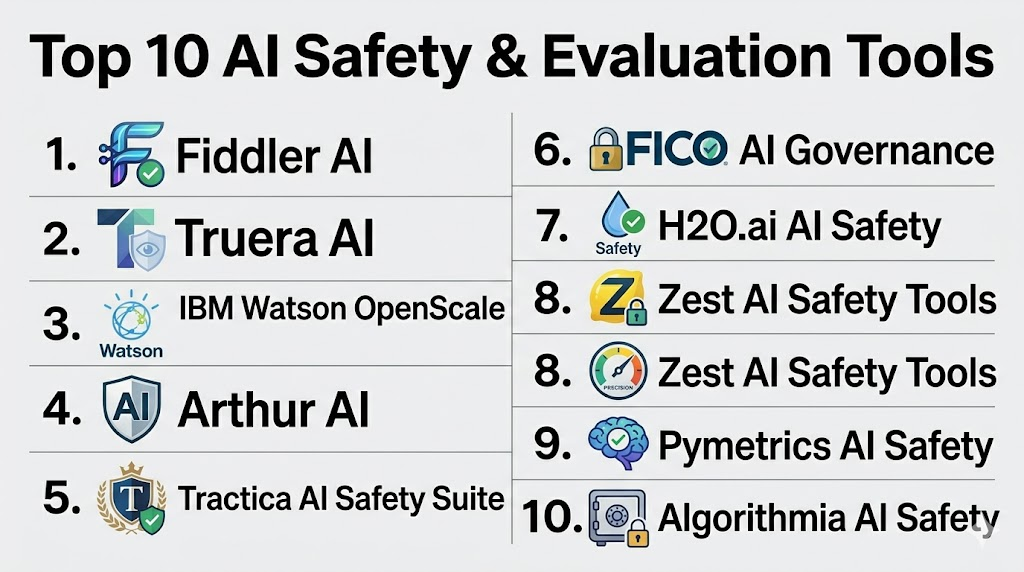

Top 10 AI Safety & Evaluation Tools

#1 — Fiddler AI

Short description:

Fiddler AI provides monitoring, evaluation, and explainability for AI models in production. It enables organizations to detect bias, drift, and safety risks across business-critical AI applications.

Key Features

- Real-time model monitoring

- Bias and fairness detection

- Explainable AI dashboards

- Policy and compliance enforcement

- Integration with multiple ML platforms

Pros

- Strong visualization for model performance

- Enterprise-ready reporting and audit features

- Supports hybrid and cloud deployment

Cons

- Pricing may be high for small teams

- Technical learning curve for non-data stakeholders

Platforms / Deployment

- Web, Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Integrates with cloud ML platforms, pipelines, and BI dashboards.

- Python SDK & REST API

- Cloud services: AWS, Azure, GCP

- Data pipelines for monitoring and retraining

Support & Community

- Enterprise support, onboarding programs, active documentation

#2 — Truera AI

Short description:

Truera AI focuses on model intelligence and safety evaluation, offering transparency, fairness monitoring, and explainability for enterprise AI systems.

Key Features

- Bias detection and fairness reporting

- Performance monitoring across multiple dimensions

- Explainable AI dashboards

- Policy enforcement for risk mitigation

- Continuous audit and alerting

Pros

- Comprehensive bias and performance monitoring

- Real-time alerts for unsafe outputs

- Integrates with existing MLOps workflows

Cons

- May require technical expertise for setup

- Smaller ecosystem than some enterprise alternatives

Platforms / Deployment

- Web, Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- MLflow, DataRobot, and cloud ML pipelines

- API-based integration with monitoring and dashboards

- Support for multiple model formats

Support & Community

- Enterprise support and active documentation

#3 — IBM Watson OpenScale

Short description:

IBM Watson OpenScale delivers AI governance and safety monitoring with bias detection, explainability, and compliance reporting for enterprise AI deployments.

Key Features

- Continuous monitoring and bias detection

- Explainable AI insights for business users

- Compliance and audit reporting

- Integration with hybrid and cloud environments

- Policy enforcement workflows

Pros

- Enterprise-grade governance and evaluation

- Supports multi-cloud and hybrid environments

- Deep integration with IBM AI ecosystem

Cons

- Complexity requires trained personnel

- Premium pricing for SMBs

Platforms / Deployment

- Web, Cloud, Hybrid

Security & Compliance

- SOC 2, ISO 27001, enterprise RBAC & encryption

Integrations & Ecosystem

- IBM Cloud services

- APIs for hybrid ML deployments

- Reporting dashboards

Support & Community

- Strong enterprise support, documentation, and training

#4 — Arthur AI

Short description:

Arthur AI offers monitoring, safety evaluation, and explainability for production models. It focuses on drift detection, bias alerts, and compliance dashboards.

Key Features

- Real-time model performance monitoring

- Bias and fairness assessment

- Explainable AI insights

- Policy enforcement and alerting

- Reporting for audit and compliance

Pros

- Strong real-time safety monitoring

- Hybrid deployment support

- Bias detection across multiple dimensions

Cons

- Smaller ecosystem than enterprise platforms

- Cost scales with number of monitored models

Platforms / Deployment

- Web, Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Cloud ML services, BI dashboards, MLOps pipelines

- REST APIs for integration

- Monitoring tools for hybrid environments

Support & Community

- Documentation and customer support

#5 — Tractica AI Safety Suite

Short description:

Tractica provides a suite for AI evaluation, including robustness testing, risk assessment, and bias mitigation for enterprise models.

Key Features

- Model robustness and stress testing

- Bias and fairness analytics

- Risk scoring and policy enforcement

- Integration with CI/CD pipelines

- Explainability dashboards

Pros

- Enterprise-level safety evaluation

- Multi-model support

- Actionable risk insights

Cons

- Technical setup may be complex

- Limited SMB-focused offerings

Platforms / Deployment

- Web, Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- CI/CD pipelines, cloud ML services, API support

- Dashboard integration for reporting

- Data pipeline connectivity

Support & Community

- Enterprise support and technical documentation

#6 — FICO AI Governance

Short description:

FICO provides AI evaluation tools with a focus on financial models, risk mitigation, and compliance reporting.

Key Features

- Bias and fairness monitoring

- Regulatory reporting

- Explainable AI dashboards

- Model approval workflows

- Integration with enterprise AI systems

Pros

- Finance-focused governance and safety

- Supports compliance with financial regulations

- Enterprise-grade reporting

Cons

- Limited to financial sector applications

- Cost can be high for smaller teams

Platforms / Deployment

- Web, Cloud

Security & Compliance

- SOC 2; other certifications not publicly stated

Integrations & Ecosystem

- Enterprise AI systems

- Financial data warehouses

- Reporting dashboards

Support & Community

- Professional services and documentation

#7 — H2O.ai AI Safety

Short description:

H2O.ai safety tools evaluate AI models for bias, performance, and robustness. Suitable for both open-source and enterprise environments.

Key Features

- Model validation and fairness checks

- Explainable AI dashboards

- Policy enforcement workflows

- Integration with AI pipelines

- Risk scoring and audit reporting

Pros

- Supports open-source and enterprise models

- Scalable deployment options

- Strong explainability

Cons

- Limited pre-built regulatory templates

- Requires technical expertise

Platforms / Deployment

- Web, Cloud, Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- H2O Driverless AI

- BI and reporting tools

- API and SDK support

Support & Community

- Documentation and active community

#8 — Zest AI Safety Tools

Short description:

Zest AI provides evaluation tools for credit models, focusing on fairness, explainability, and compliance.

Key Features

- Bias detection and fairness monitoring

- Explainable AI dashboards

- Policy enforcement for regulated use cases

- Integration with financial systems

- Audit-ready reporting

Pros

- Finance-focused safety evaluation

- Easy-to-read dashboards

- Supports regulatory compliance

Cons

- Limited to credit/finance applications

- Not suitable for general AI use

Platforms / Deployment

- Web, Cloud

Security & Compliance

- SOC 2; other certifications not publicly stated

Integrations & Ecosystem

- Financial data systems

- Enterprise AI pipelines

- Reporting dashboards

Support & Community

- Customer support and documentation

#9 — Pymetrics AI Safety

Short description:

Pymetrics evaluates HR AI models for fairness, bias, and compliance in recruitment and talent assessment.

Key Features

- Bias detection in hiring models

- Compliance reporting

- Explainable AI dashboards

- Policy enforcement workflows

- Integration with HR systems

Pros

- Focused on HR and recruitment AI

- Transparency in candidate evaluation

- Easy integration with HRIS

Cons

- Limited to talent/HR domain

- Smaller ecosystem for integrations

Platforms / Deployment

- Web, Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- HRIS platforms, ATS, reporting dashboards

Support & Community

- Documentation and customer support

#10 — Algorithmia AI Safety

Short description:

Algorithmia offers AI evaluation and monitoring, focusing on risk, drift, and safety in MLOps pipelines for developers and enterprises.

Key Features

- Model monitoring and alerting

- Governance policies for safety

- Bias and fairness evaluation

- Integration with CI/CD pipelines

- Audit logging

Pros

- Developer-friendly

- Integrates with MLOps pipelines

- Supports multiple model types

Cons

- Fewer enterprise compliance templates

- Requires technical setup

Platforms / Deployment

- Web, Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- CI/CD platforms

- ML orchestration tools

- API extensibility

Support & Community

- Documentation, forums, professional support

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Fiddler AI | Enterprise monitoring | Web | Cloud | Real-time explainability | N/A |
| Truera AI | Bias & performance monitoring | Web | Cloud | Continuous audit & monitoring | N/A |
| IBM Watson OpenScale | Large enterprise | Web | Cloud/Hybrid | Compliance & bias reporting | N/A |
| Arthur AI | Production monitoring | Web | Cloud | Drift & bias detection | N/A |
| Tractica AI Safety Suite | Enterprise risk evaluation | Web | Cloud | Multi-model robustness testing | N/A |
| FICO AI Governance | Finance models | Web | Cloud | Regulatory compliance tracking | N/A |
| H2O.ai AI Safety | Open-source + enterprise | Web | Cloud/Hybrid | Model validation & explainability | N/A |
| Zest AI Safety Tools | Finance AI | Web | Cloud | Explainable AI for credit | N/A |
| Pymetrics AI Safety | HR & talent | Web | Cloud | Recruitment fairness dashboards | N/A |
| Algorithmia AI Safety | Developer pipelines | Web | Cloud | CI/CD integration for safety | N/A |

Evaluation & Scoring of AI Safety & Evaluation Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| Fiddler AI | 9 | 8 | 8 | 7 | 8 | 8 | 7 | 8.00 |
| Truera AI | 8 | 8 | 7 | 7 | 7 | 7 | 7 | 7.40 |
| IBM Watson OpenScale | 9 | 7 | 8 | 8 | 8 | 8 | 6 | 7.80 |
| Arthur AI | 8 | 8 | 7 | 7 | 7 | 7 | 7 | 7.40 |
| Tractica AI Safety Suite | 8 | 7 | 7 | 7 | 7 | 7 | 7 | 7.25 |
| FICO AI Governance | 8 | 7 | 6 | 8 | 7 | 7 | 6 | 7.05 |
| H2O.ai AI Safety | 8 | 7 | 7 | 7 | 7 | 7 | 8 | 7.40 |
| Zest AI Safety Tools | 7 | 8 | 6 | 8 | 7 | 6 | 6 | 6.85 |
| Pymetrics AI Safety | 7 | 8 | 6 | 7 | 7 | 6 | 6 | 6.75 |
| Algorithmia AI Safety | 7 | 8 | 8 | 7 | 7 | 6 | 7 | 7.20 |

Interpretation: Higher weighted totals indicate better overall safety coverage, usability, and integration in AI pipelines. Scores are comparative, not absolute.
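For readers who want to re-weight the criteria for their own priorities, a weighted total is simply the sum of each criterion score multiplied by its weight. The sketch below uses the column weights from the scoring table; the dictionary keys, the function name, and the `hypothetical_tool` scores are illustrative assumptions and do not correspond to any tool listed here.

```python
# Column weights taken from the scoring table header; the key names are assumptions.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: dict[str, float]) -> float:
    """Combine per-criterion scores (0-10) into a single weighted total (0-10)."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical scores for an unnamed tool, for illustration only.
hypothetical_tool = {
    "core": 8,
    "ease": 6,
    "integrations": 7,
    "security": 9,
    "performance": 7,
    "support": 8,
    "value": 6,
}
print(round(weighted_total(hypothetical_tool), 2))
```

Changing the values in `WEIGHTS` (while keeping them summing to 1.0) is a quick way to see how a shortlist reorders when, for example, security matters more than ease of use.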

Which AI Safety & Evaluation Tool Is Right for You?

Solo / Freelancer

- Lighter-weight, developer-first options such as Truera AI or Algorithmia AI Safety may be sufficient for small-scale AI evaluation projects.

SMB

- Platforms such as Arthur AI or Algorithmia AI Safety offer straightforward monitoring and evaluation features with manageable setup.

Mid-Market

- H2O.ai AI Safety or Tractica AI provide scalable safety and evaluation frameworks, integrating well with existing AI workflows.

Enterprise

- IBM Watson OpenScale, FICO AI Governance, and Fiddler AI provide comprehensive monitoring, bias detection, compliance reporting, and enterprise-grade auditability.

Budget vs Premium

- Open-source or developer-first tools offer lower costs but require technical expertise. Enterprise solutions provide full governance, support, and regulatory features at a higher price point.

Feature Depth vs Ease of Use

- IBM Watson OpenScale provides rich features but may require specialized training. Fiddler AI and Arthur AI balance feature depth with user-friendly dashboards.

Integrations & Scalability

- Enterprise-grade platforms support multi-cloud, hybrid deployments, and extensive API integrations. SMB/developer tools may have simpler integration options.

Security & Compliance Needs

- Highly regulated industries benefit from SOC 2 / ISO 27001 compliant platforms. Other organizations may prioritize monitoring, bias detection, and explainability.

Frequently Asked Questions (FAQs)

1. What pricing models do AI safety tools use?

Most platforms are subscription-based, often tiered by the number of monitored models, users, or evaluation volume.

2. How long does implementation take?

Implementation varies from a few days for cloud-native tools to several weeks for enterprise hybrid deployments, depending on integrations and policy configuration.

3. Can these tools monitor models in real time?

Yes, tools like Fiddler AI, Arthur AI, and Truera AI provide real-time alerts for drift, bias, and unsafe outputs.

4. Are these tools suitable for small teams?

Developer-focused tools like Algorithmia AI Safety or Truera AI are suitable for small teams, while enterprise platforms may be overkill.

5. What integrations are typically supported?

Most platforms integrate with ML frameworks, cloud services, data pipelines, CI/CD systems, and dashboards.

6. Do these tools provide audit logs?

Yes, most platforms include audit trails covering monitoring decisions, bias checks, and compliance reporting.

7. Can AI bias be reduced using these tools?

Yes, bias detection, fairness metrics, and mitigation strategies are core features in most AI safety platforms.
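To make "fairness metrics" concrete, two commonly used group-level measures are the demographic parity difference and the disparate impact ratio, both derived from per-group selection rates. The sketch below is a minimal standard-library illustration, not the implementation of any platform in this list; the function names and the sample data are hypothetical, and it covers detection only, not mitigation.

```python
from collections import defaultdict
from typing import Hashable, Sequence


def selection_rates(
    predictions: Sequence[int], groups: Sequence[Hashable]
) -> dict[Hashable, float]:
    """Share of positive (1) predictions within each group."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must have the same length")
    totals: dict[Hashable, int] = defaultdict(int)
    positives: dict[Hashable, int] = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(rates: dict[Hashable, float]) -> float:
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates: dict[Hashable, float]) -> float:
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical model outputs for two groups, for illustration only.
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = selection_rates(predictions, groups)
print(rates)
print(demographic_parity_difference(rates))
print(disparate_impact_ratio(rates))
```

Some teams compare the ratio against 0.8 (the "four-fifths" rule of thumb), but any threshold is a policy choice that should be set with legal and compliance input rather than taken from this sketch.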

8. Are open-source options viable?

Open-source tools work well for technically skilled teams but may require additional setup for compliance and monitoring.

9. How do I migrate between tools?

Migration requires exporting policies, model data, and historical logs. API compatibility and vendor support are key for smooth transitions.

10. Are these tools industry-specific?

Some platforms specialize in finance, HR, or healthcare, while enterprise-grade solutions provide cross-industry support.

Conclusion

AI Safety & Evaluation Tools are essential for organizations deploying AI responsibly. They provide mechanisms to assess model performance, detect bias, monitor safety, and maintain compliance with regulations. Selection depends on company size, industry, technical resources, and regulatory requirements. Enterprise teams may prioritize comprehensive safety and auditability, whereas SMBs or solo developers may value ease of use and integration. Organizations should shortlist a few platforms, run pilots on key AI models, and validate safety, bias detection, and integration features before full-scale adoption to ensure trustworthy AI operations.