Introduction

Deep learning frameworks are specialized software libraries designed to simplify the creation, training, and deployment of artificial neural networks. These frameworks provide high-level abstractions for complex mathematical operations, such as backpropagation and gradient descent, allowing developers to focus on architectural design rather than low-level implementation. By leveraging hardware acceleration through GPUs and TPUs, these tools enable the processing of massive datasets to solve problems in computer vision, natural language processing, and autonomous systems.
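
To make concrete what these abstractions replace, the following minimal sketch fits a one-variable linear model with hand-derived gradients and plain gradient descent, using only the Python standard library. The function name `fit_line`, the learning rate, and the toy data are illustrative assumptions and do not come from any framework. A real framework would derive the gradients automatically and run the arithmetic on an accelerator.

```python
def fit_line(xs, ys, lr=0.05, steps=2000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Hand-derived gradients of MSE = mean((w*x + b - y)^2)
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step against the gradient to reduce the error
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy data generated from y = 2x + 1 (hypothetical example values)
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = fit_line(xs, ys)
print(round(w, 3), round(b, 3))  # approaches the true slope 2.0 and intercept 1.0
```

Even in this tiny case the gradient formulas had to be worked out by hand; automating that step for arbitrary architectures is the core service a deep learning framework provides.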

The evolution of these platforms has democratized artificial intelligence, moving it from academic research into the core of enterprise technology. Modern frameworks offer a mix of imperative and declarative programming styles, catering to both researchers who need flexibility and engineers who require production stability. As deep learning becomes a standard component of software stacks, these frameworks act as the foundational engine for intelligent applications across every industry.

Real-world use cases:

- Autonomous Driving: Training perception models to identify pedestrians and obstacles in real-time.

- Medical Imaging: Developing diagnostic tools that detect anomalies in X-rays and MRI scans.

- Recommendation Engines: Powering personalized content delivery on social media and e-commerce platforms.

- Natural Language Translation: Building real-time translation tools for global communication.

- Financial Forecasting: Analyzing market trends and historical data to predict stock movements and risk.

Evaluation criteria for buyers:

- Ease of Use: The simplicity of the API and how gentle the learning curve is for new developers.

- Performance: Speed of training and inference, especially when scaled across multiple nodes.

- Community Support: The volume of available tutorials, pre-trained models, and forum discussions.

- Deployment Options: Ease of moving models from a local environment to mobile, web, or cloud production.

- Hardware Compatibility: Native support for various accelerators like NVIDIA GPUs or specialized AI chips.

- Flexibility: The ability to customize layers, loss functions, and training loops for research.

- Library Ecosystem: Availability of specialized extensions for vision, audio, or text.

- Scalability: Efficiency in handling distributed training on massive clusters.

- Visualization Tools: Quality of integrated dashboards for monitoring training progress.

- Documentation: The clarity and completeness of official guides and API references.

Who Should Use These Frameworks

- Best for: Data scientists, AI researchers, and software engineers building complex predictive models or automated systems that require high-dimensional data processing.

- Not ideal for: Simple statistical analysis, small-scale linear regression tasks, or organizations without access to significant computational resources.

Key Trends in Deep Learning Frameworks

- Standardization on Python: While multiple languages are supported, Python has become the primary interface for almost all major deep learning development.

- Pre-trained Model Hubs: A shift toward “transfer learning,” where users download massive, pre-trained models and fine-tune them for specific tasks.

- Automated Machine Learning (AutoML): Integration of features that automatically find the best architecture and hyperparameters for a given dataset.

- Compiler Optimization: Advanced graph compilers that optimize the mathematical operations for specific hardware backends automatically.

- Edge AI Focus: Increasing support for compressing models to run on mobile devices and IoT sensors with limited battery life.

- Dynamic vs. Static Graphs: A convergence where frameworks now offer both dynamic graphs for research flexibility and static graphs for production speed.

- Distributed Training Efficiency: Significant improvements in how frameworks manage communication between hundreds of GPUs during massive model training.

- AI Ethics and Explainability: New modules focused on identifying bias in models and providing “reasons” for specific AI-driven decisions.

How We Selected These Tools (Methodology)

To identify the premier deep learning frameworks, we evaluated the current market based on technical capability and professional adoption. Our methodology prioritized:

- Architectural Robustness: We focused on tools that provide reliable state management and gradient computation.

- Industry Integration: We chose frameworks that are used by top technology companies for their core products.

- Documentation Quality: We assessed how easily a technical team could implement a production-ready solution using only official resources.

- Hardware Support: Priority was given to frameworks with native support for high-performance computing clusters.

- Ecosystem Vitality: We looked for tools with a large volume of third-party contributions and pre-trained model availability.

- Maintenance Frequency: We selected active projects with consistent update cycles and security patches.

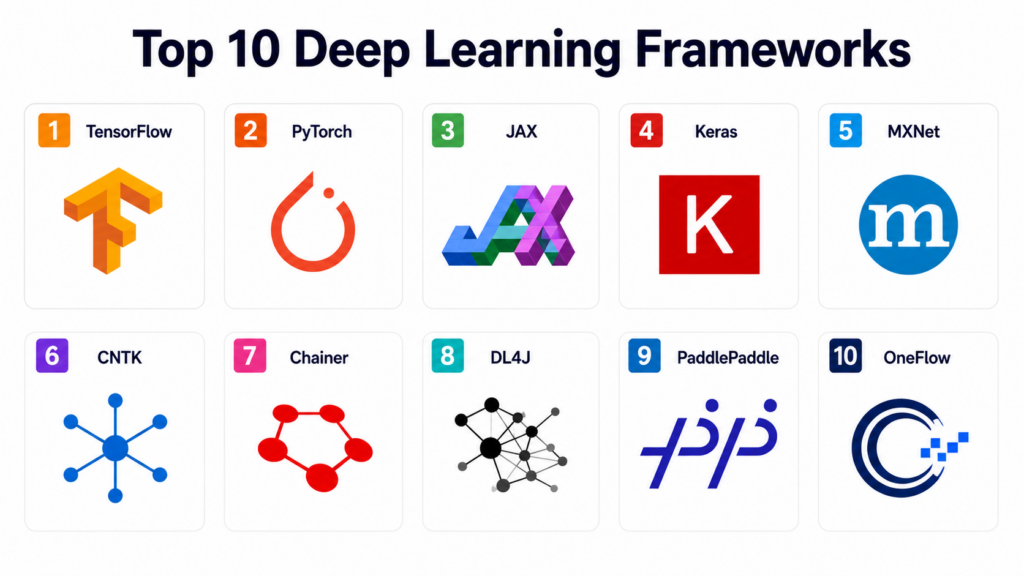

Top 10 Deep Learning Frameworks

#1 — TensorFlow

TensorFlow is a comprehensive open-source platform developed by Google that offers a flexible ecosystem of tools, libraries, and community resources for building and deploying machine learning models.

Key Features

- TensorFlow Serving: Specialized system for deploying models in production environments.

- TensorFlow Lite: A lightweight solution for mobile and embedded devices.

- Keras Integration: High-level API for fast prototyping and easy model building.

- TensorBoard: Suite of visualization tools to inspect and understand the learning process.

- Strong Scalability: Native support for distributed training across thousands of CPUs and GPUs.

- Eager Execution: An imperative programming interface that evaluates operations immediately.

Pros

- Massive ecosystem with solutions for every stage of the AI lifecycle.

- Extensive industrial support and a large pool of certified developers.

Cons

- Can have a steeper learning curve due to its vast API surface.

- Debugging can be complex compared to more imperative-first frameworks.

Platforms / Deployment

- Windows / macOS / Linux / iOS / Android

- Cloud / Edge / Browser

Security & Compliance

- SSO, RBAC, Managed environment security via Google Cloud.

- Not publicly stated.

Integrations & Ecosystem

TensorFlow is a core pillar of the Google Cloud AI ecosystem and works with most major data tools.

- Google Cloud Platform

- Keras

- Apache Spark

- Kubernetes

Support & Community

Extensive official documentation, professional certifications, and a global network of Google Developer Groups.

#2 — PyTorch

PyTorch is a flexible deep learning framework originally developed by Meta’s AI Research lab, known for its dynamic computational graph and ease of use in academic research.

Key Features

- Dynamic Computational Graph: Allows users to change the network behavior on the fly during execution.

- TorchServe: An industrial-grade model serving framework for deploying PyTorch models at scale.

- Distributed Data Parallel: Highly efficient module for training models across multiple GPUs and nodes.

- Native Python Support: Deeply integrated with the Python data science stack like NumPy and SciPy.

- TorchScript: A way to create serializable and optimizable models from PyTorch code.

- Strong Research Ecosystem: The primary choice for most modern AI research papers and experiments.
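
The idea behind data-parallel training can be illustrated without any framework: each worker computes a gradient on its own shard of the batch, and the shard gradients are averaged before the shared weights are updated. The sketch below is plain Python and does not use PyTorch's API. `shard_gradient` and `all_reduce_mean` are hypothetical helper names, and equal-sized shards are assumed so that the averaged gradient equals the full-batch gradient.

```python
def shard_gradient(w, shard):
    """Gradient of mean squared error for y = w * x on one worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values):
    """Stand-in for the collective that averages gradients across workers."""
    return sum(values) / len(values)


# Hypothetical batch of (x, y) pairs, split evenly across two simulated workers
batch = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2)]
shards = [batch[:2], batch[2:]]

w = 0.5
local_grads = [shard_gradient(w, s) for s in shards]  # computed independently
synced_grad = all_reduce_mean(local_grads)            # what every worker applies
full_grad = shard_gradient(w, batch)                  # single-device reference
```

Because every worker applies the same averaged gradient, the model replicas stay identical after each step. In a real cluster, most of the engineering effort goes into making that averaging step fast.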

Pros

- Extremely intuitive and Pythonic API that developers love.

- Superior debugging capabilities due to its imperative nature.

Cons

- Historically seen as slightly less “production-ready” than its competitors, though this has changed.

- Smaller number of built-in high-level deployment tools compared to the TensorFlow suite.

Platforms / Deployment

- Windows / macOS / Linux

- Cloud / Mobile / Edge

Security & Compliance

- Standard Python environment security and cloud provider controls.

- Not publicly stated.

Integrations & Ecosystem

PyTorch is the foundation for many high-level libraries used in the AI community.

- Hugging Face Transformers

- Weights & Biases

- Fast.ai

- Amazon SageMaker

Support & Community

Active forums, a massive GitHub presence, and the primary framework supported by the academic community.

#3 — JAX

JAX is a research-oriented library that combines a NumPy-like API with composable function transformations and the XLA compiler to support high-performance machine learning research.

Key Features

- Autograd: Automatically differentiates native Python and NumPy code.

- XLA Compiler: Uses Accelerated Linear Algebra to optimize code for GPUs and TPUs.

- JIT Compilation: Just-In-Time compilation to speed up function execution significantly.

- Vectorization: Parallelizes functions across multiple data points with simple decorators.

- Functional Programming: Follows a pure functional paradigm for predictable and reproducible research.

- TPU Native: Built from the ground up to take full advantage of Google’s Tensor Processing Units.

Pros

- Unrivaled performance for specialized mathematical and scientific computing.

- Extremely lightweight and doesn’t impose a rigid framework structure.

Cons

- Requires a shift in mindset toward functional programming, which can be difficult.

- Lacks the high-level “ready-to-go” APIs found in Keras or PyTorch.

Platforms / Deployment

- Linux / macOS

- Cloud

Security & Compliance

- Varies / N/A.

- Not publicly stated.

Integrations & Ecosystem

JAX is becoming the engine for many next-generation research libraries.

- Flax (Neural network library)

- Haiku

- Optax

- DeepMind Research Projects

Support & Community

Growing rapidly within the high-end research community, backed by Google Research and DeepMind.

#4 — Keras

Keras is a high-level deep learning API that provides a simple, modular, and extensible interface for building models, now acting as a multi-backend interface.

Key Features

- User-Centric Design: Optimized for humans, reducing cognitive load for model developers.

- Multi-Backend Support: Can run on top of TensorFlow, JAX, or PyTorch.

- Modular Architecture: Models are understood as a sequence of standalone, fully configurable modules.

- Simple Prototyping: Allows users to go from idea to result in the shortest possible time.

- Built-in Preprocessing: Comprehensive layers for data normalization and augmentation.

- Export Flexibility: Models can be easily exported for browser, mobile, or server-side deployment.

Pros

- The easiest entry point for anyone learning deep learning.

- Highly readable code that is easy for teams to maintain.

Cons

- Not ideal for researchers who need to manipulate low-level tensor operations.

- Abstraction can sometimes hide performance bottlenecks from the developer.

Platforms / Deployment

- Windows / macOS / Linux / iOS / Android

- Cloud / Browser

Security & Compliance

- Dependent on the chosen backend (TensorFlow/PyTorch/JAX).

- Not publicly stated.

Integrations & Ecosystem

Works as the high-level bridge for the major deep learning engines.

- TensorFlow

- AutoKeras

- Core ML (Apple)

- TensorRT

Support & Community

One of the most widely documented APIs in existence with massive community support.

#5 — MXNet

Apache MXNet is a lean, flexible, and ultra-scalable deep learning framework designed for efficiency on both cloud infrastructure and mobile devices.

Key Features

- Hybrid Front-end: Supports both imperative and symbolic programming styles.

- Gluon API: A high-level, easy-to-use interface for building neural networks.

- Distributed Training: Scalable across multiple GPUs and hosts with high efficiency.

- Language Support: Deep support for Python, R, Scala, Julia, C++, and more.

- Low Memory Footprint: Highly optimized for resource-constrained environments.

- TVM Integration: Uses the TVM compiler to optimize models for any hardware backend.

Pros

- Superior scalability for large enterprise deployments.

- The best choice for organizations that need multi-language support beyond Python.

Cons

- Community and tutorial ecosystem is smaller than TensorFlow or PyTorch.

- Fewer pre-trained models available in public hubs.

Platforms / Deployment

- Windows / macOS / Linux / iOS / Android

- Cloud / Edge

Security & Compliance

- Standard Apache license security and cloud IAM integrations.

- Not publicly stated.

Integrations & Ecosystem

Long promoted by AWS as its deep learning framework of choice, MXNet has deep cloud roots.

- Amazon SageMaker

- AWS DeepLens

- Apache Spark

- Kubernetes

Support & Community

Developed under the Apache Software Foundation with historical backing from Amazon. The project was retired to the Apache Attic in 2023 and is no longer actively developed.

#6 — Microsoft CNTK

The Microsoft Cognitive Toolkit (CNTK) is a commercial-grade distributed deep learning framework that describes neural networks as a series of computational steps.

Key Features

- BrainScript: A specialized language for describing neural network architectures.

- Efficient Memory Management: Highly optimized for handling large datasets in limited RAM.

- High Performance: Often outperforms other frameworks on massive speech and text tasks.

- C++ and Python APIs: Provides both high-level and low-level access to the engine.

- Extensive Component Library: Pre-built components for standard neural network layers.

- Seamless Azure Integration: Optimized for deployment on Microsoft’s cloud infrastructure.

Pros

- Exceptionally fast for large-scale production training of speech and text models.

- Strongly typed and predictable, which is beneficial for enterprise software engineering.

Cons

- CNTK is no longer actively developed; Microsoft has shifted its focus toward PyTorch and ONNX.

- Community adoption has significantly declined compared to other open-source tools.

Platforms / Deployment

- Windows / Linux

- Cloud / On-prem

Security & Compliance

- Azure Active Directory integration and standard Windows security.

- Not publicly stated.

Integrations & Ecosystem

Deeply integrated into the Microsoft and Azure data landscape.

- Azure Machine Learning

- SQL Server Machine Learning Services

- Power BI

- ONNX

Support & Community

Limited community support compared to the major leaders, with official support provided through Azure.

#7 — Chainer

Chainer is a Python-based deep learning framework that pioneered the “Define-by-Run” approach, offering a flexible and intuitive way to build complex networks.

Key Features

- Define-by-Run: Creates the computational graph on the fly, allowing for variable-length inputs.

- CuPy Integration: Uses CuPy for high-performance GPU acceleration with a NumPy-compatible API.

- ChainerRL: A comprehensive library for deep reinforcement learning.

- ChainerCV: Specialized modules for computer vision tasks.

- Flexible Architectures: Easy to implement models with complex control flow like loops and conditionals.

- Lightweight Core: Designed to be easy to read and understand for technical users.

Pros

- The original pioneer of the dynamic graph approach that inspired PyTorch.

- Very strong for reinforcement learning and research involving dynamic inputs.

Cons

- Development has officially moved to a maintenance-only mode.

- Industry adoption is largely localized to specific research groups and the Japanese market.

Platforms / Deployment

- Linux / macOS

- Cloud

Security & Compliance

- Varies / N/A.

- Not publicly stated.

Integrations & Ecosystem

Historically influential, with a focus on research and high-performance computing.

- CuPy

- Intel MKL-DNN

- NVIDIA NCCL

- ONNX

Support & Community

Legacy community support is available, but new development is encouraged on other platforms.

#8 — Deeplearning4j

Eclipse Deeplearning4j (DL4J) is the first commercial-grade, open-source, distributed deep learning library written for Java and the JVM.

Key Features

- JVM Native: Built specifically for Java and Scala developers.

- Distributed on Spark: Native integration with Apache Spark for massive parallel training.

- DataVec: A tool for vectorizing data from various sources like images, text, and CSVs.

- ND4J: A powerful scientific computing library for Java, similar to NumPy.

- Model Import: Can import models trained in TensorFlow or Keras for execution on the JVM.

- Enterprise Focused: Designed for stability and integration with enterprise Java applications.

Pros

- The best choice for organizations with a heavy investment in Java infrastructure.

- Provides a full-stack solution for data science within the JVM.

Cons

- The deep learning community is Python-centric, so finding help and examples is harder.

- More verbose code compared to Python-based frameworks.

Platforms / Deployment

- Windows / Linux / macOS / Android

- Cloud / JVM

Security & Compliance

- Standard JVM security and enterprise Java protocols.

- Not publicly stated.

Integrations & Ecosystem

Designed to live within the Hadoop and Spark big data ecosystems.

- Apache Spark

- Apache Flink

- Hadoop

- Kafka

Support & Community

Professional support through Konduit and a dedicated community under the Eclipse Foundation.

#9 — PaddlePaddle

PaddlePaddle (Parallel Distributed Deep Learning) is a comprehensive platform developed by Baidu, featuring an industrial-grade framework and a rich ecosystem.

Key Features

- PaddleHub: A large repository of pre-trained models for easy deployment.

- PaddleServing: Specialized high-performance service for model deployment.

- Distributed Training Engine: Optimized for training massive models on large GPU clusters.

- Paddle Lite: A high-performance framework for mobile, embedded, and IoT devices.

- Model Compression: Built-in tools for quantization and pruning to make models smaller.

- Industry Focus: Features models specifically optimized for manufacturing and finance.
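
As a rough illustration of what quantization does to a model's weights, the sketch below maps floating-point values to 8-bit integers with a single symmetric scale factor and then reconstructs them. It is plain Python, not PaddlePaddle's compression API. The function names and the sample weights are assumptions made for illustration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map the stored integers back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.82, -1.27, 0.003, 0.5, -0.91]  # hypothetical layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now needs one byte instead of four, at the cost of a rounding error of at most half a quantization step. Small weights such as 0.003 collapse to zero, which is one reason production tools often add calibration or quantization-aware training.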

Pros

- Excellent performance for large-scale distributed training.

- Very strong support and optimization for Chinese language and industry needs.

Cons

- Documentation and community support outside of China are less extensive.

- Can be harder to find English-language tutorials for advanced features.

Platforms / Deployment

- Windows / Linux / macOS / iOS / Android

- Cloud / Edge / IoT

Security & Compliance

- Standard enterprise security controls.

- Not publicly stated.

Integrations & Ecosystem

Deeply integrated into the Baidu AI ecosystem and industrial software.

- Baidu Cloud

- PaddleNLP

- PaddleVideo

- EasyDL

Support & Community

Massive community support in Asia, with increasing efforts toward global documentation.

#10 — OneFlow

OneFlow is a high-performance deep learning framework specifically designed for massive-scale model training and distributed computing.

Key Features

- SBP (Split, Broadcast, Partial-sum): A unique abstraction for managing distributed data and model states.

- Actor System: Uses an actor-based architecture to manage asynchronous communication efficiently.

- Zero-Overhead Strategy: Minimizes the overhead between the framework and the hardware.

- Automatic Parallelism: Can automatically determine the best way to split a model across GPUs.

- OneFlow-Serving: Optimized for high-throughput model inference.

- Pythonic API: Maintains compatibility and a similar feel to PyTorch for easy transition.
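
The three SBP placements can be pictured with a small matrix multiplication spread over two simulated devices. This is a conceptual sketch in plain Python, not OneFlow's API: the helper names are hypothetical, and the "devices" are just list entries. Splitting the input by rows with the weight broadcast gives an output that is itself split. Splitting along the contracted dimension gives per-device partial sums that must be added to recover the result.

```python
def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def add(a, b):
    """Element-wise sum of two equally shaped matrices."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


x = [[1, 2], [3, 4]]
w = [[5, 6], [7, 8]]
expected = matmul(x, w)  # single-device reference result

# Split + Broadcast: each device gets some rows of x and a full copy of w.
x_rows = [x[:1], x[1:]]
split_out = [matmul(part, w) for part in x_rows]
gathered = split_out[0] + split_out[1]  # concatenate the row blocks

# Partial-sum: x is split by columns and w by rows, so each device holds a
# full-shaped but incomplete result that must be summed across devices.
x_cols = [[row[:1] for row in x], [row[1:] for row in x]]
w_rows = [w[:1], w[1:]]
partials = [matmul(xc, wr) for xc, wr in zip(x_cols, w_rows)]
reduced = add(partials[0], partials[1])
```

Both strategies reproduce the single-device result. They differ in what must be communicated afterwards: a concatenation in the first case, a sum in the second.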

Pros

- Superior performance for training extremely large models like LLMs.

- Simplifies the complex task of distributed training for engineers.

Cons

- A newer player in the market with a much smaller community.

- Lacks the extensive library of high-level modules found in TensorFlow or PyTorch.

Platforms / Deployment

- Linux

- Cloud / Data Center

Security & Compliance

- Standard cloud security integrations.

- Not publicly stated.

Integrations & Ecosystem

Focused on the high-end computing and massive-scale AI market.

- NVIDIA CUDA

- InfiniBand

- Docker

- ONNX

Support & Community

Technical support available through GitHub and a specialized community of high-performance computing experts.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| TensorFlow | Production / Ecosystem | Multi-Platform | Cloud, Edge, Web | TensorFlow Serving | 4.7/5 |
| PyTorch | Research / Flexibility | Multi-Platform | Cloud, Mobile | Dynamic Graphs | 4.8/5 |
| JAX | Scientific Research | Linux, macOS | Cloud | XLA Acceleration | 4.6/5 |
| Keras | Rapid Prototyping | Multi-Platform | Web, Mobile | High-level API | 4.9/5 |
| MXNet | Scalability / AWS | Multi-Platform | Cloud, Edge | Hybrid Front-end | 4.4/5 |
| CNTK | Speech / Text | Windows, Linux | Cloud, On-prem | BrainScript | 4.1/5 |
| Chainer | Reinforcement Learning | Linux, macOS | Cloud | Define-by-Run | 4.0/5 |
| DL4J | Enterprise Java | Multi-Platform | JVM, Spark | Native JVM Support | 4.3/5 |
| PaddlePaddle | Industrial Scale | Multi-Platform | Cloud, IoT | PaddleHub | 4.5/5 |
| OneFlow | Massive Models | Linux | Data Center | Auto Parallelism | N/A |

Evaluation & Scoring of Deep Learning Frameworks

The scoring below evaluates each framework against professional requirements for model development and production stability.

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| TensorFlow | 10 | 7 | 10 | 9 | 9 | 10 | 9 | 9.20 |
| PyTorch | 9 | 9 | 10 | 8 | 9 | 10 | 9 | 9.15 |
| JAX | 10 | 4 | 7 | 7 | 10 | 7 | 8 | 7.75 |
| Keras | 7 | 10 | 9 | 8 | 8 | 10 | 9 | 8.55 |
| MXNet | 9 | 6 | 9 | 8 | 10 | 8 | 7 | 8.15 |
| CNTK | 8 | 5 | 7 | 9 | 9 | 6 | 6 | 7.10 |
| Chainer | 7 | 7 | 6 | 7 | 8 | 5 | 6 | 6.60 |
| DL4J | 8 | 6 | 9 | 9 | 8 | 8 | 7 | 7.80 |
| PaddlePaddle | 9 | 7 | 8 | 8 | 9 | 8 | 8 | 8.20 |
| OneFlow | 9 | 5 | 6 | 7 | 10 | 6 | 7 | 7.25 |

Scoring Interpretation:

The “Weighted Total” balances features, performance, and accessibility. TensorFlow and PyTorch lead by a clear margin thanks to their broad support and comprehensive integration ecosystems. Keras scores well for teams that value development speed over low-level control.
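
The weighted total can be reproduced from the per-criterion scores and the percentage weights in the column headers. The sketch below is a minimal illustration in Python. The dictionary layout and the function name `weighted_total` are assumptions, and the JAX row is used as the worked example.

```python
# Percentage weights taken from the scoring table's column headers
WEIGHTS = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}


def weighted_total(scores):
    """Return the weighted score on a 0-10 scale, rounded to two decimals."""
    assert sum(WEIGHTS.values()) == 100
    # Integer percentages keep the arithmetic exact until the final division
    return round(sum(scores[k] * WEIGHTS[k] for k in WEIGHTS) / 100, 2)


# Scores from the JAX row of the table
jax_scores = {
    "core": 10, "ease": 4, "integrations": 7, "security": 7,
    "performance": 10, "support": 7, "value": 8,
}
print(weighted_total(jax_scores))  # 7.75
```

Changing the weights to match your own priorities (for example, raising Ease for a small team) is a quick way to see how sensitive the ranking is to the scoring scheme.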

Which Deep Learning Framework Is Right for You?

Solo / Freelancer

For an individual starting out, Keras and PyTorch are the best options. Keras offers the fastest way to build functioning models, while PyTorch provides the most intuitive “Python-like” experience for complex tasks.

SMB

Small and Medium Businesses should focus on PyTorch or TensorFlow. These platforms have the largest talent pools, meaning it will be easier and cheaper to hire engineers who can maintain and scale your AI systems.

Mid-Market

Companies with growing data needs should evaluate TensorFlow for its production-ready serving tools and MXNet if they are heavily invested in the AWS ecosystem.

Enterprise

For large-scale industrial applications, TensorFlow or PaddlePaddle offer the most robust distributed training and model management tools. If your enterprise is a “Java shop,” Deeplearning4j is the only logical choice to maintain architectural consistency.

Budget vs Premium

- Budget: All these frameworks are open-source and free, but PyTorch and TensorFlow offer the best “budget” value because of the massive amount of free training resources available.

- Premium: Managed services like Databricks, AWS SageMaker, or Google Vertex AI offer paid, fully managed environments for running these frameworks at scale.

Feature Depth vs Ease of Use

- Deep Depth: TensorFlow, JAX.

- Easy to Use: Keras, PyTorch.

Integrations & Scalability

- Top Integrations: TensorFlow, PyTorch.

- Top Scalability: OneFlow, PaddlePaddle, MXNet.

Security & Compliance Needs

Organizations with high security needs should prioritize TensorFlow or CNTK, as they have the longest histories of integration into enterprise-grade cloud security frameworks.

Frequently Asked Questions (FAQs)

1. What is the difference between machine learning and deep learning?

Machine learning is a broad field of AI where computers learn from data. Deep learning is a specific sub-field that uses multi-layered neural networks to solve complex problems like image and speech recognition.

2. Do I need a GPU to run these frameworks?

While you can run all of them on a CPU for learning, training professional-grade models on large datasets is practically impossible without a high-performance GPU or TPU.

3. Which framework is better for beginners?

Keras is widely considered the best for beginners due to its simple, modular API. PyTorch is the second-best choice for those who want to understand the underlying mechanics more clearly.

4. Can I move models between different frameworks?

Yes, the ONNX (Open Neural Network Exchange) format allows you to export a model from one framework and run it in another, though some complex custom layers may require manual adjustments.

5. What is “Transfer Learning”?

Transfer learning is the process of taking a model trained on a massive dataset (like all of Wikipedia) and fine-tuning it on your specific, smaller dataset. This is the standard way modern AI is built.

6. Are these frameworks used for AI in mobile apps?

Yes, TensorFlow Lite and PyTorch Mobile are specialized versions of these frameworks designed specifically to run efficiently on smartphones and tablets.

7. Why is Python the dominant language for these tools?

Python offers a perfect balance of readability for humans and the ability to connect to high-performance math libraries written in C++ and CUDA that handle the actual heavy lifting.

8. What is a “Computational Graph”?

A computational graph is a series of mathematical operations represented as nodes and edges. It allows the framework to understand how data flows and how to calculate gradients for training.
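
A computational graph and its gradient calculation can be sketched in a few dozen lines. The `Value` class below is a hypothetical, scalar-only illustration in plain Python, far simpler than what any framework ships. Each operation records its input nodes and local derivatives, and `backward` walks the graph in reverse to accumulate gradients with the chain rule.

```python
class Value:
    """A scalar node that remembers which nodes produced it."""

    def __init__(self, data, parents=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self.parents = parents          # edges to input nodes
        self.local_grads = local_grads  # d(self)/d(parent) for each edge

    def __add__(self, other):
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        return Value(self.data * other.data, (self, other), (other.data, self.data))

    def backward(self):
        # Topologically order the graph so each node's gradient is complete
        # before it is propagated to its parents.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for p in node.parents:
                    visit(p)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for parent, local in zip(node.parents, node.local_grads):
                parent.grad += local * node.grad  # chain rule


x = Value(3.0)
w = Value(2.0)
b = Value(1.0)
y = w * x + b  # graph: (w * x) -> (+ b) -> y
y.backward()
print(y.data, w.grad, x.grad, b.grad)  # 7.0 3.0 2.0 1.0
```

The graph here is built while the Python expression runs, which is the dynamic style. A static-graph framework would first record the same structure and then compile and execute it separately.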

9. How do I choose between TensorFlow and PyTorch?

Choose TensorFlow if you need a “full-stack” production environment with established industrial tools. Choose PyTorch if you need flexibility, easy debugging, and want to stay at the cutting edge of research.

10. Do these frameworks require a lot of data?

Deep learning generally requires much more data than traditional statistics. While transfer learning reduces this need, you still typically need thousands of examples for a model to become highly accurate.

Conclusion

The selection of a deep learning framework is a foundational choice that determines your team’s development speed and your system’s production stability. While TensorFlow and PyTorch currently dominate the market, the rise of specialized tools like JAX for research and PaddlePaddle for industrial scale shows that the field is still rapidly evolving. For most organizations, the decision will come down to a balance between “Ease of Use” and “Ecosystem Support.” We recommend starting with Keras or PyTorch to prove your AI concepts before scaling into the more complex, distributed capabilities of TensorFlow or OneFlow.