Introduction

Data observability is the organizational ability to understand, diagnose, and manage the health of data throughout its entire lifecycle. In a modern landscape where data pipelines have become increasingly complex and distributed, observability goes beyond simple monitoring. It encompasses a holistic view of data health through five key pillars: freshness, distribution, volume, schema, and lineage. As enterprises transition toward data-driven decision-making, the cost of “bad data”—broken dashboards, incorrect financial reports, or biased ML models—has become astronomical. Data observability tools provide the automated “eyes” that alert engineering teams to data downtime before it impacts the business.

This category is critical now because the volume of data being ingested has outpaced the ability of manual testing to keep up. With the rise of the modern data stack involving multiple transformations across various platforms, a failure in one upstream source can trigger a catastrophic “silent failure” downstream. Data observability platforms act as a proactive safety net, ensuring that data is not just present, but reliable, accurate, and timely.

Real-World Use Cases:

- Preventing “Silent” Pipeline Failures: Automatically detecting when a third-party API changes its schema, causing downstream SQL models to produce null values.

- SLA Management: Ensuring that executive dashboards are refreshed by 8 AM every morning by monitoring data freshness and ingestion delays.

- ML Model Integrity: Monitoring the distribution of features used in training sets to detect “drift” that could lead to inaccurate AI predictions.

- Cost Optimization: Identifying “zombie” tables or unused data assets that are consuming expensive storage and compute resources.
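
The first use case, catching an upstream schema change before it produces nulls downstream, comes down to comparing the expected column map against the observed one. A minimal Python sketch, assuming schemas are available as plain column-to-type dictionaries; the function name `diff_schema` and the sample columns are hypothetical and do not reflect any vendor's API:

```python
def diff_schema(expected: dict[str, str], observed: dict[str, str]) -> dict[str, list[str]]:
    """Compare two column->type mappings and report what changed."""
    added = sorted(set(observed) - set(expected))
    removed = sorted(set(expected) - set(observed))
    retyped = sorted(
        col for col in set(expected) & set(observed)
        if expected[col] != observed[col]
    )
    return {"added": added, "removed": removed, "retyped": retyped}


# Hypothetical example: a third-party API renamed one field and changed a type.
expected = {"order_id": "INTEGER", "amount": "NUMERIC", "customer_email": "TEXT"}
observed = {"order_id": "TEXT", "amount": "NUMERIC", "email": "TEXT"}

changes = diff_schema(expected, observed)
```

Here `changes` reports `email` as added, `customer_email` as removed, and `order_id` as retyped. A removed or retyped column is the kind of change that tends to surface downstream as unexpected nulls.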

Buyer Evaluation Criteria:

- Ease of Integration: How quickly the tool can connect to existing warehouses (Snowflake, BigQuery) and orchestrators (Airflow).

- Automated Lineage: The ability to automatically map data dependencies from source to BI tool.

- Anomaly Detection: The sophistication of machine learning models used to set baseline expectations without manual thresholding.

- Incident Management: Built-in workflows for alerting, triaging, and resolving data quality issues.

- Data Privacy: Whether the tool requires access to raw data or only monitors metadata to ensure compliance.

- Scalability: The platform’s ability to monitor thousands of tables without degrading performance or significantly increasing costs.

- Programmability: Support for “Data Quality as Code” via APIs or SDKs for developer-centric teams.

- Root Cause Analysis: Capabilities that help engineers quickly trace a failure back to the specific offending query or source.

Who These Tools Are For:

- Best for: Data engineers, analytics engineers, and data platform owners at mid-to-large enterprises who manage complex, multi-stage data pipelines and require high reliability for executive reporting.

- Not ideal for: Small teams with very simple, single-source data setups where a basic SQL test script or built-in warehouse monitoring is sufficient.

Key Trends in Data Observability Tools

- Shift from Reactive to Proactive: Modern tools are moving beyond alerting on failures to predicting potential “data downtime” by analyzing historical trends in ingestion and processing.

- Data Contracts Adoption: A growing trend where observability tools enforce “contracts” between data producers and consumers to prevent breaking changes at the source.

- GenAI-Assisted Root Cause Analysis: Leveraging large language models to explain why a query failed or why data distribution shifted, significantly reducing the Mean Time to Resolution (MTTR).

- Observability at the Source: Moving monitoring upstream into operational databases to catch errors before they even reach the analytical data warehouse.

- FinOps Integration: Platforms are increasingly adding features to monitor the “cost” of data quality, showing the financial impact of broken pipelines in terms of wasted compute.

- Open Standard Interoperability: Increased support for open standards like OpenLineage, allowing for a more unified view across different vendor tools.

- Self-Healing Pipelines: The emergence of experimental features that can automatically roll back a transformation or trigger a re-run if a data quality check fails.

- Metadata-Only Monitoring: To satisfy strict security requirements, tools are prioritizing “zero-trust” architectures that never actually see or store sensitive raw data.

How We Selected These Tools (Methodology)

To identify the leading players in the data observability space, we evaluated each tool against the following criteria:

- Market Adoption & Mindshare: We prioritized platforms that are frequently cited in industry surveys and have significant deployment footprints in major tech organizations.

- Feature Completeness: We looked for “end-to-end” solutions that cover all five pillars of observability (Freshness, Volume, Schema, Distribution, Lineage).

- Machine Learning Sophistication: We evaluated the accuracy of the anomaly detection algorithms and their ability to minimize “alert fatigue.”

- Integration Density: Preference was given to tools with a wide array of native connectors for the modern data stack (Snowflake, Databricks, dbt, Airflow, Looker).

- Security & Governance: We assessed the level of SOC 2 compliance and the robustness of role-based access controls (RBAC).

- Developer Experience: We factored in the availability of CLI tools, APIs, and documentation quality for teams that prefer code-based workflows.

- Segment Versatility: The list includes a balance of enterprise-grade heavyweights and leaner, SMB-focused solutions.

- Performance Impact: We analyzed whether the monitoring process introduces significant latency or compute overhead to the customer’s warehouse.

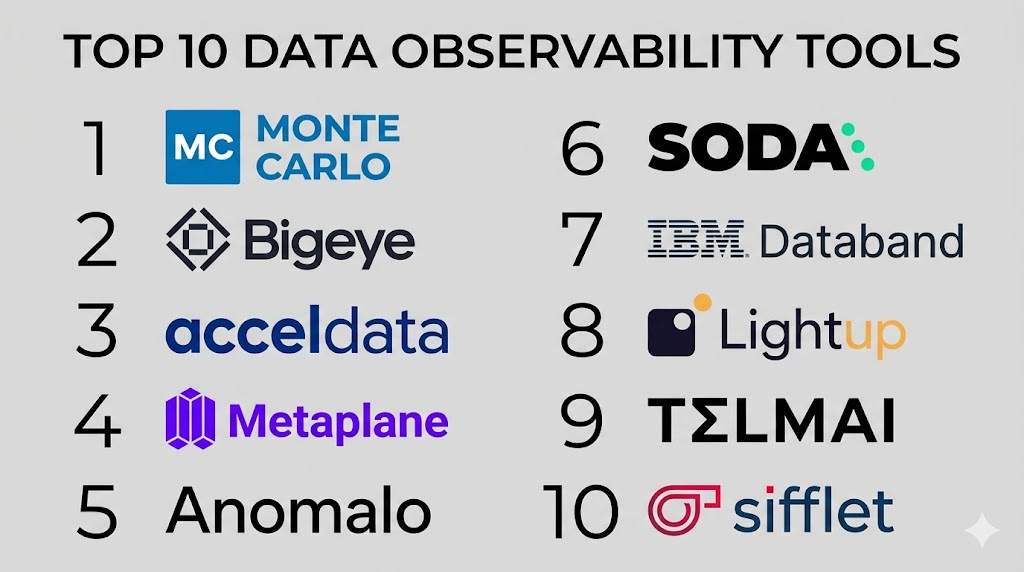

Top 10 Data Observability Tools

#1 — Monte Carlo

Short description:

Monte Carlo is often cited as the pioneer of the data observability category. It provides an end-to-end platform that uses machine learning to automatically learn about an organization’s data environment. It is built for large-scale data teams that need to reduce data downtime through automated monitoring, alerting, and cross-platform lineage.

Key Features

- Automated Anomaly Detection: Uses ML to monitor freshness, volume, and schema without requiring manual thresholds.

- End-to-End Lineage: Automatically maps dependencies from the operational database to the BI dashboard.

- Incident Management: Built-in dashboard for triaging, assigning, and resolving data issues.

- Data Health Insights: Provides executive-level reporting on data reliability and uptime across the org.

- Circuit Breakers: Integrates with orchestrators to stop pipelines if data quality tests fail.
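
The circuit-breaker idea can be illustrated independently of any vendor: a pipeline step raises an error when a quality check fails, so the orchestrator marks the task as failed and does not run what depends on it. The sketch below is a generic pattern in plain Python, not Monte Carlo's API; `DataQualityError`, `circuit_breaker`, and `run_pipeline` are hypothetical names:

```python
class DataQualityError(RuntimeError):
    """Raised to halt a pipeline when a data quality check fails."""


def circuit_breaker(checks: dict[str, bool]) -> None:
    """Raise if any named check failed; do nothing if all passed."""
    failed = sorted(name for name, passed in checks.items() if not passed)
    if failed:
        raise DataQualityError("Blocked by failed checks: " + ", ".join(failed))


def run_pipeline(checks: dict[str, bool]) -> str:
    circuit_breaker(checks)  # stop here if the data is bad
    return "published"       # stands in for the downstream load step
```

Calling `run_pipeline({"freshness": False, "row_count": True})` raises `DataQualityError` before the publish step runs, which is the behavior an orchestrator needs in order to skip downstream tasks.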

Pros

- Exceptional “set-it-and-forget-it” automated monitoring.

- Comprehensive lineage that covers the entire stack from source to BI.

- High-quality customer success and professional services.

Cons

- Pricing can be high for organizations with a massive number of tables.

- Initial setup of lineage for complex, legacy systems can take time.

Platforms / Deployment

- Web / Cloud

- Cloud (SaaS)

Security & Compliance

- SSO/SAML, MFA, RBAC, Encryption-at-rest.

- SOC 2 Type II, HIPAA, GDPR.

Integrations & Ecosystem

Monte Carlo features one of the most mature integration ecosystems in the data space.

- Snowflake, BigQuery, Redshift, Databricks.

- dbt, Airflow, Prefect, Dagster.

- Looker, Tableau, Power BI.

Support & Community

Premium enterprise support with dedicated account managers. They host an active community and a well-regarded industry blog on data engineering best practices.

#2 — Bigeye

Short description:

Bigeye is a data observability platform designed specifically for high-growth data teams and large enterprises. It focuses heavily on “data-level” observability, providing deep metrics on the actual values within columns. It is ideal for teams that need to ensure the accuracy of financial reports or machine learning models.

Key Features

- Automated Metric Suggestions: Analyzes your data and suggests the most relevant metrics (null rates, uniqueness, etc.) to monitor.

- Autometrics: Automatically tracks thousands of metrics across tables without manual configuration.

- Deep Value Monitoring: Detects anomalies in data distribution and outliers within specific columns.

- SLA Tracking: Allows teams to define and monitor specific data reliability SLAs for different business units.

- Delta Monitoring: Compares data across different environments (e.g., staging vs. production) to catch issues early.

Pros

- Strong focus on data “correctness” rather than just pipeline health.

- Extremely granular control over individual column metrics.

- User-friendly interface that appeals to both analysts and engineers.

Cons

- Can be overkill for teams that only care about basic pipeline uptime.

- The high volume of metrics can lead to alert noise if not properly tuned.

Platforms / Deployment

- Web

- Cloud (SaaS)

Security & Compliance

- SSO, RBAC, Data encryption.

- SOC 2 Type II compliant.

Integrations & Ecosystem

Focuses on the most popular cloud data warehouses and transformation layers.

- Snowflake, BigQuery, Redshift.

- dbt (cloud and core).

- Slack, PagerDuty, Microsoft Teams for alerting.

Support & Community

Solid documentation and a responsive support team. They actively engage with the data community through webinars and technical content.

#3 — Acceldata

Short description:

Acceldata is a comprehensive “Data Operations” platform that goes beyond simple observability to include compute performance and cost monitoring. It is designed for large enterprises running complex data platforms like Hadoop, Snowflake, or Databricks. It provides a “full-stack” view of data health, from the infrastructure layer to the data quality layer.

Key Features

- Multi-Layer Observability: Monitors data quality, pipeline performance, and infrastructure health in one view.

- Operational Intelligence: Provides insights into warehouse performance and query optimization.

- Data Reliability: Automated ML-based monitoring for data drift and schema changes.

- Cost Governance: Tracks and predicts data spending across cloud and on-premise environments.

- No-Code Rules: Allows business users to define data quality rules without writing SQL.

Pros

- Unique ability to correlate pipeline failures with infrastructure performance.

- Excellent for hybrid cloud or complex on-premise (Hadoop) environments.

- Comprehensive cost management features for Snowflake and Databricks.

Cons

- The platform is broad, which can make the UI feel complex for new users.

- Requires more configuration than “SaaS-only” observability tools.

Platforms / Deployment

- Web / Linux (Agents)

- Cloud / Self-hosted / Hybrid

Security & Compliance

- SSO/SAML, RBAC, Encryption-at-rest/transit.

- SOC 2, ISO 27001.

Integrations & Ecosystem

Strong support for both modern cloud stacks and legacy enterprise data systems.

- Snowflake, Databricks, Google Cloud.

- Hadoop (Cloudera/Hortonworks).

- Kafka, Spark, Airflow.

Support & Community

Enterprise-grade support tiers. They are well-regarded for their ability to help large organizations modernize their legacy data operations.

#4 — Metaplane

Short description:

Metaplane is often described as the “easiest to use” data observability tool, specifically targeting teams using the Modern Data Stack. It is built to be set up in minutes, providing automated monitoring for Snowflake, BigQuery, and other cloud warehouses. It is ideal for mid-market companies that need immediate visibility without a heavy implementation project.

Key Features

- Instant Setup: Connects to your data stack in minutes with automated initial profiling.

- Automated Baselines: Uses ML to automatically set expectations for freshness and volume.

- Downstream Impact Analysis: Shows which dashboards in Looker or Tableau will be affected by a table failure.

- Slack-First Workflow: Designed to alert and resolve issues directly within Slack channels.

- Metadata-Only Approach: Connects via metadata, ensuring raw data never leaves your environment.

Pros

- Extremely fast time-to-value for small to mid-sized teams.

- Clean, intuitive UI that requires very little training.

- Affordable entry-point pricing compared to enterprise rivals.

Cons

- Less granular control over custom complex rules than some enterprise tools.

- Lineage depth might not be sufficient for highly complex, multi-hop legacy pipelines.

Platforms / Deployment

- Web

- Cloud (SaaS)

Security & Compliance

- SSO, RBAC, Encryption.

- SOC 2 Type II compliant.

Integrations & Ecosystem

Deeply integrated with the “Modern Data Stack” favorites.

- Snowflake, BigQuery, Redshift, MotherDuck.

- dbt, Fivetran, Airbyte.

- Looker, Tableau, Sigma, Mode.

Support & Community

Highly responsive support, often via shared Slack channels. They have built a strong community of “Analytics Engineers” through their content and podcasts.

#5 — Anomalo

Short description:

Anomalo focuses on the “unsupervised” detection of data quality issues. It is designed to find “the data problems you didn’t know you had.” By using deep learning to profile every table, it can detect subtle shifts in data distribution and outliers that traditional rule-based tools would miss. It is highly favored by data science teams.

Key Features

- Unsupervised Learning: Automatically builds models of your data to detect anomalies without any manual input.

- Root Cause Analysis: Provides a visualization of exactly which rows and columns contributed to an anomaly.

- Validation Rules: Allows for “low-code” creation of specific business logic tests.

- Data Freshness & Volume: Standard observability pillars handled with high precision.

- Visual Data Profiling: Generates rich visualizations for every table to help users understand data distribution.
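
To make "distribution shift" concrete, one widely used generic statistic is the Population Stability Index (PSI), which compares how two samples spread across the same bins. The sketch below shows that statistic only; it is not Anomalo's method, and the function name `psi`, the bin count, and the `1e-6` floor are assumptions made for illustration:

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]        # roughly uniform on [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]   # mass moved to the upper half
```

Identical samples give a PSI of zero, while `psi(baseline, shifted)` is large because half of the baseline bins are now empty. A commonly cited rule of thumb treats values above roughly 0.25 as a significant shift, though the right threshold depends on the data.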

Pros

- Industry-leading ability to find subtle data drift and distribution issues.

- Minimal manual configuration required to start finding real bugs.

- Excellent explanatory visualizations for why an anomaly occurred.

Cons

- Higher compute cost on the warehouse due to the deep profiling required.

- May be more complex for business users than simple “uptime” monitors.

Platforms / Deployment

- Web

- Cloud (SaaS) / Private VPC

Security & Compliance

- SSO, MFA, RBAC, Encryption.

- SOC 2 Type II compliant.

Integrations & Ecosystem

Tightly integrated with enterprise cloud data platforms.

- Snowflake, BigQuery, Databricks.

- dbt, Airflow.

- Slack, PagerDuty for alerting.

Support & Community

Provides dedicated engineering support. The community is largely composed of data scientists and senior data engineers.

#6 — Soda

Short description:

Soda is a developer-centric data observability platform that emphasizes “Data Quality as Code.” It allows teams to define checks using a human-readable language (SodaCL) that can be embedded into Git workflows and CI/CD pipelines. It is the go-to choice for engineering teams that want to treat data quality like software quality.

Key Features

- SodaCL: A YAML-based domain-specific language for defining data quality checks.

- Soda Cloud: A centralized platform for managing alerts, history, and team collaboration.

- Git Integration: Allows checks to be version-controlled and run as part of the software development lifecycle.

- Self-Serve Data Contracts: Enables teams to publish and enforce data quality expectations.

- Open Source Core: A free-to-use CLI and library for local testing.
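
The "Data Quality as Code" idea can be sketched without any specific tool: checks are declared as data, stored in version control, and evaluated in CI. The example below is plain Python written for illustration; it is not SodaCL syntax and does not use Soda's library, and `Check`, `CHECKS`, and `run_checks` are hypothetical names:

```python
from dataclasses import dataclass
from typing import Callable

Row = dict[str, object]


@dataclass(frozen=True)
class Check:
    name: str
    predicate: Callable[[list[Row]], bool]


# Checks live in version control next to the pipeline code.
CHECKS = [
    Check("row_count > 0", lambda rows: len(rows) > 0),
    Check("order_id has no nulls",
          lambda rows: all(r.get("order_id") is not None for r in rows)),
    Check("order_id is unique",
          lambda rows: len({r.get("order_id") for r in rows}) == len(rows)),
]


def run_checks(rows: list[Row], checks: list[Check] = CHECKS) -> list[str]:
    """Return the names of failed checks; an empty list means the data passed."""
    return [c.name for c in checks if not c.predicate(rows)]
```

In a CI job, a non-empty result from `run_checks` would typically translate into a non-zero exit code so that the merge or deployment is blocked.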

Pros

- Perfect for teams that prioritize automation and CI/CD.

- SodaCL is very intuitive and easy for both engineers and analysts to read.

- Offers a free open-source tier for getting started.

Cons

- Requires more “coding” effort than purely automated ML-based tools.

- The UI for non-technical users is not as robust as some “SaaS-first” competitors.

Platforms / Deployment

- CLI / Web / Python Library

- Cloud / Self-hosted (OSS)

Security & Compliance

- SSO, RBAC, Encryption.

- SOC 2 Type II (Cloud).

Integrations & Ecosystem

Extensive integrations across the developer stack and data warehouse layer.

- dbt, Airflow, GitHub Actions.

- Snowflake, BigQuery, Spark, Athena.

- Slack, MS Teams.

Support & Community

Extremely active Slack community and open-source contributor base. Professional support is available for Cloud customers.

#7 — IBM Databand

Short description:

Databand, an IBM company, focuses on “Pipeline Observability.” While other tools focus on the data in the warehouse, Databand focuses on the processes that move the data. It provides deep visibility into Airflow, Spark, and Snowflake to identify where a pipeline is lagging or failing before the data is even written.

Key Features

- Run-Level Observability: Tracks the execution of individual tasks and jobs in real-time.

- Impact Mapping: Shows how a failed Airflow task impacts downstream datasets.

- Data Profiling: Integrated data quality checks within the pipeline execution.

- Alerting & Incident Management: Centralized hub for managing pipeline-related issues.

- Metadata Integration: Collects logs and metrics from distributed compute environments with minimal overhead.

Pros

- Unrivaled for monitoring complex Airflow and Spark environments.

- Helps identify performance bottlenecks in the pipeline, not just data errors.

- Backed by the stability and reach of IBM’s enterprise support.

Cons

- The UI can feel “enterprise-heavy” and less modern than startup competitors.

- Less focus on “business-level” data distribution anomalies compared to Anomalo.

Platforms / Deployment

- Web / Python SDK

- Cloud (SaaS) / Self-hosted

Security & Compliance

- SSO/SAML, MFA, RBAC.

- SOC 2, ISO 27001 (via IBM).

Integrations & Ecosystem

Focused on the engineering and orchestration layer.

- Apache Airflow (Deep integration), MWAA.

- Apache Spark, Databricks.

- Snowflake, Redshift.

Support & Community

Access to IBM’s global support infrastructure. Documentation is comprehensive and enterprise-focused.

#8 — Lightup

Short description:

Lightup is a high-performance data observability tool designed for massive data volumes. It utilizes a “push-down” architecture that runs queries directly on the data warehouse, minimizing data movement. It is optimized for teams that need to monitor millions of metrics across hundreds of petabytes of data without breaking the bank.

Key Features

- Push-down Execution: Runs checks inside your warehouse to avoid data transfer costs.

- AI-Powered Monitoring: Automatically learns data patterns to detect outliers and drift.

- Data Quality Indicators (DQIs): Standardized way to measure and report data health across the org.

- SQL-First Custom Checks: Allows for easy creation of complex rules using standard SQL.

- Self-Service Dashboards: Provides visibility into data health for non-technical stakeholders.

Pros

- Highly cost-efficient for organizations with extreme data scale.

- Minimal configuration needed to start monitoring at scale.

- Strong focus on data privacy (data stays in your warehouse).

Cons

- Lineage features are not as visually advanced as Monte Carlo’s.

- Smaller community and third-party plugin ecosystem than the market leaders.

Platforms / Deployment

- Web

- Cloud (SaaS) / Private VPC

Security & Compliance

- SSO, RBAC, Encryption.

- SOC 2 compliant.

Integrations & Ecosystem

Focuses on high-performance cloud warehouses.

- Snowflake, BigQuery, Databricks.

- PostgreSQL, MySQL (Operational monitoring).

- dbt, Airflow.

Support & Community

Responsive technical support. The community is growing among high-scale enterprise data teams.

#9 — Telmai

Short description:

Telmai is a data observability platform built for the “Open Data Architecture.” It is designed to work across data lakes, warehouses, and streaming sources without requiring a centralized repository. It specializes in monitoring heterogeneous data formats (JSON, Parquet) and complex nested structures, making it a favorite for modern data lake environments.

Key Features

- Cross-Source Observability: Monitors data as it moves between lakes (S3/GCS) and warehouses.

- Deep Schema Monitoring: Detects changes in complex, nested JSON or Avro schemas.

- Time-Travel Analysis: Compares current data against historical snapshots to find subtle changes.

- Anomaly Discovery: ML-based detection of volume, freshness, and value drift.

- Data Lineage: Visualizes the flow of data across diverse storage systems.

Pros

- Outstanding for organizations using Data Lakes (Iceberg, Hudi, Delta Lake).

- Handles unstructured and semi-structured data better than most rivals.

- Architecture-agnostic; doesn’t force you into a specific cloud stack.

Cons

- Can be more complex to configure than “Snowflake-only” tools.

- The alerting system is powerful but requires tuning to avoid noise.

Platforms / Deployment

- Web

- Cloud (SaaS) / Hybrid

Security & Compliance

- SSO, RBAC, Encryption.

- SOC 2 compliant.

Integrations & Ecosystem

Broad support for the big data ecosystem.

- AWS S3, Google Cloud Storage, Azure Data Lake.

- Snowflake, BigQuery, Redshift, Databricks.

- Apache Kafka, Spark.

Support & Community

High-touch engineering support. They are thought leaders in the “Data Lakehouse” observability space.

#10 — Sifflet

Short description:

Sifflet is a European-based data observability platform that emphasizes a “Full-Stack” approach, connecting data quality directly to business outcomes. It provides a unique balance of automated ML-based monitoring and a highly collaborative interface for data and business teams to resolve issues together.

Key Features

- Full-Stack Lineage: Connects upstream data sources all the way to final business metrics in BI tools.

- Automated Data Monitoring: Out-of-the-box checks for the 5 pillars of observability.

- Business Impact Assessment: Shows exactly how a data failure affects specific company KPIs.

- Data Catalog Integration: Enriches existing data catalogs with real-time health scores.

- Collaboration Hub: Integrated workflows for teams to document and solve data incidents.

Pros

- Best-in-class UI/UX for collaborative incident resolution.

- Excellent bridge between technical data health and business impact.

- Very strong lineage visualization.

Cons

- Smaller market presence in North America compared to Monte Carlo.

- Some advanced developer features (like CLI) are still evolving.

Platforms / Deployment

- Web

- Cloud (SaaS)

Security & Compliance

- SSO, RBAC, Encryption.

- GDPR compliant (Strong focus), SOC 2.

Integrations & Ecosystem

Integrates with the modern data stack and popular BI tools.

- Snowflake, BigQuery, Redshift, Databricks.

- dbt, Airflow, Dagster.

- Tableau, Looker, Power BI.

Support & Community

Dedicated support team with a strong focus on the European market. Very active in modern data stack communities.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| Monte Carlo | Large Enterprises | Web, API | Cloud | End-to-End Lineage | 4.8/5 |
| Bigeye | Deep Value Accuracy | Web | Cloud | Autometrics | 4.6/5 |
| Acceldata | Hybrid/Compute Obs. | Linux, Web | Hybrid | Infrastructure Correlation | 4.5/5 |
| Metaplane | SMB / MDS Teams | Web | Cloud | 10-Minute Setup | 4.9/5 |
| Anomalo | Data Science / ML | Web | Cloud | Unsupervised Discovery | 4.7/5 |
| Soda | Dev-Centric / CI/CD | CLI, Python | Hybrid | SodaCL (Data as Code) | 4.6/5 |
| IBM Databand | Pipeline/Airflow | Python SDK, Web | Cloud | Run-Level Visibility | 4.4/5 |
| Lightup | High-Scale / Low-Cost | Web | Cloud | Push-down Execution | 4.5/5 |
| Telmai | Data Lakes / JSON | Web | Hybrid | Cross-Source Monitoring | 4.6/5 |
| Sifflet | Business Alignment | Web | Cloud | KPI Impact Mapping | 4.7/5 |

Evaluation & Scoring of Data Observability Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Monte Carlo | 10 | 7 | 10 | 10 | 8 | 10 | 6 | 8.75 |
| Bigeye | 9 | 8 | 9 | 9 | 8 | 9 | 7 | 8.45 |
| Acceldata | 10 | 5 | 8 | 9 | 7 | 9 | 7 | 8.00 |
| Metaplane | 7 | 10 | 9 | 9 | 9 | 9 | 9 | 8.65 |
| Anomalo | 10 | 7 | 8 | 9 | 6 | 8 | 7 | 8.10 |
| Soda | 8 | 7 | 10 | 9 | 9 | 8 | 9 | 8.50 |
| IBM Databand | 9 | 6 | 9 | 10 | 8 | 9 | 6 | 8.10 |
| Lightup | 8 | 8 | 7 | 9 | 10 | 8 | 9 | 8.30 |
| Telmai | 9 | 6 | 8 | 9 | 8 | 9 | 8 | 8.15 |
| Sifflet | 9 | 9 | 9 | 9 | 8 | 9 | 8 | 8.75 |

How to Interpret These Scores:

The scoring is comparative and weighted toward modern business needs. “Core Features” (25%) reflects the breadth across the 5 pillars. “Ease of Use” (15%) and “Integrations” (15%) determine how quickly a team can actually derive value. A tool like Monte Carlo scores high on features and support but lower on value due to its premium price. Metaplane scores lower on core feature depth compared to giants but exceptionally high on ease and value for its target market.
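
The weighted total is a plain weighted sum of the seven category scores. A short Python sketch of that arithmetic, using the weights from the table header and Bigeye's row as the worked example; the dictionary keys and the function name `weighted_total` are chosen here for illustration:

```python
WEIGHTS = {
    "core": 0.25, "ease": 0.15, "integrations": 0.15, "security": 0.10,
    "performance": 0.10, "support": 0.10, "value": 0.15,
}


def weighted_total(scores: dict[str, float]) -> float:
    """Weighted sum of 0-10 category scores, rounded to two decimals."""
    return round(sum(WEIGHTS[k] * scores[k] for k in WEIGHTS), 2)


# Bigeye's row from the scoring table.
bigeye = {"core": 9, "ease": 8, "integrations": 9, "security": 9,
          "performance": 8, "support": 9, "value": 7}

print(weighted_total(bigeye))  # 8.45
```

Because the weights sum to 1.0, the total stays on the same 0 to 10 scale as the individual scores.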

Which Data Observability Tool Is Right for You?

Solo / Freelancer

If you are managing a few data pipelines for a client, you likely don’t need a full-blown observability platform. However, if you want a professional setup, the open-source Soda Core is your best bet. It’s free, scriptable, and allows you to prove to your clients that the data you are delivering is accurate.

SMB

For small-to-mid-sized businesses with a lean data team, Metaplane is the standout choice. Its rapid setup and Slack-centric workflow mean you can have observability in place before your first major dashboard outage, without needing a dedicated engineer to manage the tool.

Mid-Market

Companies with growing data teams and increasing complexity should look at Soda or Sifflet. Soda is excellent if your team is engineering-heavy and wants to build data quality into their CI/CD. Sifflet is superior if you need to demonstrate the business impact of data quality to non-technical stakeholders.

Enterprise

For global organizations with petabyte-scale data and hundreds of engineers, Monte Carlo or Acceldata are the primary contenders. Monte Carlo provides the most complete “turnkey” solution with deep lineage. Acceldata is the better choice if you have a hybrid environment and need to monitor compute performance alongside data health.

Budget vs Premium

- Budget: Soda (OSS) and Metaplane offer the most accessible entry points.

- Premium: Monte Carlo and Anomalo are premium investments that pay off through advanced ML and high-touch support for complex environments.

Feature Depth vs Ease of Use

If you need deep, unsupervised discovery of data anomalies, Anomalo is the feature-depth leader. If you just want to know when a table fails to refresh with zero setup friction, Metaplane wins on ease of use.

Integrations & Scalability

Lightup and Telmai are built specifically for scalability in massive or heterogeneous data environments. If your stack is strictly the Modern Data Stack (Snowflake/dbt), Monte Carlo has the most mature integrations.

Security & Compliance Needs

Organizations with strict “Zero Trust” requirements should prioritize Lightup or Metaplane: Lightup’s push-down execution and Metaplane’s metadata-only approach both keep raw, sensitive data inside the secure warehouse environment.

Frequently Asked Questions (FAQs)

1. What is the difference between data monitoring and data observability?

Data monitoring is reactive and typically checks for known failure modes using pre-defined rules (e.g., “Is this column null?”). Data observability is proactive and uses machine learning to understand the “unknown unknowns”—detecting anomalies in data behavior that you wouldn’t have thought to write a rule for.

2. Does data observability replace data testing tools like dbt tests?

No, they are complementary. Data testing (like dbt or Great Expectations) is used to validate known business logic during the transformation phase. Data observability acts as a continuous safety net across the entire pipeline, catching issues that occur in production after tests have already passed.

3. How much compute overhead does an observability tool add to my warehouse?

This varies significantly by tool. “Push-down” architectures like Lightup or metadata-only tools like Metaplane add very little overhead. Deep profiling tools like Anomalo add more compute cost because they run complex queries to understand data distribution; teams typically weigh that cost against the cost of acting on bad data.

4. Can these tools help with GDPR and data privacy compliance?

Yes, many tools help by identifying where PII (Personally Identifiable Information) exists through schema monitoring and data profiling. They can also ensure that data is not accidentally leaked into unauthorized tables and provide audit logs showing who accessed what data and when.

5. How long does it take to implement a data observability platform?

Lightweight SaaS tools like Metaplane can be connected and providing initial alerts in under 30 minutes. Enterprise-wide deployments involving lineage mapping across thousands of tables and integration with legacy on-premise systems can take several weeks or months of phased rollout.

6. What are the “5 Pillars of Data Observability”?

The five pillars are Freshness (is the data up to date?), Distribution (is the data within expected ranges?), Volume (did we get the expected amount of data?), Schema (did the table structure change?), and Lineage (where did this data come from and where is it going?).
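
Two of these pillars, freshness and volume, reduce to simple comparisons once the relevant metadata is available. A minimal Python sketch, assuming the last load timestamp and row count have already been fetched from the warehouse; the function names and the 20% default tolerance are illustrative assumptions:

```python
from datetime import datetime, timedelta, timezone


def is_fresh(last_loaded_at: datetime, max_age: timedelta, now: datetime) -> bool:
    """Freshness: the newest load must be no older than max_age."""
    return now - last_loaded_at <= max_age


def volume_ok(row_count: int, expected: int, tolerance: float = 0.2) -> bool:
    """Volume: the row count must fall within +/- tolerance of the expected count."""
    return abs(row_count - expected) <= tolerance * expected


# Hypothetical 8 AM check against a table that should refresh every 6 hours.
now = datetime(2024, 1, 1, 8, 0, tzinfo=timezone.utc)
fresh = is_fresh(now - timedelta(hours=2), timedelta(hours=6), now)
stale = is_fresh(now - timedelta(hours=7), timedelta(hours=6), now)
```

Here `fresh` is `True` and `stale` is `False`. Observability platforms generally learn such thresholds from history rather than hard-coding them, but the underlying comparison is the same.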

7. Do I need to be a coder to use these tools?

It depends on the tool. Metaplane and Bigeye are very user-friendly for analysts. Soda and Databand are designed with a “code-first” approach for engineers. Most modern platforms offer a mix, allowing engineers to use APIs while analysts use the web-based UI.

8. How do these tools handle “Alert Fatigue”?

Leading tools use machine learning to filter out “noise.” Instead of alerting every time a value is slightly off, they build statistical baselines and only alert when a shift is statistically significant. They also group related failures together into single incidents to prevent a flood of individual notifications.
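
The baseline idea can be shown with the simplest possible model, a z-score against recent history. This is a sketch of the principle only; commercial tools use more sophisticated models that account for seasonality and trend. The function name `is_anomalous` and the threshold of 3 standard deviations are assumptions:

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], value: float, threshold: float = 3.0) -> bool:
    """Flag value only if it deviates from the historical mean by more than
    `threshold` sample standard deviations. Needs at least two history points."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical daily row counts for one table.
daily_rows = [1000, 1010, 990, 1005, 995, 1002, 998]
```

With this history, a count of 1008 stays within the baseline and raises no alert, while a count of 600 is flagged. A fixed rule such as "alert if the count is not exactly 1000" would have fired on every one of these days.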

9. What is “Data Downtime” and how is it measured?

Data downtime refers to the total time that data is broken, missing, or inaccurate. It is typically measured by the Mean Time to Detection (how long until we knew it was broken) plus the Mean Time to Resolution (how long until it was fixed). Observability tools aim to minimize both.
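
The two components can be computed directly from incident timestamps. A small Python sketch, assuming each incident is recorded as an (occurred, detected, resolved) tuple; the tuple layout, the function name `mean_times`, and the sample incidents are hypothetical:

```python
from datetime import datetime, timedelta

Incident = tuple[datetime, datetime, datetime]  # (occurred, detected, resolved)


def mean_times(incidents: list[Incident]) -> tuple[timedelta, timedelta]:
    """Return (mean time to detection, mean time to resolution)."""
    n = len(incidents)
    mttd = sum((d - o for o, d, _ in incidents), timedelta()) / n
    mttr = sum((r - d for _, d, r in incidents), timedelta()) / n
    return mttd, mttr


day = datetime(2024, 1, 1)
incidents = [
    (day.replace(hour=2), day.replace(hour=6), day.replace(hour=8)),
    (day.replace(hour=10), day.replace(hour=11), day.replace(hour=15)),
]

mttd, mttr = mean_times(incidents)
average_downtime = mttd + mttr
```

For these two incidents the mean time to detection is 2.5 hours, the mean time to resolution is 3 hours, and the average downtime per incident is 5.5 hours.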

10. Can I use these tools with streaming data like Kafka?

Yes, tools like Telmai and Acceldata have native support for monitoring streaming data. They can check for schema changes and data quality issues in real-time as events flow through the system, rather than waiting for the data to be written to a warehouse.

Conclusion

Data observability has moved from a “nice-to-have” feature to a critical component of the enterprise data stack. As the complexity of data pipelines continues to increase, the ability to detect and resolve issues before they reach the consumer is what separates high-performing data teams from those constantly stuck in “firefighting” mode. Whether you choose a highly automated enterprise giant like Monte Carlo, a developer-centric framework like Soda, or a rapid-setup tool like Metaplane, the goal remains the same: building trust in data.

The “best” tool is ultimately the one that your team will actually use. A perfect technical solution that is ignored due to alert fatigue or a complex UI is no solution at all. We recommend starting with a clear audit of your most critical data assets, then running a pilot with one or two tools that align with your team’s technical level and your organization’s budget. By investing in observability, you aren’t just buying a tool; you are buying the confidence that your business is being run on a foundation of reliable information.