Introduction

Vector database platforms are specialized databases optimized for storing, indexing, and searching high-dimensional vector data. They are increasingly used in AI and machine learning applications such as semantic search, recommendation systems, computer vision, and natural language processing (NLP). Unlike traditional databases, which match on exact values, vector databases are built for similarity search: they find the nearest neighbors of a query vector under a distance metric such as cosine similarity or Euclidean distance.
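
To make the idea of similarity search concrete, here is a minimal sketch in plain Python. It computes cosine similarity and Euclidean distance and runs an exact (brute-force) nearest neighbor search. The three-dimensional vectors and their labels are made up for illustration; real embeddings usually have hundreds of dimensions, and production platforms replace the full scan with an index.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def euclidean_distance(a, b):
    """Straight-line distance between two vectors (0.0 means identical)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def nearest_neighbors(query, vectors, k=2):
    """Exact search: score every stored vector, keep the k most similar."""
    ranked = sorted(
        vectors.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:k]]

# Hypothetical toy "embeddings" (assumed values, not from any real model).
docs = {
    "cat": [0.9, 0.1, 0.0],
    "kitten": [0.8, 0.2, 0.1],
    "car": [0.0, 0.1, 0.9],
}
result = nearest_neighbors([1.0, 0.0, 0.0], docs, k=2)
print(result)  # ['cat', 'kitten']
```

The brute-force scan grows linearly with the number of stored vectors, which is the cost that the indexing techniques discussed later are meant to avoid.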

Real-world use cases include semantic search over unstructured text, AI-powered recommendation engines, image and video similarity searches, embedding storage for machine learning models, and retrieval-augmented generation (RAG) pipelines for LLMs. Buyers evaluating vector databases should consider query performance, indexing techniques, scalability, cloud and hybrid deployment options, integration with ML frameworks, support for embeddings, API availability, security, and cost.

Best for: AI engineers, data scientists, ML/LLM developers, enterprises managing large-scale embeddings, and organizations requiring high-speed similarity search.

Not ideal for: traditional relational data workloads, small projects without embeddings, or teams without AI/ML use cases.

Key Trends in Vector Database Platforms

- Increasing adoption for LLM and semantic search applications.

- Cloud-native and fully managed vector database services.

- Integration with ML/AI pipelines and embedding generation frameworks.

- High-performance approximate nearest neighbor (ANN) search techniques.

- Hybrid storage for memory-optimized and disk-based vectors.

- Scalable clustering for multi-region high availability.

- Support for open-source and commercial frameworks.

- AI-driven performance optimization and query acceleration.

- Security features: encryption, RBAC, and audit logging.

- Subscription and pay-as-you-go models for cloud deployment.
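
The approximate nearest neighbor (ANN) trend above can be illustrated with a small sketch. The example uses random-hyperplane hashing, one of several ANN families; it is not the algorithm of any specific platform in this list, and the data, dimensions, and function names are assumptions chosen for illustration. The idea is to group vectors into buckets so that a query only compares against its own bucket instead of the whole collection.

```python
import random

def make_hyperplanes(dim, n_planes, seed=0):
    """Draw random hyperplanes (as normal vectors); seeded so runs repeat."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_planes)]

def signature(vec, planes):
    """One bit per hyperplane: which side of the plane the vector falls on."""
    return tuple(sum(v * p for v, p in zip(vec, plane)) >= 0 for plane in planes)

def build_index(vectors, planes):
    """Group vector ids into buckets keyed by their signature."""
    buckets = {}
    for name, vec in vectors.items():
        buckets.setdefault(signature(vec, planes), []).append(name)
    return buckets

def ann_search(query, vectors, buckets, planes, k=1):
    """Rank only the vectors in the query's bucket, by dot product."""
    candidates = buckets.get(signature(query, planes), [])

    def dot(name):
        return sum(q * x for q, x in zip(query, vectors[name]))

    return sorted(candidates, key=dot, reverse=True)[:k]

# Hypothetical toy data: 200 random 8-dimensional vectors.
rng = random.Random(42)
vectors = {f"v{i}": [rng.gauss(0.0, 1.0) for _ in range(8)] for i in range(200)}
planes = make_hyperplanes(dim=8, n_planes=4)
buckets = build_index(vectors, planes)

# Querying with a stored vector always lands in that vector's own bucket.
candidates = buckets[signature(vectors["v7"], planes)]
top = ann_search(vectors["v7"], vectors, buckets, planes, k=1)
```

With 4 hyperplanes there are at most 16 buckets, so each query scans only a fraction of the data. The trade-off is that a true neighbor sitting just across a hyperplane can be missed, which is why such search is called approximate.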

How We Selected These Tools (Methodology)

- Evaluated market adoption among enterprises and AI startups.

- Assessed feature completeness: ANN algorithms, query speed, API support, and scaling.

- Reviewed performance benchmarks and reliability signals.

- Examined integrations with ML/AI frameworks and pipelines.

- Considered security, compliance, and access controls.

- Evaluated ease of deployment and operational simplicity.

- Focused on multi-cloud and hybrid deployment support.

- Prioritized automation, monitoring, and performance tuning features.

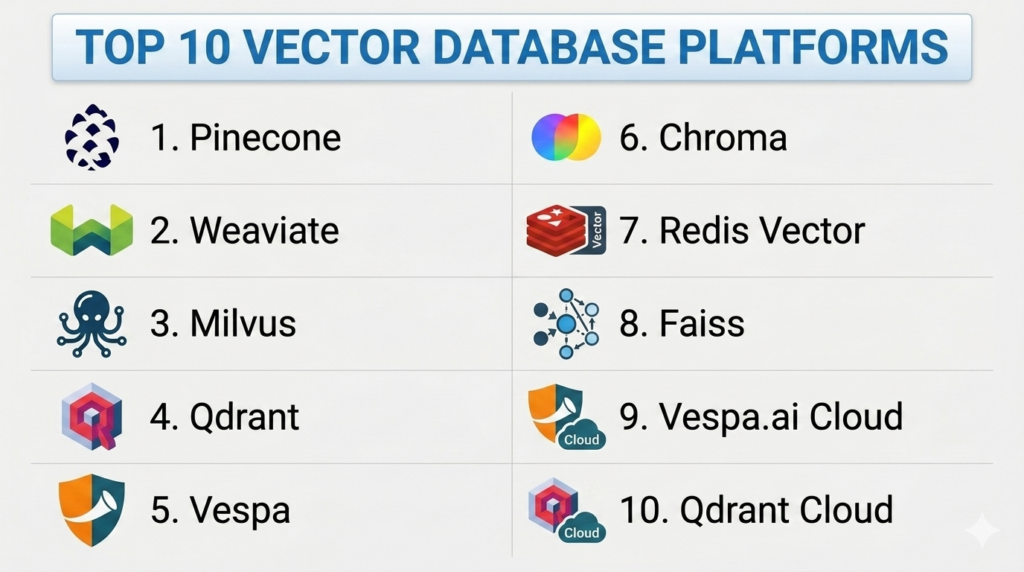

Top 10 Vector Database Platforms

#1 — Pinecone

Short description: Pinecone is a fully managed vector database optimized for high-speed similarity search, embeddings storage, and ML-powered applications.

Key Features

- Fully managed cloud-native vector database.

- High-performance ANN search for large-scale embeddings.

- Multi-dimensional indexing with low-latency queries.

- Integration with ML frameworks and LLM pipelines.

- Automatic scaling and replication.

Pros

- Fully managed and highly scalable.

- Easy integration with AI/ML workflows.

Cons

- Limited control over low-level database internals.

- Pricing scales with query volume and vector storage.

Platforms / Deployment

- Cloud (AWS, GCP)

- Cloud-native

Security & Compliance

- TLS encryption in transit; AES encryption at rest

- ISO 27001, SOC 2

Integrations & Ecosystem

- TensorFlow, PyTorch, OpenAI embeddings

- APIs for Python, JavaScript

- Cloud analytics and orchestration tools

Support & Community

- Vendor support, documentation, and active community.

#2 — Weaviate

Short description: Weaviate is an open-source, cloud-native vector database providing semantic search, AI integration, and scalable ANN search for embeddings.

Key Features

- Open-source vector database with cloud-managed options.

- Built-in ML model support and semantic search.

- Multi-tenancy and high availability.

- Graph-like queries over vector and structured data.

- RESTful and GraphQL APIs.

Pros

- Open-source flexibility with enterprise options.

- Supports hybrid search combining vectors and structured data.

Cons

- Enterprise-grade features may require paid subscriptions.

- Advanced setup can be complex for beginners.

Platforms / Deployment

- Windows / Linux / Cloud

- On-premises / Cloud / Hybrid

Security & Compliance

- Role-based access, TLS encryption

- SOC 2: not publicly stated

Integrations & Ecosystem

- OpenAI, HuggingFace, TensorFlow embeddings

- Cloud deployment on AWS, GCP, Azure

- APIs for multiple languages

Support & Community

- Open-source community and professional support options.

#3 — Milvus

Short description: Milvus is an open-source vector database optimized for large-scale embedding storage and high-performance similarity searches.

Key Features

- Scalable, distributed vector database.

- Multiple ANN indexing algorithms.

- High-throughput and low-latency search.

- Cloud and on-premises deployment.

- Integration with AI frameworks for embeddings.

Pros

- Highly scalable and suitable for large datasets.

- Open-source with active community contributions.

Cons

- Requires operational expertise for on-prem deployment.

- Enterprise support requires a paid cloud or commercial offering.

Platforms / Deployment

- Windows / Linux / Cloud

- On-premises / Cloud / Hybrid

Security & Compliance

- SSL/TLS encryption

- SOC 2: not publicly stated

Integrations & Ecosystem

- TensorFlow, PyTorch, OpenAI embeddings

- RESTful and gRPC APIs

- Cloud storage and orchestration tools

Support & Community

- Open-source community, vendor support for enterprise editions.

#4 — Qdrant

Short description: Qdrant is a vector database optimized for high-performance similarity search, embeddings management, and AI-powered applications.

Key Features

- REST and gRPC APIs for vector operations.

- Real-time indexing and low-latency search.

- Cloud and self-hosted deployment options.

- Support for high-dimensional vectors.

- Integration with ML frameworks and AI pipelines.

Pros

- Open-source and flexible deployment options.

- Optimized for real-time similarity search.

Cons

- Enterprise support requires subscription.

- Scaling large datasets may require tuning.

Platforms / Deployment

- Windows / Linux / Cloud

- On-premises / Cloud / Hybrid

Security & Compliance

- TLS encryption, role-based access

- SOC 2: not publicly stated

Integrations & Ecosystem

- HuggingFace, OpenAI embeddings, PyTorch, TensorFlow

- Cloud deployment on AWS, GCP, Azure

- API-driven integration

Support & Community

- Community support and enterprise support options.

#5 — Vespa

Short description: Vespa is an open-source search and vector engine designed for combining semantic search, vector search, and structured data at scale.

Key Features

- Distributed vector and text search engine.

- Real-time indexing and query processing.

- Combines structured filtering with vector similarity.

- Cloud and on-prem deployment.

- Supports embeddings and ML model integration.
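
Combining structured filtering with vector similarity can be sketched in a few lines of plain Python. This is a conceptual illustration only, not Vespa's query language or API; the catalog entries, field names, and the `filtered_search` helper are hypothetical. The sketch applies the structured predicate first and then ranks the surviving items by cosine similarity.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def filtered_search(query, items, predicate, k=3):
    """Pre-filter on structured fields, then rank survivors by similarity."""
    survivors = [item for item in items if predicate(item["meta"])]
    survivors.sort(key=lambda item: cosine(query, item["vector"]), reverse=True)
    return [item["id"] for item in survivors[:k]]

# Hypothetical catalog: each item has an embedding plus structured metadata.
catalog = [
    {"id": "shoe-1", "vector": [0.9, 0.1], "meta": {"category": "shoes", "price": 40}},
    {"id": "shoe-2", "vector": [0.7, 0.3], "meta": {"category": "shoes", "price": 120}},
    {"id": "hat-1", "vector": [1.0, 0.0], "meta": {"category": "hats", "price": 15}},
]

# "Shoes under 100" excludes hat-1 even though its vector is the closest match.
hits = filtered_search(
    [1.0, 0.0],
    catalog,
    lambda m: m["category"] == "shoes" and m["price"] < 100,
)
print(hits)  # ['shoe-1']
```

Filtering before ranking keeps results consistent with the structured constraints. Real engines often interleave the two steps inside the index so that a selective filter does not force a full scan.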

Pros

- Powerful combination of vector and structured search.

- Highly scalable for large datasets.

Cons

- Complex setup and configuration.

- Requires expertise for performance tuning.

Platforms / Deployment

- Windows / Linux / Cloud

- On-premises / Cloud / Hybrid

Security & Compliance

- TLS encryption, access control

- SOC 2: not publicly stated

Integrations & Ecosystem

- Python, Java SDKs

- Cloud platforms: AWS, GCP

- AI frameworks for embeddings

Support & Community

- Open-source community and enterprise support.

#6 — Chroma

Short description: Chroma is a developer-focused vector database built for embedding storage, retrieval, and AI-powered semantic search.

Key Features

- Python-native API and SDK.

- Persistent vector storage.

- High-performance ANN search.

- Integration with LLM and AI pipelines.

- Cloud and on-prem deployment options.

Pros

- Easy to integrate for Python-based AI applications.

- Lightweight and developer-friendly.

Cons

- Limited enterprise support.

- Smaller community compared to Milvus or Weaviate.

Platforms / Deployment

- Windows / Linux / Cloud

- On-premises / Cloud / Hybrid

Security & Compliance

- TLS encryption

- SOC 2: not publicly stated

Integrations & Ecosystem

- OpenAI, HuggingFace, PyTorch

- Cloud deployments and orchestration

- API-driven integration

Support & Community

- Open-source support and commercial enterprise options.

#7 — Redis Vector

Short description: Redis Vector is an extension of Redis enabling vector similarity search, combined with its in-memory key-value engine for low-latency AI applications.

Key Features

- In-memory vector search for embeddings.

- Low-latency retrieval and caching.

- Integration with AI/ML pipelines.

- Clustering and replication support.

- APIs for multiple programming languages.

Pros

- Ultra-fast performance.

- Combines caching and vector search in one system.

Cons

- Memory-intensive for large datasets.

- Limited persistence compared to disk-based vector DBs.

Platforms / Deployment

- Windows / Linux / Cloud

- On-premises / Cloud / Hybrid

Security & Compliance

- TLS encryption, role-based access

- SOC 2: not publicly stated

Integrations & Ecosystem

- Python, Java, Node.js SDKs

- AI frameworks for embeddings

- Cloud and orchestration tools

Support & Community

- Open-source community and enterprise Redis support.

#8 — Faiss

Short description: Faiss is a library for efficient similarity search and clustering of dense vectors, widely used for AI embedding search.

Key Features

- High-performance vector similarity search.

- GPU acceleration support.

- Supports large-scale datasets.

- Multiple ANN indexing algorithms.

- Integration with ML pipelines.

Pros

- Extremely fast and optimized for embeddings.

- Flexible open-source framework.

Cons

- Not a full database system; requires integration for persistence.

- More developer expertise required.

Platforms / Deployment

- Linux / Cloud

- On-premises / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python, C++, PyTorch, TensorFlow

- Embedding pipelines

- APIs and custom storage layers

Support & Community

- Open-source community.

#9 — Vespa.ai Cloud

Short description: Cloud-hosted Vespa offering vector and semantic search as a managed service, optimized for AI applications.

Key Features

- Fully managed cloud vector database.

- ANN search and structured data queries.

- High availability and scaling.

- API access for embeddings and AI models.

- Real-time indexing and query execution.

Pros

- Managed service reduces operational overhead.

- Scalable for large AI workloads.

Cons

- Limited control over infrastructure.

- Vendor lock-in to Vespa cloud.

Platforms / Deployment

- Cloud-native

- Cloud (AWS, GCP)

Security & Compliance

- TLS encryption

- Not publicly stated

Integrations & Ecosystem

- Python, Java SDKs

- ML/LLM pipelines

- Cloud analytics and orchestration

Support & Community

- Vendor support and documentation.

#10 — Qdrant Cloud

Short description: Managed Qdrant vector database providing cloud-native deployment with high-performance similarity search and AI integration.

Key Features

- Fully managed vector database.

- ANN search and filtering.

- High availability and scalability.

- Integration with LLM pipelines and ML frameworks.

- RESTful and gRPC API access.

Pros

- Fully managed, easy to integrate.

- Optimized for AI embeddings.

Cons

- Subscription required for enterprise features.

- Limited flexibility compared to self-hosted.

Platforms / Deployment

- Cloud-native

- Cloud (AWS, GCP, Azure)

Security & Compliance

- TLS encryption, role-based access

- ISO 27001, SOC 2

Integrations & Ecosystem

- OpenAI, HuggingFace, PyTorch, TensorFlow

- APIs and SDKs

- Cloud orchestration

Support & Community

- Vendor enterprise support and documentation.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Pinecone | AI embeddings | Cloud | Cloud-native | Managed vector search | N/A |
| Weaviate | Semantic search | Windows / Linux / Cloud | Cloud / On-prem / Hybrid | Semantic graph queries | N/A |
| Milvus | Large-scale embeddings | Windows / Linux / Cloud | On-prem / Cloud / Hybrid | High-performance ANN search | N/A |
| Qdrant | AI developers | Windows / Linux / Cloud | On-prem / Cloud / Hybrid | Cloud & self-hosted | N/A |
| Vespa | Enterprise AI search | Windows / Linux / Cloud | On-prem / Cloud / Hybrid | Vector + structured search | N/A |
| Chroma | Python & AI apps | Windows / Linux / Cloud | On-prem / Cloud / Hybrid | Python-native | N/A |
| Redis Vector | Real-time AI | Windows / Linux / Cloud | On-prem / Cloud / Hybrid | In-memory vector search | N/A |
| Faiss | ML pipelines | Linux / Cloud | On-prem / Cloud | GPU-accelerated similarity search | N/A |
| Vespa.ai Cloud | Managed AI search | Cloud-native | Cloud | Cloud-managed vector + structured search | N/A |
| Qdrant Cloud | Managed vector DB | Cloud-native | Cloud | Fully managed cloud service | N/A |

Evaluation & Scoring of Vector Database Platforms

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Pinecone | 9 | 8 | 8 | 8 | 9 | 8 | 7 | 8.2 |
| Weaviate | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.6 |
| Milvus | 9 | 7 | 8 | 8 | 9 | 7 | 7 | 8.0 |
| Qdrant | 8 | 8 | 8 | 8 | 8 | 7 | 7 | 7.8 |
| Vespa | 9 | 7 | 7 | 8 | 9 | 7 | 7 | 7.8 |
| Chroma | 8 | 9 | 7 | 8 | 8 | 6 | 7 | 7.7 |
| Redis Vector | 8 | 8 | 7 | 8 | 9 | 7 | 7 | 7.7 |
| Faiss | 9 | 7 | 7 | 8 | 9 | 6 | 7 | 7.7 |
| Vespa.ai Cloud | 8 | 8 | 7 | 8 | 8 | 7 | 7 | 7.6 |
| Qdrant Cloud | 8 | 8 | 7 | 8 | 8 | 7 | 7 | 7.6 |
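
The weighted total is each category score multiplied by its column weight, summed across categories. A minimal sketch of that arithmetic follows, using the Qdrant row as input; the dictionary keys and the `weighted_total` helper are names chosen here for illustration.

```python
# Column weights from the table header; they should sum to 1.0 (100%).
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}

def weighted_total(scores):
    """Sum of score * weight over every category."""
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)

# Scores taken from the Qdrant row of the table.
qdrant = {
    "core": 8,
    "ease": 8,
    "integrations": 8,
    "security": 8,
    "performance": 8,
    "support": 7,
    "value": 7,
}
total = weighted_total(qdrant)
print(round(total, 2))  # approximately 7.75
```

Recomputing totals this way is a quick check when you adjust the weights to match your own priorities, for example by raising Security for regulated workloads.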

Which Vector Database Platform Is Right for You?

Solo / Freelancer

Chroma, Redis Vector, or Faiss suit personal AI/ML projects and small-scale embedding storage.

SMB

Weaviate or Qdrant provide flexible deployment with cloud or hybrid options for moderate workloads.

Mid-Market

Pinecone, Milvus, or Vespa offer enterprise-grade performance and scalability for multi-region deployments.

Enterprise

Pinecone Enterprise, Vespa.ai Cloud, or Qdrant Cloud are ideal for mission-critical, high-volume embeddings and AI pipelines.

Budget vs Premium

Open-source options like Milvus, Weaviate, Faiss, or Chroma are cost-effective. Managed cloud services offer convenience, scalability, and enterprise-grade SLAs at a higher cost.

Feature Depth vs Ease of Use

Managed platforms (Pinecone, Qdrant Cloud) simplify deployment but provide less control. Open-source platforms allow deep customization but require operational expertise.

Integrations & Scalability

Managed and cloud-native vector databases integrate with AI/ML frameworks, LLM pipelines, and cloud orchestration. Enterprise deployments scale across regions and workloads.

Security & Compliance Needs

Select platforms offering encryption at rest/in transit, role-based access, audit logging, and compliance support for ISO 27001, SOC 2, and GDPR.

Frequently Asked Questions (FAQs)

1. What is a vector database?

A database optimized for storing, indexing, and searching high-dimensional vector embeddings.

2. How is it different from traditional databases?

Vector databases are designed for similarity search and AI workloads, whereas relational databases are built for exact-match queries over structured data.

3. Which AI use cases need vector databases?

Semantic search, recommendation engines, LLM embeddings, computer vision, and NLP applications.

4. Can vector databases scale horizontally?

Yes, most modern vector DBs like Milvus, Pinecone, and Weaviate scale across nodes and regions.

5. Are these databases secure?

Managed platforms typically provide encryption, role-based access, and audit logging out of the box; self-hosted open-source deployments may require you to configure these yourself.

6. Can I integrate them with LLM pipelines?

Yes, all major vector databases support embeddings generated from LLMs or ML frameworks.

7. Are open-source vector databases reliable?

Yes, Milvus, Weaviate, and Faiss are widely used with active communities.

8. Do they support cloud deployments?

Most provide managed services on AWS, GCP, or Azure, along with hybrid or self-hosted options.

9. How is query performance optimized?

Using approximate nearest neighbor (ANN) algorithms, GPU acceleration, and indexing structures.

10. Which platform is best for real-time applications?

Redis Vector and Pinecone provide low-latency real-time similarity search.

Conclusion

Vector Database Platforms are essential for AI-driven applications that rely on embeddings, semantic search, and similarity analysis. Open-source platforms like Milvus, Weaviate, and Faiss offer flexibility and cost-effectiveness for developers and SMBs, while managed cloud-native solutions like Pinecone, Qdrant Cloud, and Vespa.ai Cloud provide enterprise-grade scalability, high availability, and simplified integration with ML/LLM pipelines. Selecting the right platform depends on workload size, deployment preferences, AI integration needs, and operational expertise. As next steps, shortlist suitable platforms, pilot them with your own embeddings, and validate performance, security, and integration before full deployment.
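
One way to validate search quality during a pilot is to measure recall@k: the share of the true nearest neighbors (from an exact brute-force search) that the platform's approximate search also returns. The sketch below assumes you have already collected both result lists per test query; the document ids and the helper names are hypothetical.

```python
def recall_at_k(approx_ids, exact_ids, k):
    """Fraction of the true top-k neighbors that the ANN result also returned."""
    exact_top = set(exact_ids[:k])
    hits = sum(1 for item in approx_ids[:k] if item in exact_top)
    return hits / k

def mean_recall(results, k):
    """Average recall@k over (approx_ids, exact_ids) pairs, one per test query."""
    return sum(recall_at_k(a, e, k) for a, e in results) / len(results)

# Hypothetical pilot results: ids returned by the candidate platform versus
# an exact brute-force search over the same embeddings.
pilot = [
    (["d1", "d2", "d9"], ["d1", "d2", "d3"]),  # 2 of 3 true neighbors found
    (["d4", "d5", "d6"], ["d6", "d4", "d5"]),  # all 3 found (order ignored)
]
score = mean_recall(pilot, k=3)
print(round(score, 3))  # 0.833
```

Tracking recall alongside query latency on your own data gives a more reliable comparison than generic benchmarks, since both depend heavily on embedding dimensionality and index settings.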